1. Introduction

Information regarding tree species functions as the fundamental data for forest management. The accurate identification of forest tree species is crucial for inventorying forest resources, estimating carbon storage on forest grounds, effectively managing forest resources, and for the timely monitoring of species diversity. Numerous continuous narrow spectral bands and high spatial resolution of hyperspectral image (HSI) can provide continuous spectral information for each pixel [

1], which plays a crucial role in the accurate classification of tree species [

2,

3]. Forest-type identification is a vital aspect of the application of hyperspectral imagery [

4,

5,

6]. HSI provide abundant spectral information. However, they are prone to the Hughes [

7] phenomenon whereby the classification accuracy first increases and then decreases as the number of bands in the analysis increases. Therefore, for the dimensionality reduction of airborne hyperspectral data, the representative spectral–spatial features should be extracted [

8]. Feature transformation is a dimensionality reduction method in which multiple feature values of the original high-dimensional feature space are mapped onto a low-dimensional space, based on a specific principle and using certain mathematical operations, similar to the principal component analysis (PCA) method [

9]. Sidike et al. [

10] used a progressively expanded neural network to gradually classify HSI. They also used the PCA dimensionality reduction method to reduce computational complexity and avoid dimensional disasters, by extracting features for each band. Another method is band selection [

11], which is based on the original information of the image. This method selects some of the most representative bands from the original band according to a specific criterion, similar to the random forest (RF) [

12,

13] method. Wu et al. [

14] eliminated hyperspectral features using the RF method and further improved the classification accuracy and performance of the support vector machine classifier. However, regardless of whether feature transformation or band selection is employed, information loss owing to dimensionality reduction is inevitable. Therefore, the impact of dimensionality reduction on specific data should be investigated. The fine spectral and spatial characteristics of airborne hyperspectral data are beneficial for tree species identification. However, the problem of reduced classification efficiency and information redundancy caused by a large data volume is particularly prominent. Notably, it limits the application of airborne HSI in regional classification mapping [

15,

16]. Thus, exploring new classification methods and seeking a balanced solution between making full use of effective information, improving classification time efficiency, and ensuring classification accuracy are crucial for the regional mapping of complex forest stands and tree species. However, only a few studies have been conducted in this regard.

Deep learning can extract advanced and semantic features [

17,

18]. Moreover, its objective function directly focuses on classification and automatically completes feature extraction from the data and the training of the classifier [

19]. Hence, deep learning has become a research hotspot for HSI classification [

20,

21,

22]. However, the topic is still challenging. In the application of deep learning classification, the ability to achieve feature representation relies on a large number of training samples [

23]. Deep learning classification of small samples may cause network overfitting, which is the network model performs very well on the training set, and nearly perfectly predicts and distinguishes all the data, but shows substantial differences on the new test set. Commonly used methods to solve network overfitting include increasing the number of samples, adding regularization to the network structure or dropout to the network structure, and terminating model training early. Among them, regularization [

24] and dropout [

25] are effective solutions to eliminate model overfitting. In particular, L2 regularization can be regarded as the regularization of the loss function, which is used to impose restrictions on some parameters in the loss function. Dropout refers to allowing the activation value of a certain neuron to cease working with a certain probability during forward propagation. Only a specific number of neurons are involved in each training cycle, while other neurons are not included. In forests, sample collection is hindered because of the complex terrain and stand structure. Further, the heterogeneity of the structure, composition of the tree species, and similarity of the image features render sample labeling tasks difficult for the classification of forest tree species. Therefore, the problem of tree species classification based on a deep learning method for small-sample sets should be addressed urgently [

26,

27,

28]. Pan et al. [

29] designed a new network called vertex component analysis network for deep feature extraction from the smoothed HSI. Moreover, this method achieves higher accuracy when the number of training samples is not abundant. Xu et al. [

30] proposed a random patches network for HSI classification, which directly regards the random patches obtained from the image as the convolution kernels without any training.

The convolutional neural network (CNN) is one of the most popular deep learning methods, exhibiting significant advantages in image classification [

31]. The significant improvement in computing power in recent years has resulted in the rapid development of many CNNs, such as AlexNet [

32], VGGNet [

33], MobilenetV1 [

34], and MobilenetV2 [

35]. However, these CNNs have complex network structures, which include a large number of layers, such as convolutional layers and pooling layers. This complex network structure consumes significant amounts of valuable computing and memory resources and wastes training time. Recent studies on HSI classification have proved that the three-dimensional convolutional neural network (3D-CNN) model is superior to other CNNs [

36], and it can solve high-dimensional classification problems for large data sets with high spatial and spectral resolution [

37]. The prototypical network (PrNet) has been proven to be effective for solving small-sample classification [

38]. PrNets learn a metric space in which classification can be performed by computing distances to prototype representations of each class. It can allow neurons to use prior knowledge to adjust the model according to the new task [

39]. Specifically, a simple classifier is generated dynamically in the new training stage, and the core embedding is fixed after the training [

40]. The PrNet is a metric learning approach. The basic idea of metric learning is to learn the distance function between images [

41]. The PrNet extracts features from the image, calculates the average value of the image embedding for each category, and obtains the prototype of each category. The classification can be performed by calculating the distance of the image embedded in the prototype. Thus, the distance function is crucial. In this regard, Euclidean distance is the most effective distance function [

42].

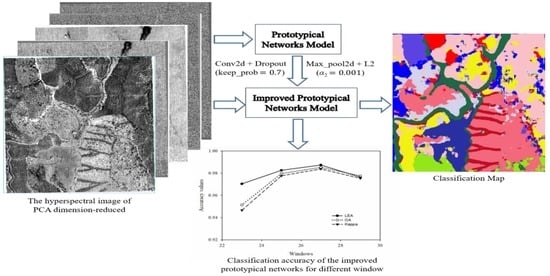

The PrNet has a simple network structure and is suitable for small-sample learning problems. Based on the large and fine spatial spectrum characteristics of the airborne HSIs, in this study, we use the PrNet to classify tree species. Due to the differences in quantities and characteristics of the information obtained from different data sources, the data source affects the classification performance of the PrNet. Therefore, based on the volume and characteristics of information from a specific data source, we study how different training samples affect the classification accuracy and efficiency of the PrNet classification, which is a crucial aspect. Moreover, under the same experimental conditions, we compare the classification performance of 3D-CNN and PrNet to verify whether PrNet has advantages in small-sample conditional classification. The major contributions of the proposed PrNet can be summarized as follows:

Improve the structure and parameter adjustment of a PrNet such that it is more suitable for the tree species classification of a small sample of airborne HIS.

Analyze the effect of data dimensionality reduction on the classification accuracy and the network operation efficiency.

Proposes a sample size suitable for PrNet to classify tree species of airborne HIS.

2. Materials and Methods

2.1. Study Area

The land area of the Gaofeng forest farm in Nanning City, Guangxi Province, China, is 59,000 hm

2. The complex forest stands and understory conditions pose challenges for tree species classification. In this study, the subfields of the Gaofeng forest farm (108°22′1″–108°22′30″ E, 22°57′42″–22°58′13″ N) were selected as the research area (

Figure 1). The land area within the study area is 74.1 hm

2, the regional altitude is 149–263 m, and the landform types are hills and low mountains. The study area belongs to the south subtropical monsoon climate region. The annual average temperature is 21.6 °C, extreme maximum temperature is 40.0 °C, extreme minimum temperature is −2 °C, and accumulated temperature is 7500 °C. The average annual rainfall is 1304.2 mm, and the average relative humidity is 79%. The study area is dominated by middle-aged and near-mature forests. The study of remote sensing tree species classification in this area is crucial for the classification and mapping of forests with complex structures and compositions.

2.2. Classification System and Survey of Field Sample Points

The pure

Cunninghamia lanceolata (

C. lanceolata, CL) forest in the study area is a model forest that is undergoing natural transformation. Over the past 20 years, the region has adjusted the stand structure by gradually transforming the artificial pure forest into a coniferous and broadleaf forest to cultivate

C. lanceolata (CL) and

Castanopsis hystrix (

C. hystrix, CH) large-diameter timber and to improve the economic benefits of the forest stand, forest quality, and ecology features. The study area also includes a demonstration garden of fast-growing and high-yielding tree species, with

Eucalyptus robusta and

Pinus as the main species [

43]. In 2014, the

Taxus chinensis and

Manglietiastrum sinicum were intercropped under the canopy. However, because of the late planting time, the average stand height was between 1.5 and 0.78 m, which is generally located under the

C. lanceolata (CL) and

C. hystrix (CH) forest, and as a result, the optical remote sensing images cannot obtain the spectral reflectance features. Thus, 11 categories in the study area are used for classification, including nine main tree species, cutting sites, and roads. Coniferous species include

C. lanceolata (CL),

Pinus elliottii (

P. elliottii, PE), and

Pinus massoniana (

P. massoniana, PM). The broadleaf species include

Eucalyptus urophylla (

E. urophylla, EU),

Eucalyptus grandis (

E. grandis, EG),

C. hystrix (CH),

Acacia melanoxylon (

A. melanoxylon, AM),

Mytilaria laosensis (

M. laosensis, ML), and other soft broadleaf species (SB).

The field survey was conducted in the Gaofeng Forest Farm, between 16 January 2019 and 5 February 2019. First, Gaofen-2 satellite images were interpreted at a resolution of 1 m. According to the principle of uniform distribution, the plots were arranged for the area covered by remote sensing. Some areas with complex terrain could not be arranged into plots. Thus, 19 square survey plots of 25 m × 25 m each were delineated. Seven plots comprised pure

C. lanceolata (CL) forests, three plots comprised

E. urophylla (EU) forests, and three plots comprised

E. grandis (EG) forests. The remaining six plots contained other stands and mixed forests. The tree species included

C. lanceolata (CL),

P. massoniana (PM),

E. urophylla (EU),

E. grandis (EG), and

C. hystrix (CH). The position of individual trees was taken as the actual sample point. The data regarding the positions of individual trees consisted of two parts: the position within the plot, measured using a China Sanding STS-752 Series Total Station (Nanjing Hanzhong Mapping Equipment Co. Ltd., Jiangsu, China), and the position outside the plot, measured using a hand-held GPS (South Group, Guangzhou). The positioning accuracy of the sampling points is demonstrated in the work of Wu and Zhang [

14]. For the categories with too many sample points, the edges of the sample points with an excessively dense distribution were removed to render the number of sample points for each feature category consistent and evenly distributed. For the categories with too few sample points, the points on the study area images were manually marked based on the field survey GPS positioning points and digital orthophoto map (DOM, resolution: 0.2 m). Hence, each category held 112 sample points, for a total of 1232 points (

Figure 1).

2.3. Acquisition and Preprocessing of Hyperspectral Images

The airborne HSIs were acquired at noon under a cloudless sky on 13 January 2019 and 30 January 2019. The hyperspectral equipment was housed in a LiCHy system (Integrated Geospatial Innovations, Kreuztal, Germany) that integrates light detection and ranging (LiDAR), a charge-coupled device camera, an AISA Eagle II hyperspectral sensor, and an inertial measurement unit (IMU). The LiCHy system is a push broom imaging system, and it covers the spectral ranges from 400 to 1000 nm. The aircraft carrying the hyperspectral equipment had a flight speed of 180 km·h

−1, a relative altitude of 750 m, an absolute altitude of 1000 m, and a route spacing of 400 m. The HSIs were composed of a geometrically corrected radiance, including 125 bands with wavelengths ranging from 400.76 to 987.21 nm. The size of the hyperspectral imagery in the study area was 914 × 1056 × 125 (Height × Width × Band).

Table 1 summarizes the detailed parameters of the hyperspectral sensors.

This study used the MODTRAN 4+ radiation transfer model [

44] supported by ENVI 5.3 to perform atmospheric correction on the HSIs. The model can correct the cascade effect caused by diffuse reflection and adjust the spectrum smoothing caused by artificial suppression. Due to the complex terrain of the study area, the remote sensing images were affected by the orientation of the sensor, the height and orientation of the sun, and uneven brightness values. Thus, terrain correction was performed on the HSIs based on the digital elevation model (DEM) generated from the synchronously acquired LiDAR data. Changes to the image radiance values caused by terrain fluctuation were eliminated so that the image better reflected the spectral characteristics of the ground. The Savitzky–Golay filter was used to smooth the spectral data to effectively remove the noise caused by various factors [

45].

PCA and RF methods were used to reduce the dimensionality of the HSIs in the study area. After PCA dimension reduction, the first five principal components contained more than 99% of the information of all bands. Therefore, these components were used as the data sources after PCA dimension reduction. Furthermore, RF can calculate the importance of each frequency band and arrange them in descending order. Based on the average reduction accuracy, the difference between the importance values of the two adjacent bands in the first eight bands was >0.1. Starting from the ninth band, the difference between the importance values of the two adjacent bands was <0.1, indicating only a slight difference in importance when starting from the ninth band. Therefore, the first eight bands were used as the data sources after RF dimension reduction. These included Band 33 (546.05 nm), Band 34 (550.70 nm), Band 35 (546.05 nm), Band 36 (555.35 nm), Band 37 (564.68 nm), Band 71 (726.10 nm), Band 82 (779.13 nm), and Band 96 (846.88 nm).

2.4. Sample Data Construction

The HSI, PCA dimension-reduced image (HSI_PCA), and RF dimension-reduced image (HSI_RF) were used as individual data sources. The open source geospatial data abstraction library framework was used to crop the sample data. First, the latitude and longitude coordinates of each measured sample point and their classification label attributes were read. Thereafter, the latitude and longitude coordinates were converted into screen coordinates (sample data are in pixels). The screen coordinates were used as input parameters to obtain the cropped area of the HSI data (Height × Width × Band, or H × W × B), and the sample was cropped from the data source according to a certain window size. The slice data were generated by creating an ENVI standard format file, which was used as the input data source of the PrNet.

Figure 2 shows the flowchart of the sample data clipping algorithm [

29]. The sample data were divided into training and test samples in ratios of 80% and 20%, respectively.

2.5. Prototypical Network Construction

2.5.1. Prototypical Network Model

The classification principle of the PrNet is that the points of each class are clustered around a prototype. Specifically, the neural network learns the nonlinear mapping of the input to the embedding space and uses the average value of the support set as the prototype of its class in the embedding space. Next, the nearest class prototype is found to classify the embedded query points.

Figure 3 shows the schematic representation of the PrNet [

40]. The figure shows samples in the projection space with three categories (C1, C2, and C3). The distance between samples of the same category was relatively close. The average of these sample features was used as the category prototype (black dots in

Figure 3). To perform classification on the unlabeled sample X, the sample was projected into the space, and the prototype closest to X was calculated. Finally, X was considered to be in the category represented by the prototype (C2).

In the PrNet classification,

N samples are considered for the training set

S, Equation (1), and each sample corresponds to a type of sample label.

where

xi∈

RD is the

D dimensional feature vector of a sample,

yi∈{1, …,

K} is the corresponding sample label, and

Sk serves as a training set with the sample label

k. The training set of each class is further divided into a support set and a query set. The support set was used to calculate the prototype, and the query set was used to optimize the prototype. If there are A classes, and each class has B samples as the support set, it is A-way-B-shot.

The PrNet embeds the function

f∅RD→

RM (∅ is the learning parameter), calculates the

M dimension of each class, and represents

ck∈

RM as the class prototype. Each prototype is the average of the vectors obtained by the embedding function of the support set samples of its class Equation (2).

Set the distance function as

d:

RM ×

RM→ [0, +∞]. In this study, the distance function is expressed as the square of the Euclidean distance ||

z-

z′||

2.The PrNet calculates the distance from the query, sets

x to the prototypical in the projection of the embedded space through the distance function, and then uses the Softmax function to calculate the probability of a sample belonging to this category Equation (3).

The loss function uses a negative log-likelihood function Equation (4) and stochastic gradient descent (SGD) to minimize the loss function of the real category

k and randomly select categories from the training set. Subsequently, a subset of the training set was selected as the support set within each category. Finally, one of the remaining categories was selected as a query point for training data input.

Figure 4 shows the flowchart of the loss calculation algorithm for each training.

where

N is the number of training set samples,

K is the number of training set categories,

N_

C≤

K is the number of categories for each training,

N_

S is the number of support set samples for each category, and

N_

Q is the number of samples in the query set of each category. In addition, RANDOMSAMPLE (

S,

N) represents a random and non-repetitive set of

N elements selected from the set

S.

2.5.2. Prototypical Network Classification Algorithm and Improvement

Slice data with a window size of S × S were used as the input data to the PrNet. The number of convolution blocks (Layer 1…Layer N, Layer last) in the architecture of the embedded function varies according to the size of the cropped data window. Each convolutional structure consists of a convolutional layer (Conv2d), batch normalization layer (Batch_norm), nonlinear activation function (Relu), and maximum pooling layer (Max_pool2d). The fully connected layer (Flatten) uses the output (1 × 1 × F) of the last convolutional structure as input and converts it into F eigenvalues (

Figure 5). The Euclidean distance from the query set to each prototypical in the projection space was estimated, and the Softmax activation function Equation (3) was used to calculate the probability of a sample belonging to each category.

In this study, the output space dimension (F) of the convolutional layer was 64, and the size of the convolution kernel was 3 × 3. The size of the pooling core of the largest pooling layer was 2 × 2. This network structure produced a 64-dimensional output space (F). The same embedding function was used to operate the support set and query set. Both sets were then used as input parameters for loss and accuracy calculation. All models were trained using Adam-SGD, and the initial learning rate was 10−3. The learning rate was halved every 2000 training cycles. We employed the Euclidean distance as a metric function to train the PrNet.

In existing research on the recognition of text using PrNets, images of text are commonly rotated and scaled to increase the size of the training set [

40,

41]. This study classifies forest tree species without rotating and zooming the images, and a small training set may cause overfitting of the network. Therefore, we proposed an improved method to eliminate possible network overfitting. In the PrNet classification framework, the largest pooling layer of each convolutional structure was used to connect the next convolutional structure and provide its input. Therefore, L2 regularization (Kernel: L2) of the convolution kernel was added to the convolutional layer, and dropout (the probability of neuron deactivation is 1-Keep_prob) was added to the maximum pooling layer as an improved PrNet (IPrNet) to classify HSI. Equation (5) shows the loss calculation function of the training model IPrNet. The improved model was more generalized and not dependent on certain local features.

Figure 5 presents the classification process.

where −log

p∅ (

y =

k│

x) is the loss function,

α2 is the regularization coefficient, and

w is the weight vector.

2.5.3. Accuracy Verification

The classification accuracy includes training accuracy and test accuracy. Training accuracy is expressed using last epoch accuracy (LEA), which is the average overall accuracy of each iteration. The test accuracy is expressed using the overall accuracy (OA), Equation (6) and the Kappa coefficient (Kappa), Equation (7).

where

Xii is the number of correct classifications of a category in the error matrix,

X+i is the total number of true reference samples of that category,

Xi+ is the total number of categories classified into this category, and

M is the total number of samples.

2.6. Three-Dimensional Convolutional Neural Network Construction

In this study, we developed a classification framework based on the 3D-CNN model proposed by Zhang [

46] (

Figure 6). Five convolutional structures were considered, each of which includes a convolutional layer (Conv3d, padding = “same,” kernel = 3 × 3 × 3), nonlinear activation function (Relu), and batch normalization layer (Batch_norm). The first and last convolution structures set a maximum pooling layer (Max_pool3d), which was used to rapidly reduce the data dimension. Subsequently, we flattened the feature output by the last 3D convolutional layer and transformed the 3D feature cube into features with dimensions of 1 × 128 through the first hidden layer (Dense). These feature vectors passed the Relu linear activation function and entered the second hidden layer (Dense). Moreover, they obtained the features whose dimensions are consistent with the number of categories (11). We used the Softmax activation function to calculate the probability of belonging to each category as the basis for classification. Additionally, to avoid model overfitting, the last convolution structure dropout was added after the output of the first hidden layer and before the second hidden layer, and the Keep_prob was set to 0.7.

Table 2 presents the output dimensions and training parameters of each model layer.

2.7. Experiments Design

Four experiments were designed to verify the classification performance of the IPrNet in the small-sample dataset and to verify the generalization ability of the network using different window sizes and dimensionality reduction methods. Additionally, 3D-CNN was used to classify tree species to verify whether the IPrNet is advantageous in the classification of small-sample tree species.

- (1)

Experiment A: Classification accuracy of the PrNet using different sample windows.

The HSI_PCA was sampled using different window sizes, after which it was sent to the PrNet for classification. To determine whether the PrNet had overfitting before the improvement, this experiment used the unimproved PrNet for classification. The window size started from 3 pixels, increased in steps of 2 pixels, and was set according to the rules of 3 × 3, 5 × 5, 7 × 7, etc., until the cut sample exceeded the study area. Then, the classification accuracy of samples in different windows were compared to find the optimal window. Moreover, if there was a significant difference between the training accuracy (LEA) and the test accuracy (OA, Kappa), it indicates that the network had obvious overfitting.

- (2)

Experiment B: Classification accuracy of the IPrNet using different Keep_prob values.

The HSI_PCA was used as the data source, using IPrNet for classification. The regularization coefficient was set to 0.001, and Keep_prob was set to 0.5, 0.7, and 0.9. The sample window is defined as the window with the highest classification accuracy in experiment A. The experimental results were compared to find the optimal Keep_prob values.

- (3)

Experiment C: Classification accuracy of the IPrNet using different data sources.

The HSI, HSI_PCA, and HSI_RF was used as different data sources. The sample window and Keep_prob were set according to the result with the highest classification accuracy in experiment B. Subsequently, we compared the classification accuracy and the network operation efficiency of the IPrNet for different data sources.

- (4)

Experiment D: Classification accuracy of the 3D-CNN under the same conditions as Experiment C.

Taking the best sample window of experiment A, the best Keep_prob values of experiment B, and the best data source of experiment C as the experimental conditions, and using 3D-CNN for classification, we compared the classification results of 3D-CNN and IPrNet to verify whether IPrNet had advantages in the classification of small-sample sets.

The increase in the number of network training iterations did not improve the test accuracy of the model. Therefore, the initial number of epochs/iterations was 20/50, and the iterations increased in steps of 50. The optimal number of iterations was tested in turn as a basis for terminating model training.

5. Conclusions

In this study, an improved prototypical network (IPrNet) was proposed. It performed better than 3D-CNN in the classification of forest tree species with a small-sample dataset. Comparing with 3D-CNN model, IPrNet training required fewer weight coefficients. Using Bregman divergence as the proximity measurement function, the convergence, and local minimum of the clustering algorithm were the same as the usual K-means. The Euclidean distance function satisfied the Bregman divergence. When the IPrNet is connected with clustering, it is reasonable to use the class means as the prototype for clustering, which made it easier to classify samples in space. The classification of airborne HSIs of nine complex forest tree species, cutting site, and roads in a 74.1 hm2 study area required only 1290’s. The OA reached 98.53%, and the Kappa reached 0.9838, which is suitable for regional tree species classification and mapping.

The different window samples were input into the IPrNet for classification, where an optimal classification window existed. The window size should be determined according to the area size and forest stand distribution pattern. Furthermore, the improved method of adding L2 regularization to the convolutional layer and dropout to the maximum pooling layer could effectively address the network overfitting. In actual classification applications, the IPrNet can effectively solve the classification problem of small-sample sets.

The IPrNet classified the tree species of airborne HSIs without dimensionality reduction, the results had a long training time and low test accuracy. After the dimensionality reduction of airborne HSIs, the training time was halved, and the test accuracy was significantly increased. Moreover, the classification accuracy of the HSI_PCA was higher than that of the HSI_RF, and the network training time of the HSI_PCA was shorter than that of the HSI_RF. Thus, using the IPrNet to classify the HSI after PCA dimensionality reduction can achieve desirable classification results.