The following is an introduction to the LSTM model.

2.3.1. Long Short-Term Memory (LSTM) Networks

An LSTM network is a special type of recurrent neural network (RNN). Therefore, before introducing LSTM, we briefly introduce RNNs: their advantages over traditional neural networks in processing time-series data, and their shortcomings with respect to long-term memory (the problem that LSTM networks were proposed to solve).

Recurrent Neural Networks (RNNs)

A traditional neural network is fully connected from the input layer to the hidden layers and then to the output layer; however, the neurons within each layer are not connected to one another, and the network keeps no information from earlier inputs, which leads to large deviations in the results of traditional neural networks when processing sequence data [30]. Unlike traditional neural networks, recurrent neural networks (RNNs) can remember previous information and apply it to the calculation of the current output; that is, the hidden-layer neurons are connected across time steps, and the input of the hidden layer consists of the output of the input layer and the output of the hidden layer at the previous time step.

Because RNNs process sequential data, they are commonly trained with the back-propagation through time (BPTT) algorithm, which, like other gradient-based methods, iteratively moves the parameters along the negative gradient direction until convergence. However, to compute the partial derivative of the loss with respect to the parameters at a given time step, the information from all earlier time steps must be traced back, and the overall partial derivative is the sum of the contributions from all time steps. Once activation functions are included, the chain rule turns these partial derivatives into products of the derivatives of the activation functions (one factor per time step), which leads to vanishing or exploding gradients [31].
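The effect of these repeated products can be illustrated with a toy scalar RNN, $h_t = \tanh(w\,h_{t-1})$, in which inputs and biases are omitted purely for illustration (an assumption, not the model used in this study). The sketch below accumulates $\partial h_T / \partial h_0 = \prod_t w\,(1 - h_t^2)$ one time step at a time; the function name `bptt_gradient` is hypothetical.

```python
import math


def bptt_gradient(w: float, steps: int, h0: float = 0.0) -> float:
    """Return dh_T/dh_0 for the toy scalar RNN h_t = tanh(w * h_{t-1}).

    By the chain rule, dh_T/dh_0 is the product over t of w * (1 - h_t**2):
    the recurrent weight times the tanh derivative, once per time step.
    """
    h = h0
    grad = 1.0
    for _ in range(steps):
        h = math.tanh(w * h)
        grad *= w * (1.0 - h * h)  # local derivative dh_t/dh_{t-1}
    return grad


for w in (0.5, 1.0, 1.5):
    print(f"w = {w}: dh_50/dh_0 = {bptt_gradient(w, 50):.3e}")
```

Starting from $h_0 = 0$, where the tanh derivative equals 1, each step simply multiplies the gradient by $w$: after 50 steps it is about $10^{-15}$ for $w = 0.5$ (vanishing) and about $6 \times 10^{8}$ for $w = 1.5$ (exploding).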

Long Short-Term Memory (LSTM) Networks

To solve the problem of vanishing or exploding gradients in RNNs, Hochreiter and Schmidhuber [32] proposed LSTM in 1997. LSTM combines short-term and long-term memory through gating mechanisms, thus alleviating the above problems to a certain extent.

Figure 4 shows the basic structure of an LSTM cell. It has an input gate $\Gamma_i$, an output gate $\Gamma_o$, a forget gate $\Gamma_f$, and a memory cell (the cell state). $h_t$ is the hidden state at time step $t$, $x_t$ is the input of the network at time step $t$, and $\sigma$ is the sigmoid activation function. $V$, $W$, and $U$ are the weight matrices shared by the neurons across time steps.

An LSTM network protects and controls information through three “gates”:

- 1.

The forget gate determines which information in the previous cell state is discarded or retained. Based on the previous hidden state $h_{t-1}$ and the current input $x_t$, it outputs a value in [0, 1] for each element of the cell state, where 1 means the element is completely retained and 0 means it is completely discarded. The output of the forget gate is as follows (where the weight matrix and the bias vector of the gate carry the subscript of the corresponding gate):

- 2.

The input gate determines how much new information is added to the cell. A sigmoid layer decides which values are updated, and a tanh layer generates candidate values; combined with the output of the forget gate, these update the cell state. The input gate steps are:

- 3.

The output gate determines which part of the current cell state is used as the output; this is again done with the sigmoid and tanh functions. The output gate steps are:

Through these gating steps, the LSTM model can handle both long-term and short-term temporal dependencies.
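The three gates can be illustrated with a NumPy sketch of one time step of a widely used LSTM formulation (with a forget gate). It assumes that each gate has its own weight matrix acting on the concatenation $[h_{t-1}, x_t]$ plus its own bias vector; this parameterization may differ in notation from Figure 4 ($V$, $W$, $U$), and the names `lstm_step`, `init_params`, and the gate keys are hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def init_params(n_hidden, n_input, seed=0):
    """Hypothetical helper: one (W, b) pair per gate; W acts on [h_{t-1}, x_t]."""
    rng = np.random.default_rng(seed)
    return {
        gate: (rng.normal(scale=0.1, size=(n_hidden, n_hidden + n_input)), np.zeros(n_hidden))
        for gate in ("f", "i", "c", "o")
    }


def lstm_step(x_t, h_prev, c_prev, params):
    """One time step of a standard LSTM cell; returns (h_t, c_t)."""
    hx = np.concatenate([h_prev, x_t])
    W_f, b_f = params["f"]
    W_i, b_i = params["i"]
    W_c, b_c = params["c"]
    W_o, b_o = params["o"]
    gamma_f = sigmoid(W_f @ hx + b_f)  # forget gate: 1 keeps, 0 discards each element of c_prev
    gamma_i = sigmoid(W_i @ hx + b_i)  # input gate: how much new information is written
    c_tilde = np.tanh(W_c @ hx + b_c)  # candidate values from the tanh layer
    c_t = gamma_f * c_prev + gamma_i * c_tilde  # updated cell state
    gamma_o = sigmoid(W_o @ hx + b_o)  # output gate: which part of the cell state is exposed
    h_t = gamma_o * np.tanh(c_t)
    return h_t, c_t


# Usage: run 8 hourly steps of a single input feature (e.g. normalized wind speed).
params = init_params(n_hidden=4, n_input=1)
h, c = np.zeros(4), np.zeros(4)
for value in np.linspace(0.2, 0.6, 8):
    h, c = lstm_step(np.array([value]), h, c, params)
print(h)
```

With all weights and biases set to zero, every gate outputs 0.5 and the candidate is 0, so the cell state is simply halved at each step; this makes the multiplicative role of the forget gate easy to check.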

The steps of the LSTM model method are as follows:

Step (1): Data normalization

As stated in Section 2.1, we used the first 80% of the observation time series to train the model and the last 20% to test it.

Table 2 shows the statistics for the training set and the test set.

To ensure the input wind observations shared the same structures and time scales [

33], we used the maximum and minimum values in the training set to normalize the input of the model (scaling the data to 0–1), and we reverse normalized the output data (scaled the data from 0–1 back to normal data). The normalized data were computed with:

where

is the normalized feature value for time

.

is the maximum value of the training dataset for one feature.

is the real value at time

.

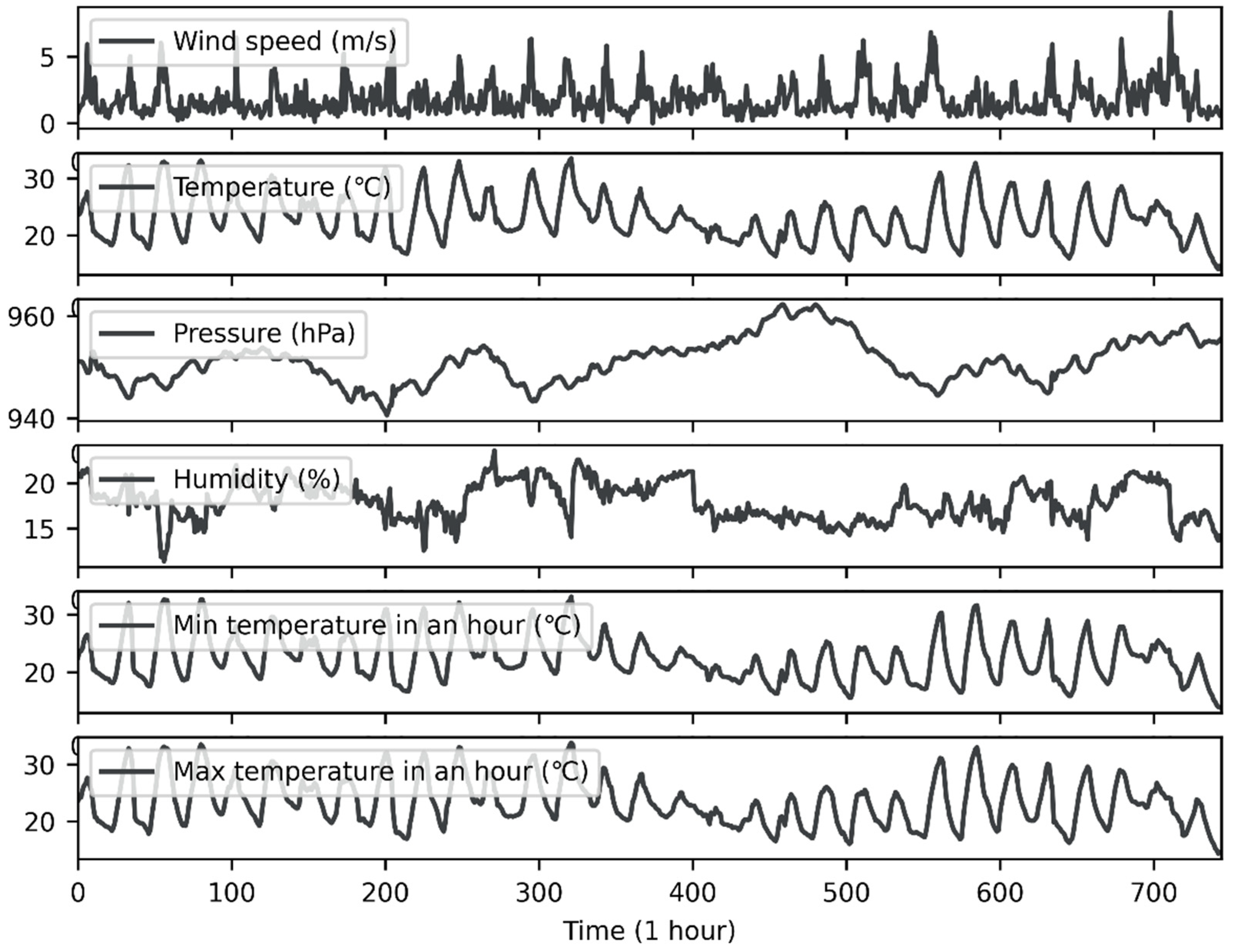

is the minimum value of the training data for one feature. The wind speed, temperature, pressure, humidity, minimum temperature, and maximum temperature were all normalized to 0–1 with the above equation.
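A minimal NumPy sketch of the split and normalization, assuming the observations are stored as a 2-D array with one row per hour and one column per feature (a hypothetical layout). The minimum and maximum are computed on the chronologically first 80% of rows only and then applied unchanged to the test rows, so normalized test values may fall slightly outside [0, 1]. The function names and the toy data are hypothetical.

```python
import numpy as np


def chronological_split(data, train_frac=0.8):
    """Keep time order: the first train_frac of rows for training, the rest for testing."""
    n_train = int(len(data) * train_frac)
    return data[:n_train], data[n_train:]


def fit_minmax(train):
    """Per-feature (per-column) minimum and maximum, from the training set only."""
    return train.min(axis=0), train.max(axis=0)


def minmax_normalize(x, x_min, x_max):
    """x' = (x - x_min) / (x_max - x_min)."""
    return (x - x_min) / (x_max - x_min)


# Toy hourly observations (hypothetical): columns are wind speed and temperature.
data = np.array([[1.0, 10.0],
                 [3.0, 20.0],
                 [2.0, 15.0],
                 [4.0, 30.0],
                 [5.0, 25.0]])
train, test = chronological_split(data)
x_min, x_max = fit_minmax(train)
train_n = minmax_normalize(train, x_min, x_max)
test_n = minmax_normalize(test, x_min, x_max)  # may leave [0, 1]
print(test_n)
```

A feature that is constant over the training set would make $x_{\max} - x_{\min} = 0$; such a column would need special handling before this formula is applied.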

Step (2): Data formatting

We used Keras, a third-party Python library, to set up the LSTM network. Here the LSTM is trained as a supervised learning model (supervised learning means that the training data must contain two parts: the sample data and the corresponding labels). The LSTM model in Keras therefore requires the data to be divided into two parts, input X and output Y, where X represents the sample data and Y represents the labels corresponding to the samples. For the time-series problem, the input X represents the observed data for the last several time points, and the output Y represents the value at the next time point to be predicted (for example, we input the wind speed observations from the last several hours into the model, and the model outputs the wind speed for the next hour). Therefore, both the training and test sets need to be formatted into two parts, X and Y. Equations (7) and (8) represent the data format:

$$X = [X_1, X_2, \ldots, X_n], \quad X_i = [y_i, y_{i+1}, \ldots, y_{i+k-1}] \tag{7}$$

$$Y = [Y_1, Y_2, \ldots, Y_n], \quad Y_i = y_{i+k} \tag{8}$$

where $k$ is the time step, which we set to 8, meaning that the last 8 h of wind speed observations are used to predict the next hour's wind speed; $X_i$ indicates sample $i$; $Y_i$ indicates the label corresponding to sample $i$; $y_t$ is the wind speed at time $t$; and $n$ is the number of samples.

For the training set, according to Equation (7), 592 (n = total data size − time step = 600 − 8 = 592) samples were input into the LSTM network, and each sample contained 8 h of wind speed observations. According to Equation (8), the labels corresponding to the samples are the wind speed observations from the 9th hour to the 600th hour. For example, the first sample contains the wind speed observations from the first hour to the eighth hour, and its label is the wind speed observation for the ninth hour. The sample data and corresponding labels were used together to train the model.

The test set was formatted in the same way.
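The sliding-window construction of Equations (7) and (8) can be sketched in NumPy as follows, assuming the normalized wind speed series is a 1-D array in time order; the function name `make_supervised` is hypothetical. The samples are given a trailing feature axis because Keras recurrent layers expect input of shape (samples, time steps, features).

```python
import numpy as np


def make_supervised(series, k=8):
    """X_i = [y_i, ..., y_{i+k-1}] and Y_i = y_{i+k} (Equations (7) and (8)).

    Returns X with shape (N - k, k, 1), i.e. (samples, time steps, features),
    and Y with shape (N - k,).
    """
    series = np.asarray(series, dtype=float)
    n = len(series) - k
    X = np.stack([series[i:i + k] for i in range(n)])
    Y = series[k:]
    return X[..., np.newaxis], Y


hours = np.arange(1, 601)  # stand-in series whose value equals the hour index
X, Y = make_supervised(hours, k=8)
print(X.shape, Y.shape)  # (592, 8, 1) (592,)
```

For a 600-hour series this yields 592 samples; the first sample covers hours 1 to 8 with the hour-9 value as its label, matching the description above.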

Step (3): Model training

We used the formatted training set to train the LSTM network model. By iterating over the training data, the weights of the network were optimized so that the model could learn the time-series characteristics of the wind speed.

Table 3 shows the hyper-parameters of the LSTM network model (we recommend that the reader consult [34] for the specific meaning of each hyper-parameter).

According to Table 3, we divided the samples of the training set into 148 (= 592/4) batches. All batches were propagated forward and backward through the network for 30 epochs to train the model. In each epoch, we used the MSE (Equation (9)) to measure the difference between the output of each forward pass of the network and the true value, and used the Adam optimizer to update the network weights so that the loss function steadily decreased and the prediction accuracy of the network improved. The MSE is calculated as:

$$\mathrm{MSE} = \frac{1}{n}\sum_{i=1}^{n}\left(\hat{y}_i - y_i\right)^2 \tag{9}$$

where $\hat{y}_i$ is the prediction of the wind speed at time $i$, $y_i$ is the observation of the wind speed at time $i$, and $n$ is the number of predictions.
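The training loop described here can be illustrated without Keras by a small NumPy sketch: the MSE of Equation (9), the number of mini-batches per epoch, and the Adam update rule of Kingma and Ba with its commonly used default decay rates. The toy problem (fitting a single weight $w$ in $y = wx$), the learning rate of 0.01 (larger than the Keras default of 0.001, so the toy converges quickly), and all names are assumptions for illustration; in the study itself, Keras performs the equivalent updates on every LSTM weight.

```python
import math

import numpy as np


def mse(y_pred, y_true):
    """Mean squared error, as in Equation (9)."""
    y_pred, y_true = np.asarray(y_pred, float), np.asarray(y_true, float)
    return float(np.mean((y_pred - y_true) ** 2))


def n_batches(n_samples, batch_size):
    """Number of mini-batches per epoch (the last batch may be smaller)."""
    return math.ceil(n_samples / batch_size)


class Adam:
    """Minimal Adam update rule for a NumPy parameter array."""

    def __init__(self, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
        self.lr, self.beta1, self.beta2, self.eps = lr, beta1, beta2, eps
        self.m = self.v = None
        self.t = 0

    def step(self, theta, grad):
        if self.m is None:
            self.m = np.zeros_like(theta)
            self.v = np.zeros_like(theta)
        self.t += 1
        self.m = self.beta1 * self.m + (1 - self.beta1) * grad       # first-moment estimate
        self.v = self.beta2 * self.v + (1 - self.beta2) * grad ** 2  # second-moment estimate
        m_hat = self.m / (1 - self.beta1 ** self.t)                  # bias correction
        v_hat = self.v / (1 - self.beta2 ** self.t)
        return theta - self.lr * m_hat / (np.sqrt(v_hat) + self.eps)


# Toy usage: 592 samples, batches of 4, 30 epochs, weights updated after every batch.
x = np.linspace(0.0, 1.0, 592)
y = 2.0 * x
w = np.array(0.0)
opt = Adam(lr=0.01)
batch_size = 4
for epoch in range(30):
    for b in range(n_batches(len(x), batch_size)):
        xb = x[b * batch_size:(b + 1) * batch_size]
        yb = y[b * batch_size:(b + 1) * batch_size]
        grad = np.mean(2.0 * (w * xb - yb) * xb)  # d(MSE)/dw on this batch
        w = opt.step(w, grad)
print(float(w), mse(w * x, y))
```

Note that with mini-batches the weights are updated once per batch (148 times per epoch here), not once per epoch.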

Step (4): Predicting the wind speed with the trained LSTM network model

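The input of the model was the X of the test set, which was obtained in Step (2). The output $\hat{Y}$ of the model is shown in Equation (10):

$$\hat{Y} = [\hat{Y}_1, \hat{Y}_2, \ldots, \hat{Y}_n], \quad \hat{Y}_i = \hat{y}_{i+k} \tag{10}$$

where $\hat{Y}_i$ (or $\hat{y}_{i+k}$, with $k$ the time step) is the prediction of the wind speed at time $i+k$.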

It should be noted that the prediction $\hat{y}_t$ is on the normalized 0–1 scale, so it must be inversely normalized to obtain the wind speed prediction in the real range. Equation (11) describes how to inversely normalize the model output:

$$y'_t = \hat{y}_t\,(y_{\max} - y_{\min}) + y_{\min} \tag{11}$$

where $y'_t$ is the wind speed prediction (in the real range) at time $t$, $y_{\max}$ is the maximum wind speed observation in the training set, and $y_{\min}$ is the minimum wind speed observation in the training set. We use the maximum and minimum of the training set rather than those of the test set for inverse normalization because, in practice, only past wind speed observations are available at prediction time; the past maximum and minimum are therefore the only available estimates of the range of future wind speeds.
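Equation (11) is the inverse of the min–max scaling. A NumPy sketch, with the training-set extremes passed in explicitly; the function name and the example extremes are hypothetical.

```python
import numpy as np


def inverse_minmax(y_scaled, y_min, y_max):
    """y'_t = y_hat_t * (y_max - y_min) + y_min, with y_min and y_max from the training set."""
    return np.asarray(y_scaled, dtype=float) * (y_max - y_min) + y_min


# Hypothetical training-set extremes of wind speed.
y_min, y_max = 0.0, 10.0
print(inverse_minmax([0.0, 0.25, 1.0, 1.2], y_min, y_max))
```

Because the mapping is linear, a scaled prediction slightly above 1 (possible when test-period winds exceed the training maximum) simply maps to a value above $y_{\max}$.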

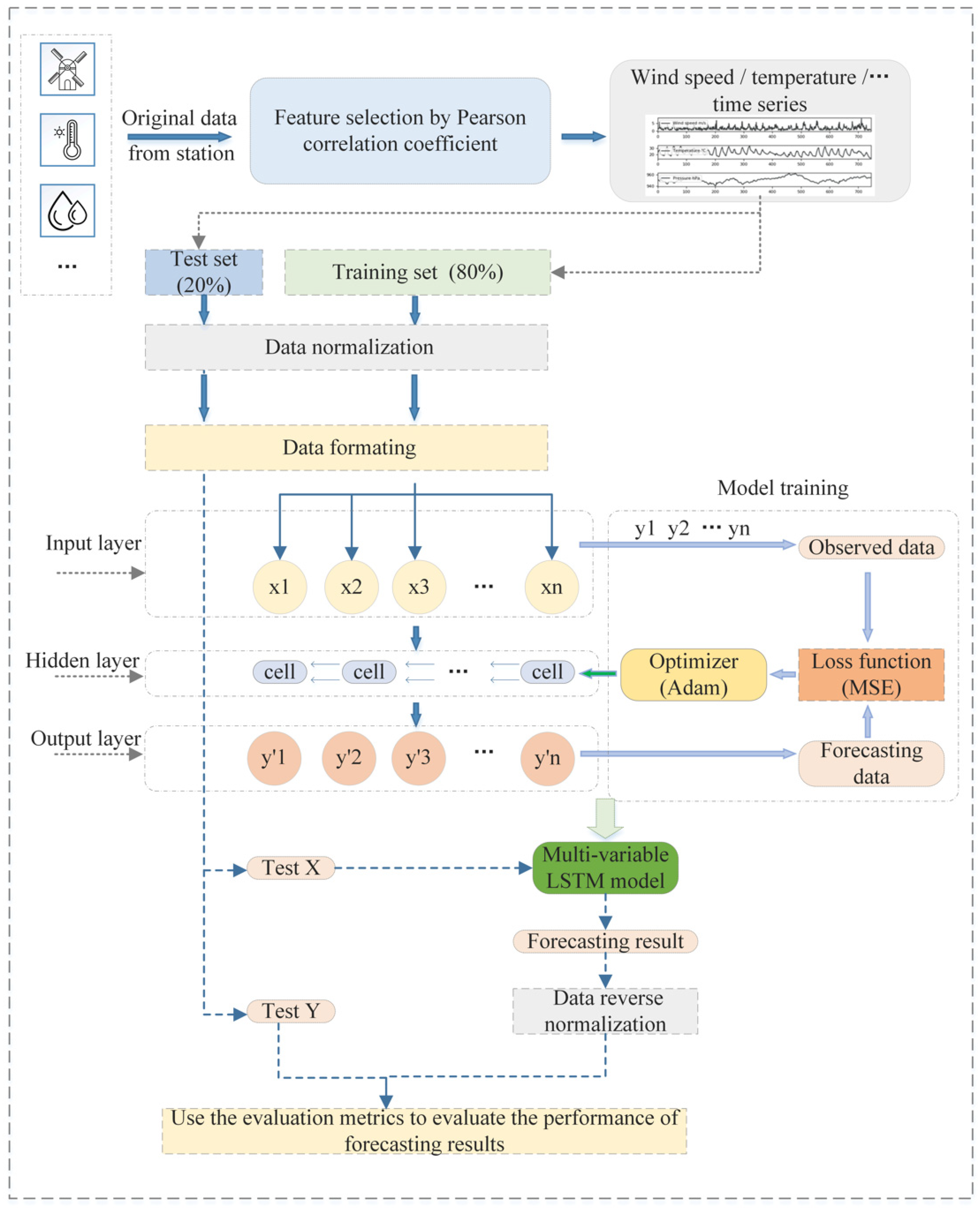

2.3.2. Multi-Variable Long Short-Term Memory (MV-LSTM) Network

In this paper, we propose a multi-variable LSTM network model with feature selection based on the Pearson correlation coefficient for short-term wind speed prediction, which takes the historical data of multiple meteorological elements into account. The framework is shown in Figure 5.

The steps of the MV-LSTM network method are as follows:

Step (1): Feature selection

Different meteorological variables are correlated with one another to some degree [35]. Therefore, we calculated the Pearson correlation coefficient between each meteorological element and the wind speed, identified the meteorological elements (features) that are significantly correlated with the wind speed, and used them together with the wind speed time series as the input of the model. Equation (12) describes how to calculate the Pearson correlation coefficient [36]:

$$r_{XY} = \frac{\operatorname{cov}(X, Y)}{\sigma_X \sigma_Y} \tag{12}$$

where $r_{XY}$ is the Pearson correlation coefficient between X and Y, $\operatorname{cov}(X, Y)$ is the covariance between X and Y, $\sigma_X$ is the standard deviation of X, and $\sigma_Y$ is the standard deviation of Y.
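Equation (12) translates directly into NumPy. In this sketch the $1/n$ factors of the covariance and of both standard deviations cancel, so only centred sums are needed; the function name and the synthetic series are hypothetical, and NumPy's built-in `np.corrcoef` is used as a cross-check.

```python
import numpy as np


def pearson_r(x, y):
    """r_XY = cov(X, Y) / (sigma_X * sigma_Y), as in Equation (12)."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    dx, dy = x - x.mean(), y - y.mean()
    # The 1/n factors in the covariance and in both standard deviations cancel out.
    return float(np.sum(dx * dy) / np.sqrt(np.sum(dx ** 2) * np.sum(dy ** 2)))


# Synthetic series (hypothetical): y depends linearly on x plus noise.
rng = np.random.default_rng(0)
x = rng.normal(size=200)
y = 0.6 * x + rng.normal(size=200)
print(pearson_r(x, y), np.corrcoef(x, y)[0, 1])
```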

The Pearson correlation coefficient ranges from –1 to 1. When the correlation coefficient approaches 1, the two variables are strongly positively correlated; when it approaches –1, they are strongly negatively correlated [37]. The larger the absolute value of the coefficient, the stronger the linear correlation between the variables. However, the absolute value alone is not sufficient, because a nonzero sample coefficient can also arise by chance, and whether it is meaningful depends on the sample size. Therefore, hypothesis testing was used in this study to assess the correlation between each meteorological element and the wind speed:

- (1)

We first state the null hypothesis and the alternative hypothesis, where $\rho$ is the population correlation coefficient:

The null hypothesis $H_0\colon \rho = 0$, which means that the two variables (X and Y) are not linearly correlated;

The alternative hypothesis $H_1\colon \rho \neq 0$, which means that the two variables are linearly correlated.

- (2)

We calculate the p-value, that is, the probability of obtaining a correlation coefficient at least as extreme as the observed one if the null hypothesis is true (i.e., if the two variables are not linearly correlated).

- (3)

We set the significance level $\alpha = 0.05$.

- (4)

We compare the p-value with $\alpha$. If the p-value is less than $\alpha$, the observed correlation would be unlikely under the null hypothesis, so we reject the null hypothesis and accept the alternative hypothesis, which means that the linear correlation between X and Y is statistically significant.
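Steps (1) to (4) can be sketched as follows. The statistic $t = r\sqrt{(n-2)/(1-r^2)}$ is the standard one for testing $\rho = 0$, and its exact p-value comes from Student's t distribution with $n-2$ degrees of freedom (for example, `scipy.stats.pearsonr` reports it). To stay dependency-free, this sketch swaps in the large-sample normal approximation, which is close for several hundred hourly observations but not for small samples. The function names, the dictionary layout of the features, and the synthetic data are hypothetical.

```python
import math

import numpy as np


def pearson_p_value(r, n):
    """Approximate two-sided p-value for H0: rho = 0.

    Uses t = r * sqrt((n - 2) / (1 - r**2)). The exact test refers t to Student's
    t distribution with n - 2 degrees of freedom; here t is treated as standard
    normal, which is a good approximation only for large n.
    """
    if abs(r) >= 1.0:
        return 0.0
    t = r * math.sqrt((n - 2) / (1.0 - r * r))
    return math.erfc(abs(t) / math.sqrt(2.0))  # P(|Z| >= |t|)


def select_features(features, wind_speed, alpha=0.05):
    """Return {name: (r, p)} for the features significantly correlated with wind speed."""
    selected = {}
    for name, values in features.items():
        r = float(np.corrcoef(values, wind_speed)[0, 1])
        p = pearson_p_value(r, len(wind_speed))
        if p < alpha:  # reject H0: the linear correlation is significant
            selected[name] = (r, p)
    return selected


# Toy check with synthetic series (hypothetical feature names).
x = np.linspace(-1.0, 1.0, 201)
wind = x
features = {
    "linear_feature": 0.5 * x + 0.2 * np.sin(40.0 * x),
    "quadratic_feature": x ** 2,
}
print(select_features(features, wind))
```

In the toy check, the quadratic feature has $r \approx 0$ even though it depends deterministically on the target, a reminder that this test only detects linear association.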

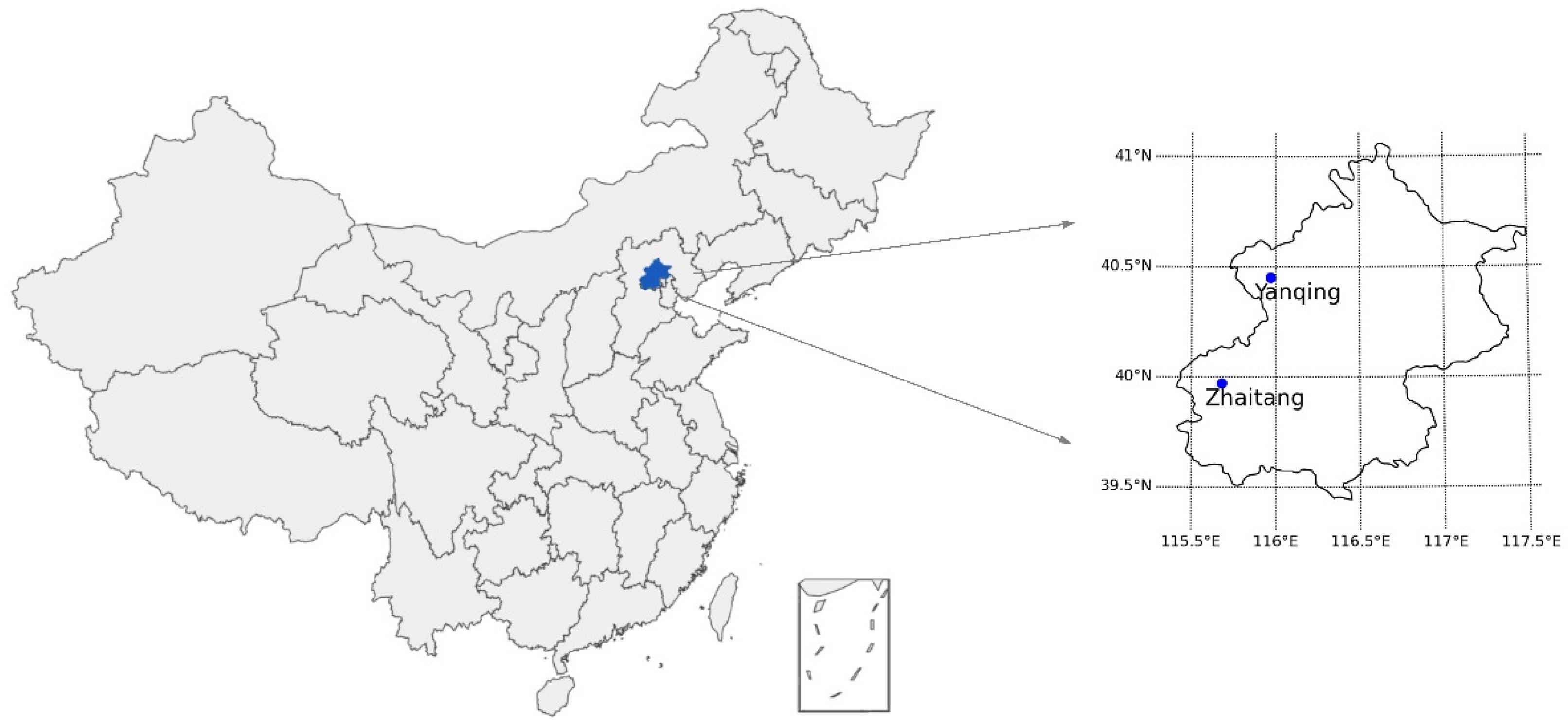

Table 4 lists the correlation between each meteorological element and the wind speed from the datasets for Yanqing Station and Zhaitang Station.

As Table 4 shows, at a significance level of 0.05, the meteorological elements significantly correlated with wind speed differed between the two stations. Therefore, we built separate MV-LSTM models for Yanqing Station and Zhaitang Station. Both models used the same hyper-parameters (see Step (4) for details), and each station's model was trained and tested using observations from that station. At Yanqing Station, the temperature, pressure, humidity, minimum temperature in the past hour, and maximum temperature in the past hour were correlated with wind speed, so these variables and the wind speed data were selected as the inputs of the MV-LSTM model for Yanqing Station. At Zhaitang Station, the temperature, pressure, minimum temperature in the past hour, and maximum temperature in the past hour were correlated with wind speed, so these variables and the wind speed data were selected as the inputs of the MV-LSTM model for Zhaitang Station.

Step (2): Data normalization

As in the single-variable LSTM method, to ensure that the input meteorological variables share the same structure and scale, the selected meteorological elements and the wind speed sequence must be normalized. Step (1) in Section 2.3.1 describes how the wind speed sequence was normalized. For the MV-LSTM model, not only the wind speed but also the meteorological elements selected as features must be normalized. As an example, Equation (13) shows the normalization of temperature:

$$T'_t = \frac{T_t - T_{\min}}{T_{\max} - T_{\min}} \tag{13}$$

where $T'_t$ is the normalized temperature at time $t$, $T_t$ is the temperature observation at time $t$, $T_{\min}$ is the minimum temperature of the training set, and $T_{\max}$ is the maximum temperature of the training set. The training and test sets were split in the same way as in the single-variable LSTM method (the first 80% of the data for each station were used as the training set, and the last 20% as the test set).

Step (3): Data formatting

As in the single-variable LSTM method, the datasets need to be transformed into a format for supervised learning. However, the single-variable LSTM method and the MV-LSTM method differ in the input sample $X_i$. In the single-variable LSTM method, $X_i$ contains 8 h of wind speed observations (from time $i$ to time $i+7$), whereas in MV-LSTM, $X_i$ contains 8 h of observations of several meteorological elements (the wind speed and the other meteorological elements selected as features). Equation (14) describes the data format of $X_i$ in MV-LSTM:

$$X_i = [\mathbf{z}_i, \mathbf{z}_{i+1}, \ldots, \mathbf{z}_{i+7}] \tag{14}$$

where $X_i$ is sample $i$, and $\mathbf{z}_i$ is the vector of observations of the selected meteorological elements at time $i$. For Yanqing Station, $\mathbf{z}_i = [y_i, T_i, P_i, H_i, \mathit{Tn}_i, \mathit{Tx}_i]$, where $y_i$ is the wind speed observation, and $T_i$, $P_i$, $H_i$, $\mathit{Tn}_i$, and $\mathit{Tx}_i$ are the temperature observation, the pressure observation, the humidity observation, the minimum temperature in the last hour at time $i$, and the maximum temperature in the last hour at time $i$, respectively. For Zhaitang Station, $\mathbf{z}_i = [y_i, T_i, P_i, \mathit{Tn}_i, \mathit{Tx}_i]$.
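Equation (14) generalizes the single-variable sliding window to several columns. A NumPy sketch, assuming the normalized observations form a 2-D array with one row per hour and the wind speed in a known column (column 0 here, a hypothetical choice); the label is still the next hour's wind speed, as in Equation (8). The function name and the stand-in data are hypothetical.

```python
import numpy as np


def make_supervised_multivariate(obs, k=8, target_col=0):
    """X_i = [z_i, ..., z_{i+k-1}] (Equation (14)) and Y_i = wind speed at time i + k.

    obs: array of shape (N, n_features), one row per hour, wind speed in target_col.
    Returns X with shape (N - k, k, n_features) and Y with shape (N - k,).
    """
    obs = np.asarray(obs, dtype=float)
    n = obs.shape[0] - k
    X = np.stack([obs[i:i + k] for i in range(n)])
    Y = obs[k:, target_col]
    return X, Y


# Stand-in for 600 normalized hours with six columns (wind speed first).
obs = np.arange(600 * 6, dtype=float).reshape(600, 6)
X, Y = make_supervised_multivariate(obs, k=8, target_col=0)
print(X.shape, Y.shape)  # (592, 8, 6) (592,)
```

For a hypothetical 600-hour training set, six columns (as at Yanqing Station) give X with shape (592, 8, 6), and five columns (as at Zhaitang Station) give (592, 8, 5); this three-dimensional layout is what a Keras LSTM layer takes as input.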

Step (4): Model training

From Step (3), we obtained the corresponding X and Y for the training set and test set of each station. We used the X and Y of each station's training set to train the model for that station.

The hyper-parameters of the MV-LSTM network model are the same as those of the single-variable LSTM network (Table 3).

The samples X of the training set enter the hidden layer, which contains several LSTM cells, through the input layer of the model. The meteorological observations for each time step propagate through the cells, and through the gating mechanism the data from earlier time steps affect the output of the cell at later time steps. For each batch of samples, the model outputs the predicted values through the output layer, the difference between the predicted and observed values is calculated with the loss function, and the optimizer then updates the connection weights of the network in the direction that decreases the loss. One epoch is completed when all batches in X have passed through the network.

After 30 epochs, the trained MV-LSTM network model was obtained.

Step (5): Predicting the wind speed with the trained MV-LSTM network model

The X of the test set (obtained in Step (3)) was used as the input of the model, and the trained model output the predicted values $\hat{Y}$ (see Equation (10) in Section 2.3.1 for details). As in the single-variable LSTM method, the predicted values $\hat{Y}$ must be inversely normalized to obtain the predicted wind speed values Y’ in the real range (see Equation (11) in Section 2.3.1 for details on inverse normalization).

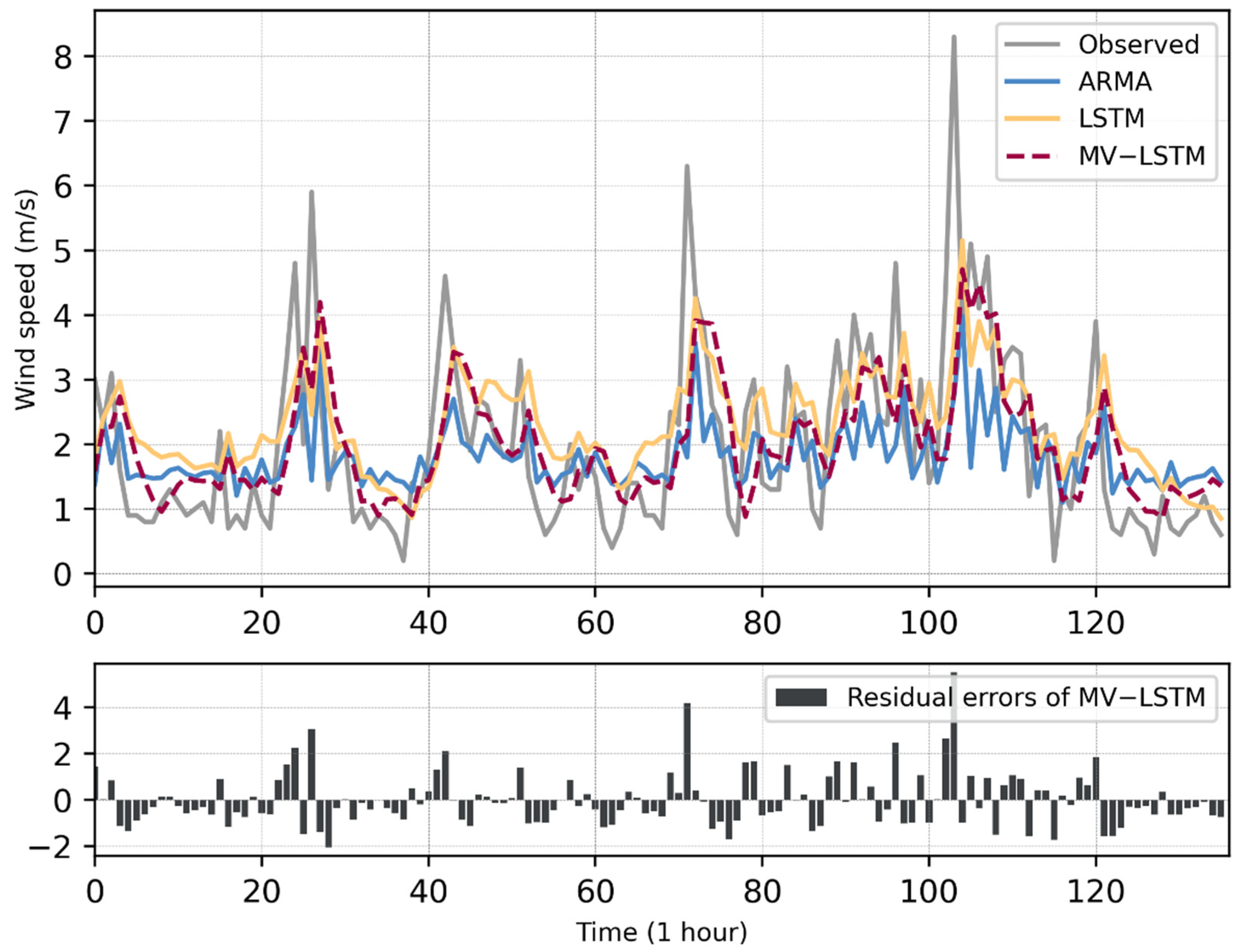

Finally, we evaluated the difference between the predicted values Y’ and the observed values Y of the test set to assess the performance of the model (see Section 2.4 for the evaluation metrics).