High Resolution Viewscape Modeling Evaluated Through Immersive Virtual Environments

Abstract

1. Introduction

2. Methods

2.1. Study area

2.2. Viewscape Modeling

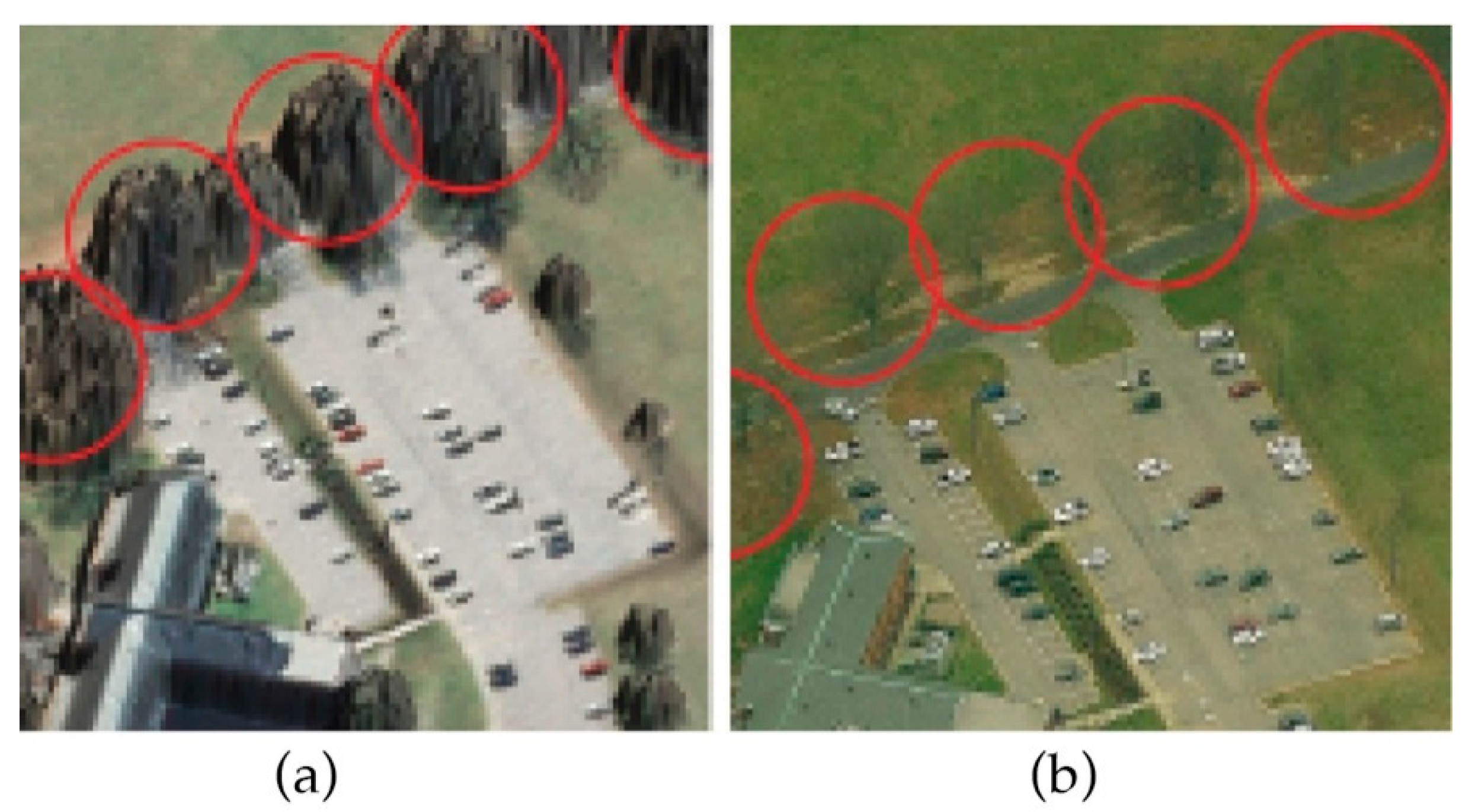

2.2.1. Digital Surface Model (DSM) and Landcover

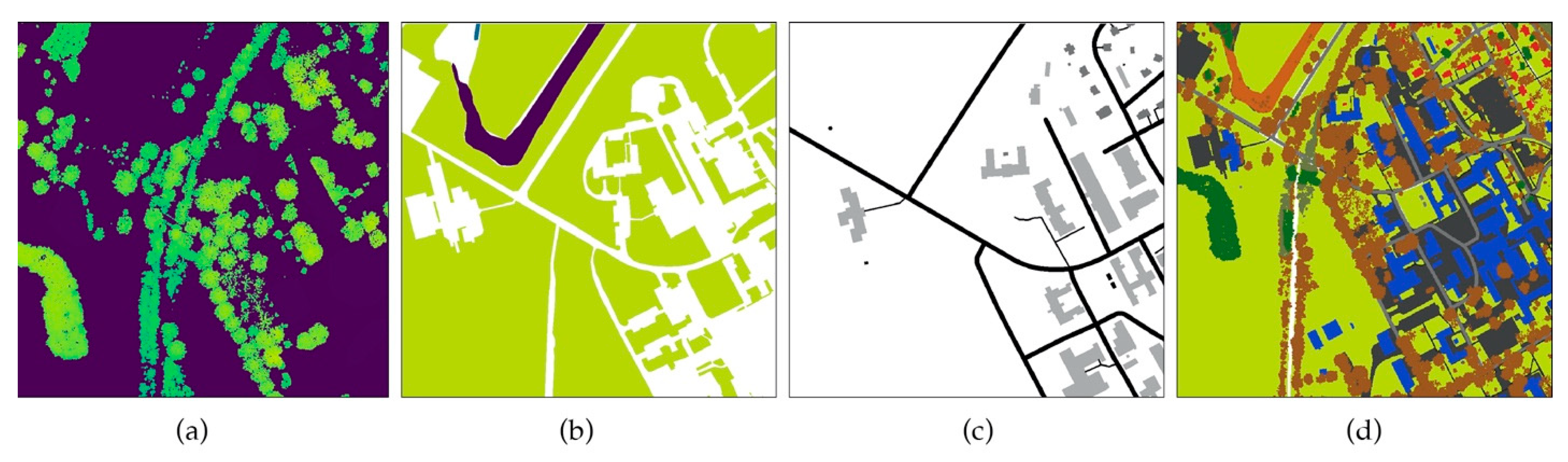

- The canopy height model (CHM) was obtained by filtering and interpolating lidar vegetation points and subtracting the ground elevation from their elevation (Figure 3a). We applied a supervised classification method [43] to strata of infrared imagery (NAIP), lidar vegetation maximum values, and orthoimagery to classify the CHM into mixed forest, evergreen, and deciduous landcovers (Figure 3b).

- The ground cover layer consists of grassland, herbaceous, and unpaved surfaces, which were manually digitized from the 30 cm resolution orthoimagery.

- Buildings and paved surfaces (e.g., streets, parking surfaces) were rasterized from vector line and polygon data (Figure 3c; data retrieved from City of Raleigh GIS datasets; Raleigh, NC, US Open Data server, https://data-ral.opendata.arcgis.com/).
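The canopy-height step in the first item can be sketched as follows. This is a minimal illustration, assuming the interpolated vegetation surface and the bare-earth ground elevation are already available as co-registered NumPy arrays of the same shape. The function name `canopy_height_model`, the sample values, and the clamping of negative heights to zero are assumptions made for the example and are not taken from the study's workflow.

```python
import numpy as np


def canopy_height_model(vegetation_surface, ground_elevation):
    """Return canopy height: vegetation surface elevation minus ground elevation.

    Both inputs are 2D rasters (same shape, same units, same grid).
    """
    vegetation_surface = np.asarray(vegetation_surface, dtype=float)
    ground_elevation = np.asarray(ground_elevation, dtype=float)
    if vegetation_surface.shape != ground_elevation.shape:
        raise ValueError("rasters must share the same shape")

    chm = vegetation_surface - ground_elevation
    # Assumption: small negative heights are interpolation noise, so clamp to 0.
    return np.clip(chm, 0.0, None)


# Hypothetical 2 x 2 rasters (elevations in metres).
vegetation = [[105.0, 112.5], [100.2, 99.8]]
ground = [[100.0, 100.5], [100.0, 100.0]]
chm = canopy_height_model(vegetation, ground)
print(chm)
```

In a real pipeline the two rasters would come from the lidar interpolation step, and the resulting heights would then feed the landcover classification.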

2.2.2. Trunk Obstruction Modeling

2.2.3. Computing Viewscape Metrics

2.3. Immersive Virtual Environment (IVE) Survey of Perceived Visual Characteristics

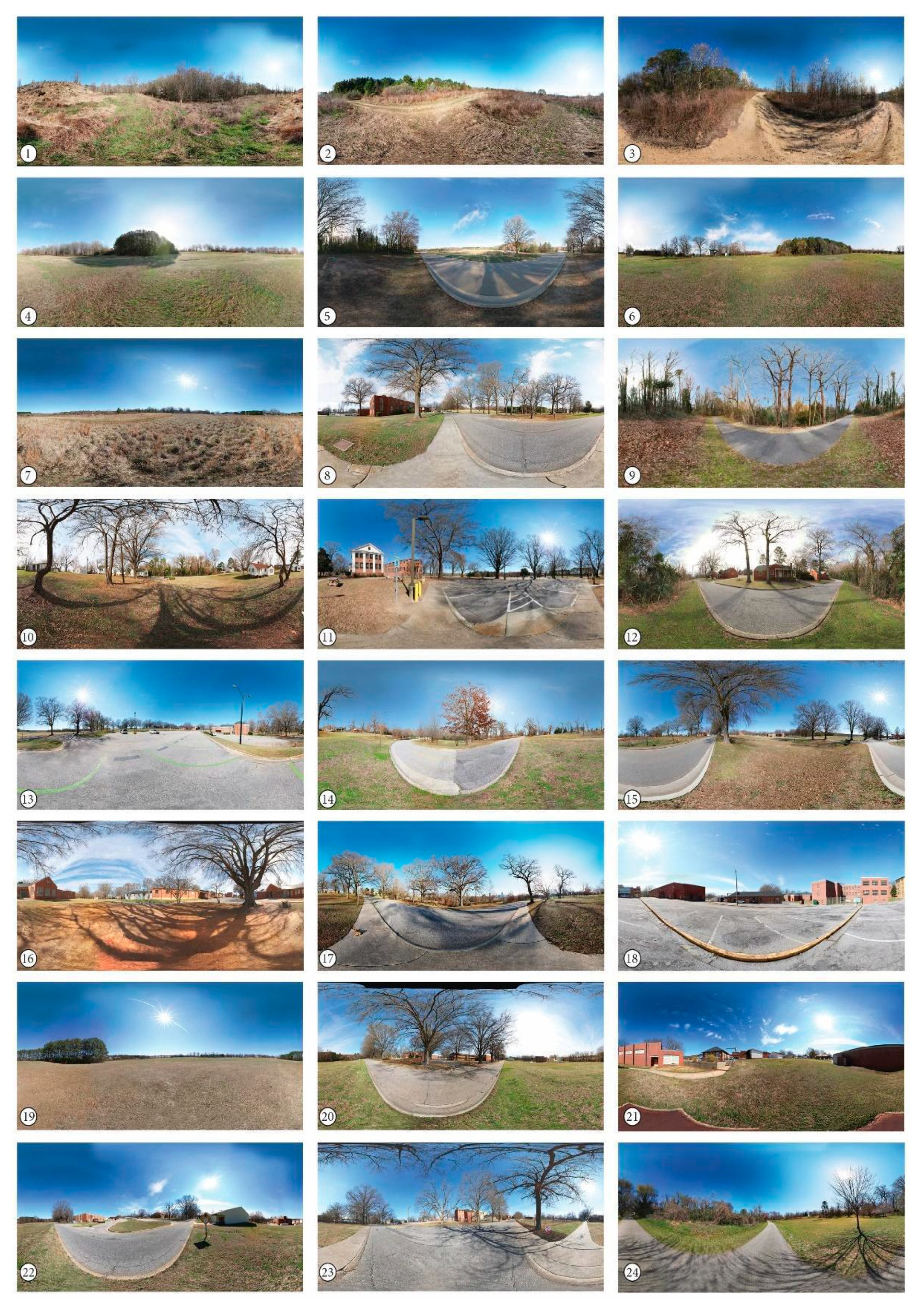

2.3.1. IVE Stimuli

2.3.2. Survey Procedure

2.4. Viewscape Model Assessment

3. Results

3.1. Viewscape Modeling

3.2. Immersive Virtual Environment Survey

4. Discussion

4.1. Predicting Perceived Visual Characteristics

4.2. Methodological Considerations for Modeling Viewscapes

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Conflicts of Interest

References

- Ode, Å.; Miller, D. Analysing the relationship between indicators of landscape complexity and preference. Environ. Plan. B Plan. Des. 2011, 38, 24–38.

- Tveit, M.; Ode, Å.; Fry, G. Key concepts in a framework for analyzing visual landscape character. Landsc. Res. 2006, 31, 229–255.

- Barton, J.; Hine, R.; Pretty, J. The health benefits of walking in greenspaces of high natural and heritage value. J. Integr. Environ. Sci. 2009, 6, 261–278.

- Hipp, J.A.; Gulwadi, G.B.; Alves, S.; Sequeira, S. The Relationship Between Perceived Greenness and Perceived Restorativeness of University Campuses and Student-Reported Quality of Life. Environ. Behav. 2015, 48, 1292–1308.

- Jansson, M.; Fors, H.; Lindgren, T.; Wiström, B. Perceived personal safety in relation to urban woodland vegetation—A review. Urban For. Urban Green. 2013, 12, 127–133.

- Roe, J.J.; Aspinall, P.A.; Mavros, P.; Coyne, R. Engaging the Brain: The Impact of Natural versus Urban Scenes Using Novel EEG Methods in an Experimental Setting. Environ. Sci. 2013, 1, 93–104.

- Palmer, J.F.; Hoffman, R.E. Rating reliability and representation validity in scenic landscape assessments. Landsc. Urban Plan. 2001, 54, 149–161.

- Ode, Å.; Hagerhall, C.M.; Sang, N. Analyzing Visual Landscape Complexity: Theory and Application. Landsc. Res. 2010, 35, 111–131.

- Sang, N.; Miller, D.; Ode, Å. Landscape metrics and visual topology in the analysis of landscape preference. Environ. Plan. B Plan. Des. 2008, 35, 504–520.

- Brabyn, L.; Mark, D.M. Using viewsheds, GIS, and a landscape classification to tag landscape photographs. Appl. Geogr. 2011, 31, 1115–1122.

- Wilson, J.; Lindsey, G.; Liu, G. Viewshed characteristics of urban pedestrian trails, Indianapolis, Indiana, USA. J. Maps 2008, 4, 108–118.

- Zanon, J.D. Utilizing Viewshed Analysis to Identify Viewable Landcover Classes and Prominent Features within Big Bend National Park. Pap. Resour. Anal. 2015, 17, 1–11.

- Sang, N. Wild Vistas: Progress in Computational Approaches to ‘Viewshed’ Analysis. In Mapping Wilderness; Carver, S.J., Fritz, S., Eds.; Springer Science+Business Media: Dordrecht, The Netherlands, 2016; pp. 69–87.

- Schirpke, U.; Tasser, E.; Tappeiner, U. Predicting scenic beauty of mountain regions. Landsc. Urban Plan. 2013, 111, 1–12.

- Bell, J.; Wilson, J.; Liu, G. Neighborhood Greenness and 2-year Changes in Body Mass Index of Children and Youth. Am. J. Prev. Med. 2008, 35, 547–553.

- Vukomanovic, J.; Singh, K.K.; Petrasova, A.; Vogler, J.B. Not seeing the forest for the trees: Modeling exurban viewscapes with LiDAR. Landsc. Urban Plan. 2018, 170, 169–176.

- Schirpke, U.; Timmermann, F.; Tappeiner, U.; Tasser, E. Cultural ecosystem services of mountain regions: Modelling the aesthetic value. Ecol. Indic. 2016, 69, 78–90.

- Sahraoui, Y.; Clauzel, C.; Foltête, J.-C. Spatial modelling of landscape aesthetic potential in urban-rural fringes. J. Environ. Manag. 2016, 181, 623–636.

- Vukomanovic, J.; Orr, B.J. Landscape Aesthetics and the Scenic Drivers of Amenity Migration in the New West: Naturalness, Visual Scale, and Complexity. Land 2014, 3, 390–413.

- Yu, S.; Yu, B.; Song, W.; Wu, B.; Zhou, J.; Huang, Y.; Wu, J.; Zhao, F.; Mao, W. View-based greenery: A three-dimensional assessment of city buildings’ green visibility using Floor Green View Index. Landsc. Urban Plan. 2016, 152, 13–26.

- Ulrich, R.S. Human responses to vegetation and landscapes. Landsc. Urban Plan. 1986, 13, 29–44.

- Nasar, J.L.; Julian, D.; Buchman, S.; Humphreys, D.; Mrohaly, M. The emotional quality of scenes and observation points: A look at prospect and refuge. Landsc. Plan. 1983, 10, 355–361.

- Vukomanovic, J.; Vogler, J.; Petrasova, A. Modeling the connection between viewscapes and home locations in a rapidly exurbanizing region. Comput. Environ. Urban Syst. 2019, 78, 101388.

- Barry, S.; Elith, J. Error and uncertainty in habitat models. J. Appl. Ecol. 2006, 43, 413–423.

- Moudrý, V.; Šímová, P. Influence of positional accuracy, sample size and scale on modelling species distributions: A review. Int. J. Geogr. Inf. Sci. 2012, 26, 2083–2095.

- Klouček, T.; Lagner, O.; Šímová, P. How does data accuracy influence the reliability of digital viewshed models? A case study with wind turbines. Appl. Geogr. 2015, 64, 46–54.

- Bartie, P.; Reitsma, F.; Kingham, S.; Mills, S. Incorporating vegetation into visual exposure modelling in urban environments. Int. J. Geogr. Inf. Sci. 2011, 25, 851–868.

- Murgoitio, J.J.; Shrestha, R.; Glenn, N.F.; Spaete, L.P. Improved visibility calculations with tree trunk obstruction modeling from aerial LiDAR. Int. J. Geogr. Inf. Sci. 2017, 27, 1865–1883.

- Llobera, M. Modeling visibility through vegetation. Int. J. Geogr. Inf. Sci. 2007, 21, 799–810.

- Kronqvist, A.; Jokinen, J.; Rousi, R. Evaluating the authenticity of virtual environments: Comparison of three devices. Adv. Hum. Comput. Interact. 2016, 2016, 2937632.

- Slater, M.; Lotto, B.; Arnold, M.M.; Sanchez-Vives, M.V. How we experience immersive virtual environments: The concept of presence and its measurement. Anu. Psicol. 2009, 40, 193–210.

- Kim, K.; Rosenthal, M.Z.; Zielinski, D.; Brady, R. Comparison of desktop, head mounted display, and six wall fully immersive systems using a stressful task. In Proceedings of the 2012 IEEE Virtual Reality Workshops (VRW), Costa Mesa, CA, USA, 4–8 March 2012; pp. 143–144.

- Çöltekin, A.; Lokka, I.-E.; Zahner, M. On the usability and usefulness of 3D (geo)visualizations—A focus on virtual reality environments. In Proceedings of the XXIII ISPRS Congress, Commission II, Prague, Czech Republic, 12–19 July 2016.

- Tabrizian, P.; Harmon, B.; Petrasova, A.; Petras, V.; Mitasova, H.; Meentemeyer, R. Tangible Immersion for Ecological Design. In Proceedings of the 37th Annual Conference of the Association for Computer Aided Design in Architecture (ACADIA), Cambridge, MA, USA, 2–4 November 2017; pp. 600–609.

- Lee, D.J.; Dias, E.; Scholten, H.J. (Eds.) Geodesign by Integrating Design and Geospatial Sciences; Springer: New York, NY, USA, 2015; Volume 1.

- Tabrizian, P.; Baran, P.K.; Smith, W.R.; Meentemeyer, R.K. Exploring perceived restoration potential of urban green enclosure through immersive virtual environments. J. Environ. Psychol. 2018, 55, 99–109.

- Carrus, G.; Lafortezza, R.; Colangelo, G.; Dentamaro, I.; Scopelliti, M.; Sanesi, G. Relations between naturalness and perceived restorativeness of different urban green spaces. Psyecology 2013, 4, 227–244.

- Hartig, T.; Evans, G.W.; Jamner, L.D.; Davis, D.S.; Gärling, T. Tracking restoration in natural and urban field settings. J. Environ. Psychol. 2003, 23, 109–123.

- Kuper, R. Evaluations of landscape preference, complexity, and coherence for designed digital landscape models. Landsc. Urban Plan. 2017, 157, 407–421.

- Ode, Å.; Fry, G.; Tveit, M.S.; Messager, P.; Miller, D. Indicators of perceived naturalness as drivers of landscape preference. J. Environ. Manag. 2009, 90, 375–383.

- Herzog, T.R.; Kropscott, L.S. Legibility, Mystery, and Visual Access as Predictors of Preference and Perceived Danger in Forest Settings without Pathways. Environ. Behav. 2004, 36, 659–677.

- Mitášová, H.; Hofierka, J. Interpolation by regularized spline with tension: II. Application to terrain modeling and surface geometry analysis. Math. Geol. 1993, 25, 657–669.

- Phiri, D.; Morgenroth, J. Developments in Landsat land cover classification methods: A review. Remote Sens. 2017, 9, 967.

- Jasiewicz, J.; Stepinski, T.F. Geomorphons—A pattern recognition approach to classification and mapping of landforms. Geomorphology 2013, 182, 147–156.

- Antonello, A.; Franceschi, S.; Floreancig, V.; Comiti, F.; Tonon, G. Application of a pattern recognition algorithm for single tree detection from LiDAR data. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2017, XLII, 18–22.

- Haverkort, H.; Toma, L.; Zhuang, Y. Computing visibility on terrains in external memory. J. Exp. Algorithmics 2009, 13, 1–5.

- Uuemaa, E.; Antrop, M.; Marja, R.; Roosaare, J.; Mander, Ü. Landscape Metrics and Indices: An Overview of Their Use in Landscape Research. Living Rev. Landsc. Res. 2009, 3, 1–28.

- Dramstad, W.E.; Olson, J.D.; Forman, R.T.T. Landscape Ecology Principles in Landscape Architecture and Land-Use Planning; Island Press: Washington, DC, USA, 1996.

- Dimitrijevic, A. Comparison of Spherical Cube Map Projections Used in Planet-Sized Terrain Rendering. Facta Univ. Ser. Math. Inform. 2016, 31, 259–297.

- Dramstad, W.E.; Tveit, M.S.; Fjellstad, W.J.; Fry, G.L.A. Relationships between visual landscape preferences and map-based indicators of landscape structure. Landsc. Urban Plan. 2006, 78, 465–474.

- Marselle, M.R.; Irvine, K.N.; Lorenzo-Arribas, A.; Warber, S.L. Does perceived restorativeness mediate the effects of perceived biodiversity and perceived naturalness on emotional well-being following group walks in nature? J. Environ. Psychol. 2016, 46, 217–232.

- Kuper, R. Preference, complexity, and color information entropy values for visual depictions of plant and vegetative growth. HortTechnology 2015, 25, 625–634.

- Lindal, P.J.; Hartig, T. Architectural variation, building height, and the restorative quality of urban residential streetscapes. J. Environ. Psychol. 2013, 33, 26–36.

- Bordens, S.; Abbot, B. Research Design and Methods: A Process Approach, 10th ed.; McGraw-Hill Education: New York, NY, USA, 2018.

- Hair, J.F.; Black, W.C.; Babin, B.J.; Anderson, R.E. Multivariate Data Analysis, 7th ed.; Pearson: Upper Saddle River, NJ, USA, 2009.

- Stamps, A.E. Effects of Permeability on Perceived Enclosure and Spaciousness. Environ. Behav. 2010, 42, 864–886.

- Fry, G.; Tveit, M.S.; Ode, Å.; Velarde, M.D. The ecology of visual landscapes: Exploring the conceptual common ground of visual and ecological landscape indicators. Ecol. Indic. 2009, 9, 933–947.

- Bell, S. Landscape pattern, perception and visualisation in the visual management of forests. Landsc. Urban Plan. 2001, 54, 201–211.

- Kaplan, R.; Kaplan, S. The Experience of Nature. A Psychological Perspective; Cambridge University Press: Cambridge, UK, 1989.

- Stamps, A.E. Advances in visual diversity and entropy. Environ. Plan. B Plan. Des. 2003, 30, 449–463.

- Rosenholtz, R.; Li, Y.; Nakano, L. Measuring visual clutter. J. Vis. 2007, 7, 17.

- Fairbairn, D. Measuring Map Complexity. Cartogr. J. 2006, 43, 224–238.

- Stigmar, H.; Harrie, L. Evaluation of Analytical Measures of Map Legibility. Cartogr. J. 2011, 48, 41–53.

- Palumbo, L.; Makin, A.D.J.; Bertamini, M. Examining visual complexity and its influence on perceived duration. J. Vis. 2014, 14, 3.

- Hagerhall, C.M.; Laike, T.; Taylor, R.P.; Küller, M.; Küller, R.; Martin, T.P. Investigations of human EEG response to viewing fractal patterns. Perception 2008, 37, 1488–1494.

- Taylor, R.P.; Spehar, B.; Wise, J.A.; Clifford, C.W.; Newell, B.R.; Hagerhall, C.M.; Purcell, T.; Martin, T.P. Perceptual and physiological responses to the visual complexity of fractal patterns. Nonlinear Dyn. Psychol. Life Sci. 2005, 9, 89–114.

- Schnur, S.; Bektaş, K.; Çöltekin, A. Measured and perceived visual complexity: A comparative study among three online map providers. Cartogr. Geogr. Inf. Sci. 2018, 45, 238–254.

- Chmielewski, S.; Tompalski, P. Estimating outdoor advertising media visibility with voxel-based approach. Appl. Geogr. 2017, 87, 1–13.

- Appleton, K.; Lovett, A. GIS-based visualisation of rural landscapes: Defining ‘sufficient’ realism for environmental decision-making. Landsc. Urban Plan. 2003, 65, 117–131.

- Palmer, J.F. Using spatial metrics to predict scenic perception in a changing landscape: Dennis, Massachusetts. Landsc. Urban Plan. 2004, 69, 201–218.

- Collado, S.; Staats, H.; Sorrel, M.A. A relational model of perceived restorativeness: Intertwined effects of obligations, familiarity, security and parental supervision. J. Environ. Psychol. 2016, 48, 24–32.

- Keane, T. The Role of Familiarity in Prairie Landscape Aesthetics. In Proceedings of the 12th North American Prairie Conference, Cedar Falls, IA, USA, 5–9 August 1990; pp. 205–208.

- Tang, I.-C.; Sullivan, W.C.; Chang, C.-Y. Perceptual Evaluation of Natural Landscapes: The Role of the Individual Connection to Nature. Environ. Behav. 2015, 47, 595–617.

- Mayer, F.S.; Frantz, C.M. The connectedness to nature scale: A measure of individuals’ feeling in community with nature. J. Environ. Psychol. 2004, 24, 503–515.

Composition metrics (landcover percentages) and configuration metrics for each viewscape.

| Viewscape | Deciduous (%) | Mixed (%) | Evergreen (%) | Herbaceous (%) | Grass (%) | Building (%) | Paved (%) | Extent (m²) | Depth (m) | Relief (m) | Skyline (m) | Horizontal (m²) | VdepthVar | Nump | SI | ED | PS | PD | SDI |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 4.40 | 28.60 | 0.40 | 66.10 | 0.00 | 0.40 | 0.00 | 6921 | 99 | 4.29 | 8.04 | 6904 | 4.13 | 3 | 18.31 | 6955 | 0.09 | 58,756 | 0.82 |
| 2 | 13.40 | 42.00 | 2.80 | 31.00 | 30.00 | 2.60 | 0.30 | 21,214 | 2130 | 6.78 | 1.86 | 2089 | 36.99 | 2 | 73.48 | 14,373 | 0.01 | 127,130 | 1.56 |
| 3 | 4.40 | 28.60 | 0.40 | 4.30 | 37.90 | 0.30 | 1.60 | 3140 | 506 | 11.10 | 4.65 | 1273 | 24.73 | 1 | 39.53 | 11,462 | 0.07 | 91,617 | 0.95 |
| 4 | 27.40 | 3.90 | 4.00 | 0.00 | 56.50 | 4.90 | 3.30 | 42,582 | 325 | 3.14 | 8.28 | 40,666 | 10.10 | 20 | 26.09 | 4186 | 0.04 | 33,455 | 1.22 |
| 5 | 7.30 | 8.10 | 7.10 | 6.30 | 56.50 | 3.30 | 2.80 | 98,618 | 812 | 4.44 | 10.55 | 79,819 | 18.28 | 82 | 47.52 | 4964 | 0.04 | 30,744 | 1.75 |
| 6 | 8.50 | 14.00 | 3.70 | 0.00 | 63.20 | 5.20 | 1.90 | 91,891 | 1972 | 5.91 | 9.38 | 61,032 | 16.85 | 21 | 49.44 | 5294 | 0.04 | 31,170 | 1.45 |
| 7 | 12.80 | 12.10 | 2.30 | 0.00 | 60.00 | 4.20 | 7.70 | 97,852 | 2132 | 5.97 | 11.20 | 68,717 | 18.67 | 27 | 48.02 | 5075 | 0.04 | 30,504 | 1.41 |
| 8 | 5.30 | 18.50 | 5.40 | 5.30 | 59.50 | 1.90 | 0.90 | 103,798 | 1789 | 3.40 | 9.58 | 55,347 | 14.99 | 73 | 54.37 | 5463 | 0.05 | 33,642 | 1.53 |
| 9 | 19.80 | 5.10 | 3.40 | 0.00 | 54.40 | 6.70 | 10.50 | 91,018 | 1074 | 5.37 | 11.37 | 60,146 | 29.40 | 73 | 53.24 | 5917 | 0.04 | 34,626 | 1.37 |
| 10 | 0.00 | 60.80 | 0.00 | 0.00 | 39.20 | 0.00 | 0.00 | 80 | 150 | 0.26 | 6.09 | 79 | 2.24 | 2 | 5.60 | 14,168 | 0.05 | 102,955 | 0.67 |
| 11 | 24.90 | 14.10 | 4.80 | 0.00 | 35.90 | 10.00 | 10.30 | 5593 | 366 | 2.37 | 10.08 | 5457 | 6.16 | 22 | 20.21 | 7676 | 0.09 | 56,625 | 1.68 |
| 12 | 10.80 | 16.20 | 7.70 | 0.00 | 43.80 | 10.70 | 8.60 | 36,353 | 982 | 3.17 | 9.28 | 22,770 | 19.82 | 18 | 49.59 | 7839 | 0.08 | 50,237 | 1.76 |
| 13 | 32.50 | 0.40 | 4.80 | 0.00 | 28.20 | 23.00 | 11.00 | 1712 | 210 | 2.22 | 10.20 | 1564 | 6.10 | 8 | 14.14 | 10,021 | 0.13 | 87,406 | 1.62 |
| 14 | 13.30 | 3.50 | 7.10 | 0.90 | 62.50 | 4.50 | 8.10 | 106,496 | 891 | 5.36 | 11.33 | 96,335 | 17.88 | 36 | 39.73 | 4081 | 0.03 | 21,146 | 1.27 |
| 15 | 25.60 | 25.20 | 2.80 | 0.00 | 34.40 | 5.20 | 6.80 | 19,282 | 461 | 5.36 | 10.71 | 16,813 | 11.50 | 19 | 42.10 | 8806 | 0.11 | 69,296 | 1.53 |
| 16 | 13.90 | 4.10 | 7.40 | 2.40 | 59.80 | 4.50 | 22.00 | 104,086 | 843 | 5.89 | 10.37 | 81,323 | 25.46 | 50 | 48.48 | 5098 | 0.04 | 30,592 | 1.37 |
| 17 | 22.30 | 5.80 | 0.20 | 0.00 | 16.70 | 22.70 | 32.40 | 8605 | 311 | 1.36 | 6.34 | 6409 | 7.37 | 17 | 18.63 | 5752 | 0.05 | 35,191 | 1.51 |
| 18 | 32.70 | 5.70 | 3.20 | 0.00 | 52.70 | 2.00 | 3.80 | 28,459 | 305 | 5.31 | 12.59 | 25,200 | 16.61 | 32 | 32.72 | 6298 | 0.05 | 42,796 | 1.20 |
| 19 | 26.30 | 22.20 | 1.30 | 0.00 | 35.00 | 3.90 | 11.20 | 50,713 | 1293 | 4.76 | 12.04 | 34,196 | 26.99 | 42 | 63.49 | 8672 | 0.08 | 59,631 | 1.52 |
| 20 | 7.40 | 2.90 | 10.30 | 0.00 | 12.60 | 18.10 | 48.80 | 10,702 | 300 | 2.11 | 6.22 | 10,369 | 5.29 | 13 | 19.73 | 5642 | 0.06 | 40,294 | 1.45 |
| 21 | 10.40 | 3.50 | 5.00 | 0.70 | 69.90 | 3.80 | 6.40 | 185,289 | 538 | 7.12 | 11.20 | 167,052 | 17.30 | 44 | 39.15 | 3109 | 0.02 | 17,501 | 1.11 |
| 22 | 24.20 | 5.30 | 8.70 | 0.00 | 46.00 | 5.80 | 10.00 | 42,773 | 325 | 2.93 | 7.90 | 38,522 | 12.37 | 31 | 33.13 | 5093 | 0.04 | 32,375 | 1.50 |
| 23 | 9.30 | 0.40 | 0.20 | 0.00 | 25.90 | 23.00 | 41.20 | 6250 | 137 | 1.10 | 7.26 | 6059 | 4.96 | 12 | 15.35 | 5425 | 0.06 | 43,198 | 1.31 |
| 24 | 23.00 | 3.00 | 7.20 | 0.00 | 28.90 | 15.30 | 22.70 | 23,569 | 414 | 1.55 | 7.02 | 21,166 | 17.10 | 23 | 37.48 | 7370 | 0.08 | 55,496 | 1.61 |
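As a worked example for the SDI column, the sketch below computes a Shannon diversity index from the landcover percentages of one viewscape. It assumes that SDI is the Shannon index taken over the seven landcover shares with the natural logarithm; the table does not state the formula, so this is an interpretation, although it reproduces the tabulated value for viewscape 10. The function name `shannon_diversity` is illustrative.

```python
import math


def shannon_diversity(percentages):
    """Shannon diversity H = -sum(p_i * ln(p_i)) over non-zero landcover shares."""
    total = sum(percentages)
    if total <= 0:
        raise ValueError("at least one landcover share must be positive")
    # Normalise so that rounded percentages still sum to 1; skip empty classes.
    proportions = [p / total for p in percentages if p > 0]
    return -sum(p * math.log(p) for p in proportions)


# Viewscape 10 from the table: Deciduous, Mixed, Evergreen, Herbaceous,
# Grass, Building, Paved (all in percent).
viewscape_10 = [0.00, 60.80, 0.00, 0.00, 39.20, 0.00, 0.00]
sdi_10 = shannon_diversity(viewscape_10)
print(round(sdi_10, 2))  # 0.67, matching the SDI column for viewscape 10
```

A viewscape dominated by one landcover class yields a value near 0, while evenly mixed classes push the index toward ln(7) ≈ 1.95 for seven classes.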

| IVE Scene | Visual Access Mean | Visual Access SD | Visual Access min | Visual Access max | Naturalness Mean | Naturalness SD | Naturalness min | Naturalness max | Complexity Mean | Complexity SD | Complexity min | Complexity max |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 2.21 | 1.51 | 1.00 | 9.00 | 10.31 | 1.03 | 6.00 | 11.00 | 2.29 | 1.22 | 1.00 | 4.00 |
| 2 | 4.76 | 0.89 | 4.00 | 7.00 | 9.48 | 1.52 | 3.00 | 11.00 | 3.82 | 1.38 | 2.00 | 6.00 |
| 3 | 3.53 | 1.66 | 2.00 | 9.00 | 9.38 | 1.64 | 3.00 | 11.00 | 4.71 | 1.95 | 2.00 | 11.00 |
| 4 | 7.88 | 2.33 | 4.00 | 11.00 | 8.06 | 2.22 | 3.00 | 11.00 | 4.35 | 1.91 | 1.00 | 10.00 |
| 5 | 8.65 | 1.79 | 3.00 | 10.00 | 7.24 | 2.12 | 3.00 | 11.00 | 8.03 | 1.22 | 6.00 | 10.00 |
| 6 | 8.59 | 1.91 | 4.00 | 11.00 | 7.72 | 1.17 | 4.00 | 9.00 | 7.18 | 2.68 | 1.00 | 11.00 |
| 7 | 8.94 | 2.04 | 3.00 | 11.00 | 7.78 | 1.95 | 4.00 | 11.00 | 6.74 | 2.31 | 2.00 | 10.00 |
| 8 | 9.12 | 2.24 | 3.00 | 11.00 | 9.22 | 1.07 | 6.00 | 11.00 | 6.09 | 2.39 | 1.00 | 10.00 |
| 9 | 8.88 | 1.04 | 5.00 | 10.00 | 7.09 | 1.99 | 4.00 | 11.00 | 9.09 | 2.68 | 1.00 | 11.00 |
| 10 | 1.85 | 1.13 | 1.00 | 7.00 | 9.50 | 1.24 | 6.00 | 11.00 | 2.41 | 2.27 | 0.00 | 10.00 |
| 11 | 3.53 | 1.56 | 2.00 | 8.00 | 6.91 | 2.10 | 3.00 | 11.00 | 8.71 | 1.75 | 2.00 | 11.00 |
| 12 | 7.32 | 1.97 | 4.00 | 11.00 | 6.36 | 2.04 | 3.00 | 11.00 | 8.56 | 1.60 | 6.00 | 11.00 |
| 13 | 2.38 | 1.61 | 1.00 | 9.00 | 4.03 | 0.93 | 3.00 | 6.00 | 7.85 | 1.13 | 6.00 | 10.00 |
| 14 | 9.53 | 1.78 | 2.00 | 11.00 | 6.06 | 2.76 | 1.00 | 11.00 | 6.03 | 2.28 | 1.00 | 10.00 |
| 15 | 8.18 | 1.64 | 4.00 | 11.00 | 7.91 | 2.05 | 4.00 | 11.00 | 7.15 | 1.28 | 5.00 | 9.00 |
| 16 | 8.35 | 1.81 | 5.00 | 11.00 | 7.24 | 1.97 | 3.00 | 11.00 | 7.68 | 1.34 | 6.00 | 10.00 |
| 17 | 5.62 | 2.53 | 2.00 | 11.00 | 3.97 | 1.23 | 2.00 | 9.00 | 8.85 | 2.34 | 4.00 | 11.00 |
| 18 | 7.74 | 2.06 | 1.00 | 11.00 | 8.91 | 1.96 | 2.00 | 11.00 | 4.06 | 1.59 | 2.00 | 9.00 |
| 19 | 8.29 | 1.64 | 6.00 | 11.00 | 8.09 | 1.67 | 5.00 | 11.00 | 7.71 | 2.36 | 2.00 | 11.00 |
| 20 | 5.29 | 2.52 | 1.00 | 11.00 | 1.68 | 1.14 | 1.00 | 6.00 | 5.47 | 2.98 | 1.00 | 11.00 |
| 21 | 10.62 | 0.49 | 10.00 | 11.00 | 8.65 | 1.33 | 3.00 | 10.00 | 4.44 | 1.42 | 2.00 | 7.00 |
| 22 | 7.09 | 1.82 | 4.00 | 11.00 | 6.48 | 2.27 | 2.00 | 11.00 | 6.41 | 2.40 | 1.00 | 10.00 |
| 23 | 3.00 | 0.85 | 2.00 | 4.00 | 2.32 | 1.25 | 1.00 | 7.00 | 9.00 | 2.13 | 4.00 | 11.00 |
| 24 | 7.12 | 2.25 | 2.00 | 11.00 | 3.32 | 1.05 | 2.00 | 7.00 | 8.74 | 1.19 | 7.00 | 11.00 |

| Perceived Visual Characteristic | Viewscape Metric | Coefficient | Normalized Coefficient | Student t | p | Tolerance | VIF |
|---|---|---|---|---|---|---|---|
| Perceived Visual Access (n = 32, R² adj = 0.65, p < .001) | (Intercept) | 7.120 | | 7.11 | <.001 *** | | |
| | Extent | 0.000 | 0.390 | 6.74 | <.001 *** | 0.14 | 7.15 |
| | Depth | 0.001 | 0.110 | 2.94 | .003 ** | 0.333 | 3 |
| | Skyline | −0.176 | −0.143 | −2.76 | .006 ** | 0.173 | 5.77 |
| | Relief | −0.158 | −0.119 | −2.85 | .004 ** | 0.27 | 3.7 |
| | Vdepth_var | 0.077 | 0.215 | 3.94 | <.001 *** | 0.157 | 6.36 |
| | Building | −0.180 | −0.414 | −7.61 | <.001 *** | 0.159 | 6.28 |
| | Paved | 0.026 | 0.106 | 2.21 | .028 * | 0.201 | 4.97 |
| | Deciduous | 0.058 | 0.173 | 4.65 | <.001 *** | 0.338 | 2.96 |
| | Herbaceous | −0.044 | −0.20 | −6.87 | <.001 *** | 0.551 | 1.82 |
| | Nump | 19.200 | 0.164 | 3.17 | .002 ** | 0.175 | 5.72 |
| | ED | 0.000 | −0.390 | −7.33 | <.001 *** | 0.163 | 6.12 |
| Perceived Naturalness (n = 34, R² adj = 0.62, p < .001) | (Intercept) | 2.441 | | 3.25 | .001 ** | | |
| | Relief | 0.157 | 0.128 | 3.88 | <.001 *** | 0.471 | 2.12 |
| | Deciduous | 0.057 | 0.187 | 6.30 | <.001 *** | 0.582 | 1.72 |
| | Mixed | 0.074 | 0.370 | 9.07 | <.001 *** | 0.311 | 3.21 |
| | Evergreen | −0.13 | −0.133 | −4.36 | <.001 *** | 0.537 | 1.86 |
| | Herbaceous | 0.067 | 0.335 | 8.26 | <.001 *** | 0.315 | 3.18 |
| | Grass | 0.066 | 0.407 | 7.25 | <.001 *** | 0.164 | 6.11 |
| | Building | −0.12 | −0.302 | −5.42 | <.001 *** | 0.166 | 6.02 |
| | SI | −0.017 | −0.124 | −3.19 | .001 ** | 0.549 | 1.82 |
| | Nump | 7.026 | 0.102 | 2.08 | .038 * | 0.517 | 1.94 |
| Perceived Complexity (n = 34, R² adj = 0.42, p < .001) | (Intercept) | −1.37 | | −3.47 | <.001 *** | | |
| | Relief | 0.152 | 0.126 | 2.58 | .008 ** | 0.549 | 1.82 |
| | Depth | −0.001 | −0.138 | −2.95 | .003 ** | 0.328 | 3.05 |
| | Skyline | 0.06 | 0.072 | 1.59 | .032 * | 0.408 | 2.45 |
| | Building | 0.191 | 0.474 | 10.32 | <.001 *** | 0.344 | 2.91 |
| | SDI | 2.74 | 0.305 | 7.88 | <.001 *** | 0.442 | 2.26 |
| | ED | 0.001 | 0.142 | 3.36 | <.001 *** | 0.367 | 2.73 |
| | Nump | 0.001 | 0.378 | 5.93 | <.001 *** | 0.123 | 8.1 |
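The Tolerance and VIF columns are two views of the same collinearity diagnostic: the variance inflation factor is the reciprocal of tolerance, and tolerance is 1 − R² from regressing a predictor on the remaining predictors. The sketch below illustrates that relationship using tolerance values copied from the table; the function name `vif_from_tolerance` is hypothetical, and small differences from the tabulated VIFs are expected because the tolerances are rounded.

```python
def vif_from_tolerance(tolerance):
    """Variance inflation factor as the reciprocal of tolerance (1 - R_j^2)."""
    if not 0.0 < tolerance <= 1.0:
        raise ValueError("tolerance must lie in (0, 1]")
    return 1.0 / tolerance


# Tolerance values from the Perceived Visual Access model in the table.
tolerances = {"Depth": 0.333, "Deciduous": 0.338, "Herbaceous": 0.551}
vifs = {metric: vif_from_tolerance(t) for metric, t in tolerances.items()}
for metric, vif in vifs.items():
    print(f"{metric}: VIF = {vif:.2f}")
```

Lower tolerance (higher VIF) means a metric shares more variance with the other predictors in the same model.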

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Tabrizian, P.; Petrasova, A.; Baran, P.K.; Vukomanovic, J.; Mitasova, H.; Meentemeyer, R.K. High Resolution Viewscape Modeling Evaluated Through Immersive Virtual Environments. ISPRS Int. J. Geo-Inf. 2020, 9, 445. https://0-doi-org.brum.beds.ac.uk/10.3390/ijgi9070445
