1. Introduction

First-order integer autoregressive (INAR) models have been studied extensively in the literature and applied in a wide range of fields. The models emerged following the work of

McKenzie (

1985) and

Al-Osh and Alzaid (

1987). Higher-order models that make it possible to capture more complex dependence structures in observed count time series are somewhat less well developed.

Various estimation methods for the parameters of these models, including method of moments and conditional least squares, have been widely used. For efficency considerations, maximum-likelihood estimation (MLE) may be desirable, when it is available.

Al-Osh and Alzaid (

1987) present details of MLE in the first-order case.

Bu et al. (

2008) extend this to a particular higher-order model with Poisson innovations using the construction of

Du and Li (

1991). Extensions to a semi-parametric MLE (where no specific distributional assumption is made for the arrivals) is provided in

Drost et al. (

2009) and

Martin et al. (

2011).

Jung and Tremayne (

2011) discuss MLE in a second order model using the random operator of Joe (see e.g.,

Joe 1996).

In their elegant paper,

Bu et al. (

2008) provide the necessary conditional distribution for the higher-order case when the thinning operations are performed independently of one another in their expression (9) and explicitly apply it to the second-order case in their expression (13) and subsequent computations. The parallel expression for a conditional distribution in the case of a

pth-order model considered by

Alzaid and Al-Osh (

1990) has not been given. Indeed,

Alzaid and Al-Osh (

1990, sec. 6) were of the opinion that “... the MLE for the general model seems intractable”, probably because of the unavailability of a required conditional distribution. In this paper we show that the relevant conditional distribution needed for the likelihood is available, making MLE feasible. We focus on the second-order specification here.

The model of

Alzaid and Al-Osh (

1990) has been found useful for practical applications, see e.g.,

Jung and Tremayne (

2006), in the context of stock-type counts exhibiting a pronounced positive autocorrelation. A specific feature of the specification is that it invokes closure under convolution to determine the discrete distribution theory needed for MLE. This also makes the model attractive from a theoretical point of view, as may the fact that the time reversibility applying to the first-order model continuous to hold in higher-order cases (

Schweer 2015).

In this short paper we provide the MLE for the second-order model, examine its finite sample performance and offer an application. We compare the performance of the second-order specification to the widely used first-order model by means of goodness-of-fit techniques and show that the former is preferred to the latter for the data set considered.

The paper is structured as follows.

Section 2 provides some background material for deriving the conditional distributions needed to formulate a MLE and probabilistic forecasting. Then, in

Section 3 we focus on the INAR model of order two of

Alzaid and Al-Osh (

1990) and provide its MLE. The methodology can be extended to higher-order models, though this would be cumbersome.

Section 4 contains the results of simulation experiments to assess the small sample performance of the proposed estimator.

Section 5 illustrates the model’s application to a time series of iceberg order data. The final short section provides concluding remarks.

2. Preliminaries

This section describes means for obtaining discrete conditional, or predictive, distributions for thinning-based first order INAR models. Our approach to using the relevant (multivariate) distribution theory below is to employ the following familiar relationship

where

denotes an unconditional bivariate probability mass function (pmf) and

a corresponding univariate pmf.

To frame the argument, consider the simplest case of a Poisson first-order INAR model, henceforth PAR(1). The model can be written as

for

, where

denotes a random operator, which, in this case, is the well-known binomial thinning operator of

Steutel and van Harn (

1979)

and the

are assumed to be

iid Bernoulli random variables with

and

(

). In the PAR(1) model

is an

iid discrete Poisson innovation with parameter

and

and

are presumed to be stochastically independent for all points in time. The conditional distribution of the number of ‘survivors’,

R, given the past

,

, is well known to be binomial with parameters given by the dependence parameter

and

y. The desired predictive pmf is then the convolution of the binomial pmf

, determining the number of ‘survivors’, with a Poisson pmf

, determining the number of innovations, i.e.,

and yields (2) of

McKenzie (

1988).

Alternatively, one can seek to obtain the bivariate pmf

and the higher dimensional pmf’s in subsequent sections directly. Following

Joe (

1996) (specifically, the Appendix) the trivariate reduction method (see,

Mardia 1970) and its generalization will be used here. Suppose

are independent random variables in the convolution-closed infinitely divisible family

, appropriately indexed by some parameter(s). Then a stochastic representation of the dependent random variables

X and

Y from the same family is given by

compare

Joe (

1996), Equation (A2). It is straightforward to show that

(

Mardia 1970, eq. 9.1.9).

Define the following independent Poisson random variables:

; and

, where

. By independence, the joint distribution of the Poisson random variables

is the product of their marginal distributions

The joint distribution of the dependent variables

X and

Y can be obtained from (

5) by writing the {

} (where here and below

j is used as generic subscript(s) for the relevant independent random variables) in terms of

X and

Y. Introduce the ‘dummy’ argument

. Then (

4) can be written in convenient form as

The linear system (

6) can now be solved for the {

} variables by inverting the transformation defining matrix,

, to give

Since the joint distribution of the {

} is readily available from (

5),

can be obtained by summing across the ‘dummy’ argument

a to give

Although not explicitly indicated, the upper summation limit here is in fact

and is typically quite small because we envisage modelling low order counts. Using (

7) with the case of Poisson innovations gives

This result corresponds to that given as (3) by

McKenzie (

1988) and dividing by

the resulting conditional pmf is that given as (2) in

McKenzie (

1988).

Model (

2) still applies with

, see, e.g.,

Consul (

1989) and

Jung and Tremayne (

2011) for more details of the associated pmf, interpretation of the parameter

and so forth. Such a model will facilitate the handling of overdispersed count data. If closure under convolution is still to apply, it is no longer appropriate to use the binomial thinning operator as

. However, the approach due to

Joe (

1996) can still be employed with an alternative random operator. This leads to the conditional distribution of the survivors, given

, following a quasi-binomial distribution of the form

where

(

Consul 1989, p. 195), rather than a binomial distribution. The convolution of this distribution with that of

being GP gives the transition distribution required for MLE and the joint bivariate pmf of two consecutive observations is GP, thereby preserving closure under convolution. With this modification, a parallel argument following (

1) down to (

7) remains applicable though, of course, the

random variables of, say, (

5) are now GP.

3. The INAR model of Alzaid and Al-Osh

The model specification to be discussed here in detail follows the work of

Alzaid and Al-Osh (

1990). It is based on a second order difference equation, where the thinning operations are applied in a different way from

Du and Li (

1991). The innovation process is assumed to be

, so

The resulting process will henceforth be denoted the PAR(2)-AA model. Process (

8) is stationary provided

. The special character of the PAR(2)-AA process stems from the fact that the two thinning operations in (

8) are not performed independently of one another. The bivariate random variable

, with the thinnings otherwise independent of the past history, is structured so as to follow a trinomial conditional distribution with parameters

. See e.g.,

Jung and Tremayne (

2006) for details.

The particular nature of this construction has various important consequences. First, the process has a physical interpretation as a special branching process with immigration (see e.g.,

Alzaid and Al-Osh 1990). Second, the autocorrelation structure of the model is more complicated than that of a standard Gaussian AR(2) model (compare

Du and Li 1991), for the specific random thinning operations introduce a moving average component into the autocorrelation structure. This is, in fact, of the form generally associated with a continuous ARMA(2,1) model and arises from the mutual dependence structure between the components of

. In particular, the first and second order autocorrelations are

and

(see, e.g.,

Jung and Tremayne 2003).

Note that the PAR(2)-AA model outlined above embodies closure under convolution so that the marginal distribution of

is Poisson with parameter

, thereby redefining

U as used in

Section 2. Using an extension of the technique outlined in

Section 2, we now proceed to obtain the necessary predictive distribution for maximum likelihood estimation of the parameters of this model.

For this purpose we derive the trivariate Poisson distribution and introduce seven independent Poisson random variables: ; , where ; , where ; ; and .

The (dependent) random variables

can be written in terms of the independent {

} random variables as follows:

where, as in (

4), the ‘=’ means ‘equivalent in distribution to’. Such an extension of the trivariate reduction method has been used, inter alia, by

Mahamunulu (

1967),

Loukas and Kemp (

1983) and

Karlis (

2003). Introducing the following ‘dummy’ arguments:

and

, we then have, in compact form,

Solving the linear system (

9) in terms of the

random variables by inverting the transformation defining matrix,

, gives

Proceeding as in

Section 2, we obtain the joint distribution

by summing across the ‘dummy’ arguments, i.e.,

Note that the first order autocorrelation of the PAR(2)-AA model is

and remember that

U has been redefined in this section. Define

and

, then the argument above from (

5) to (

7) in these newly defined independent random variables still applies and the bivariate pmf

for the PAR(2)-AA model is easily seen to be

Using (

10) and (

11) the required conditional distribution is

The unwieldy conditional distribution (

12) can now be used for all purposes where predictive distributions are needed, including MLE, probabilistic forecasting and predictive model assessment. As the PAR(2)-AA model is a stationary Markov chain (see

Alzaid and Al-Osh 1990), conditional MLE (ignoring end effects) based on the logarithm of (

12) can be implemented on the basis of observed counts

using the conditional log-likelihood

where

is the parameter vector of interest. Despite the quadruple summation, the log-likelihood is not too burdensome to calculate, because the upper summation limits are typically quite small. Maximizing (

13) yields MLEs that have the usual desirable asymptotic properties.

We now provide the conditional mean E

for this model. It can be derived from (

12) or, alternatively, using the method described in

Alzaid and Al-Osh (

1990). Utilizing results from

Mahamunulu (

1967) we find, after some tedious algebra (details available on request), the conditional mean to be

which is clearly nonlinear. This is a distinctive feature of the PAR(2)-AA model relative to the one of

Du and Li (

1991) with its linear regression function. The shorthand notation

in the middle part of (

14) indicates the relevant past history

. Finally, note that Equation (5.4) given in

Alzaid and Al-Osh (

1990, p. 323) differs slightly from (

14); after careful checking, we believe our result to be correct.

The argument between (

8) and (

12) can be extended in principle to deal with a

p-th order model of

Alzaid and Al-Osh (

1990, sec. 2). Full details are not provided, because the argument is laborious and e.g., for

fifteen independent

Z variables would be needed.

4. Finite Sample Performance of Parameter Estimators

In this Section, the results of a series of Monte Carlo experiments to assess the finite sample properties of estimators in the PAR(2)-AA model are discussed. Specifically, the performance of the MLE of this paper is assessed relative to three competing estimators. Consistent estimates for the starting values for the MLE procedure can either be based on method of moments (MM) estimators, or obtained from a conditional, or nonlinear, least-squares (NLS) procedure. Parameter estimates may also be obtained using the ubiquitous generalized method of moments (GMM) principle, first introduced into the econometrics literature by

Hansen (

1982).

Here and in what follows, all quantities based on

are, of course, computed using sample analogues. Method of moments estimators can be obtained from moment conditions based on the marginal mean of the counts and on the first and second order sample autocorrelations (see e.g., (

Jung and Tremayne 2006)). An important quantity in the construction of both NLS and GMM estimators is the one-step ahead prediction error, or residual, defined as

. Conditional or non-linear least squares estimators can be based on the criterion function

which is to be minimized with respect to the parameter vector

.

GMM has been used in the context of count data modelling using binomial thinning in at least two contexts previously. In an early paper,

Brännäs (

1994) advocated its used in a univariate INAR(1) context while, more recently,

Silva et al. (

2020) have employed it in a bivariate INMA(1) context where no conditional, or predictive, distribution is available in closed form, thereby precluding the use of MLE. Here we employ an extension of the approach adopted by

Brännäs (

1994) to GMM; see that paper for full details. As there, we entertain only a conditional estimation problem. Based upon the residuals

, which should themselves have zero mean, a suitable set of moment condition follows readily. Since

is comprised of three elements, we employ four moment conditions to give an overidentified GMM problem.

Introduce the notation

to denote the (conditional) variance of the random variable with pmf given in (

12) and noting that, conditionally, E

,

follows. Further, the residuals

should be serially uncorrelated (conditionally as well as unconditionally), so we may also use E

and E

. These two conditions are denoted as

and

below. Hence, the conditions used are:

Since we have no closed form for the (conditional) variance, we use

These moment conditions can all be thought of as conditional moment restrictions. Summing over all available observations, dividing by the (adjusted) sample size and collecting them in a vector gives

, the vector of moment conditions. The GMM criterion function to be minimized is

where

is a positive definite weighting matrix. Since

Brännäs (

1994) finds limited benefit to calculating two-step GMM estimates in the context of the INAR(1) model, we base our results on one-step GMM estimation, where an identity matrix serves as the weighting matrix.

The simulations are designed to resemble situations typically encountered in empirical research and to cover a range of different points in the relevant parameter space. Moreover, one set of parameters is chosen such that it mimics the situation found in the application presented in

Section 5. Series of low counts of length

, 250, and 500 are generated. The design parameters for the simulations are chosen as follows. The level of the generated processes E

varies between

and 4 in order to ensure low level counts. We experimented with other (low) values for the mean of the process and found qualitatively similar results. The dependence structure of the generated processes is governed by a combination of

and

, where

. In an extension of Figure 1 in

Jung and Tremayne (

2003) the

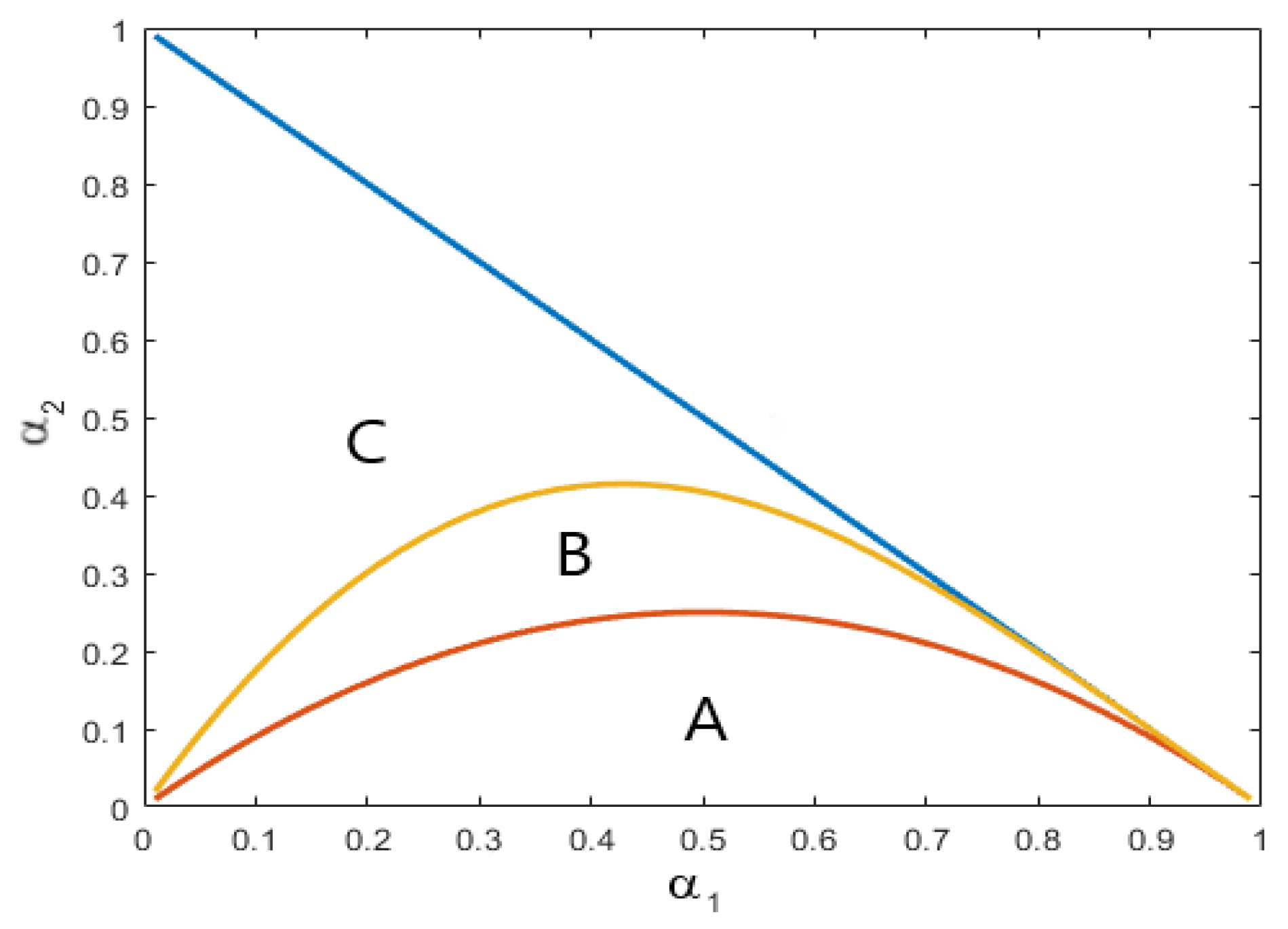

parameter space, along with some informative partitions, is depicted in

Figure 1 here. Combinations of

and

are taken from each of the three areas labelled A, B and C. All combinations from area A exhibit exponentially decaying autocorrelation functions (ACFs), while those from area B exhibit an exponential decaying behaviour for lags higher than two but

. Parameter combinations from area C exhibit more complex dependence patterns, where the ACF is oscillating at least for the first four lags before decaying exponentially to zero. The innovations

are generated as independent Poisson random variables with parameter

. The simulations are carried out using programs written in GAUSS Version 19.

Of course, due to the restricted parameter space and the unconstrained estimation procedures employed in this study, the problem of inadmissible parameter estimates has to be dealt with. The number of simulations runs is chosen such that the statistics displayed in the tables below are based on a minimum of 5000 replications. Simulated data series which lead to inadmissible parameter estimates are discarded. A Referee has indicated that it is helpful to expand on this feature of the results (full details in tabular form are available on request). Overwhelmingly, such replications stem from negative estimates of at the lowest sample size T = 125 and are predominantly infrequent, though they never exceed . Simulation results from four points in the parameter space are reported below. When , inadmissible parameter estimates never arise with the second parameterization; at most of replications are discarded with the third; and at most with the fourth (and then only ever because of inadmissible ). Parametrizations one and four lead to more inadmissible replications, probably stemming from the small value used for in data generation. The results of simulation experiments are exposited by means of tables whose entries summarize the outcomes through the bias, percentage bias and mean squared error (MSE) for the MM, NLS, GMM and ML estimators.

For the first set of simulation experiments we specify a dependence structure with an exponentially decaying ACF. Here

and

are chosen to represent a point in area A in

Figure 1. By setting

to

, the mean of the process is 3. The basic results are displayed in

Table 1; we subsequently display the results from the other three experimants in

Table 2,

Table 3 and

Table 4 and then provide some general discussion.

In the next set of simulation experiments we select a more complex dependence structure. Here

and

are chosen as representive of area B in

Figure 1. By setting

to

, the mean of the process is now 4.

For the third set of simulation experiments, the dependence parametrs are

and

and chosen so as to stem area C in

Figure 1. Choosing

to be

, the mean of the process is again 4.

Finally, the fourth and last set of simulation experiments use a point in the parameter space that is close to the estimated parameter values found for the data employed in

Section 5. For this purpose

and

and

, yielding a mean of

.

Across all four experiments, the estimates for the

parameters tend to be downward biased, while those for

are upward biased. This stems from the negative correlation between the two types of parameter estimators (dependence and location) and is equivalent to the findings reported in

Jung et al. (

2005). While the downward bias for

can be observed over all four tables above, in those cases where

is quite small (

), i.e., in the first and fourth experiments, an upward bias results in particular for low sample sizes. This arises due to the fact that the sampling distribution of

is truncated at zero as only non-negative estimates for that parameter are admissible.

The more complex the dependence structure of the data generating process is, the higher the bias for all estimators tends to become. For instance, in the third experiment, stemming from data generated using parameter values from area C in

Figure 1, the bias for the

parameter can be as large as

for the MM estimation method in small sample sizes. In all cases considered in the simulation experiments, it is seen that use of MLE to estimate the model parameters of the PAR(2)-AA model, in terms of lower biases and smaller mean squared errors, is most efficacious.

With regard to the final set of simulations and the parameter constellation found in the application in the next section, it is evident from

Table 4 that biases in the estimated parameters are negligible, in particular when MLE is employed.

5. Application

In this section we demonstrate the applicability of the PAR(2)-AA model to a real-world data set. We consider iceberg order data from the ask side of the Lufthansa stock sampled from the XETRA trading system at a frequency of 15 minutes during 15 consecutive trading days in the first quarter of 2004. For some explanatory remarks about the nature of iceberg orders, see e.g.,

Jung and Tremayne (

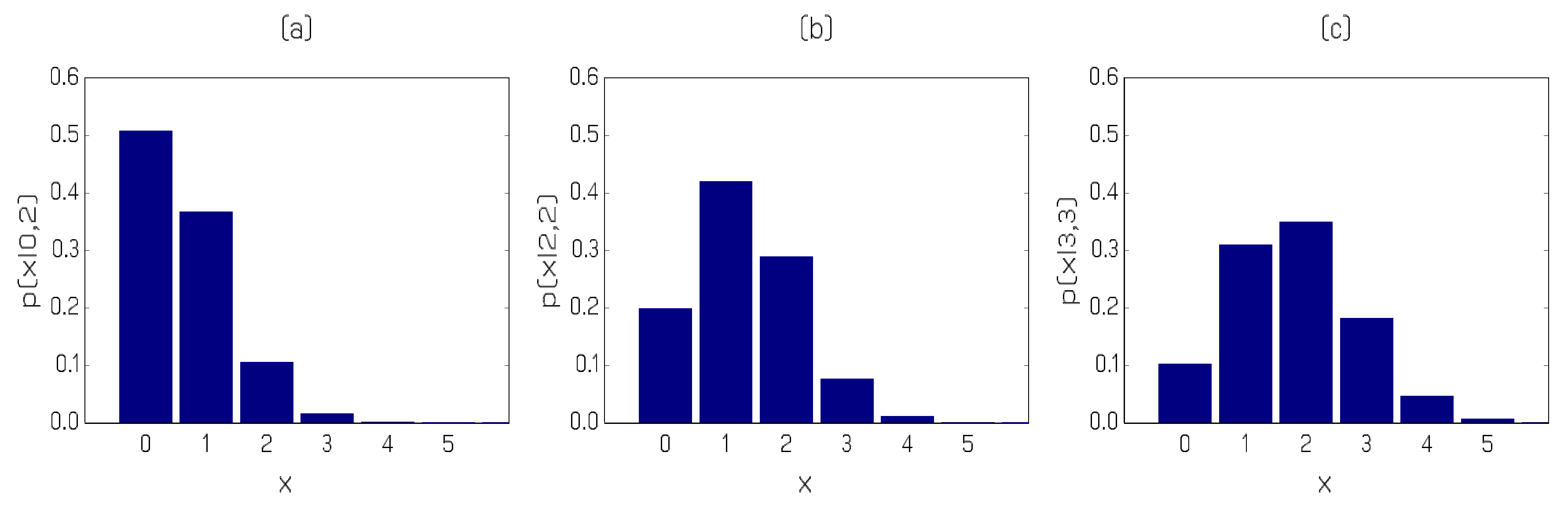

2011). The sample consists of 510 (low) counts ranging from 0 to 4. The marginal distribution of the counts is shown in

Figure 2; the sample mean and variance of the data are, respectively, 0.616 and 0.673. A time series plot and the sample (partial) autocorrelations (S(P)ACFs) are depicted in

Figure 3.

The time series plot of the data shows no discernable trend, the sample mean and variance do not suggest overdispersion and the SACF and SPACF of the data point toward a first or second order autoregressive dynamic structure. Based on these findings we fit a PAR(1) and a PAR(2)-AA model to the first 505 observations, with the last five reserved for an illustrative out-of-sample prediction exercise.

To compare the two model fits, we exploit goodness-of-fit techniques based on: (a) the predictive distributions of the two models, in particular the PIT histogram and scoring rules; (b) the correlogram of the Pearson residuals computed as

; and (c) a parametric resampling procedure based on

Tsay (

1992). See

Jung et al. (

2016) and

Czado et al. (

2009) for more details.

Figure 4 depicts the results for the two fitted models.

It is evident from the panels in

Figure 4 that the fit of the PAR(2)-AA model is superior to that of the PAR(1) model, certainly when it comes to capturing the serial dependence in the data. Further evidence favouring the PAR(2)-AA specification is provided by comparing the scoring rules provided in

Table 5 for the two model fits. We, therefore, report parameter estimates and out-of-sample results for the preferred second order model only.

Table 6 provides the parameter estimates for the three parameters of the PAR(2)-AA model based on method of moments, NLS, GMM and ML estimation, respectively. Estimated standard errors are provided for the ML estimates only. It is evident that the parameter estimate for the

parameter is statistically different from zero, providing further statistical evidence of a preference for the PAR(2)-AA model over the PAR(1) one.

We now present an illustration of out-of-sample prediction for the PAR(2)-AA model. We employ a fixed forecasting environment using only the fit to observations 1 to 505. Observations 504 to 510 are, in fact, (2,0,2,2,3,3,2); since a value 3 is only observed

of times in the entire realization, these data represent a short epoch of values that will perforce be difficult to forecast. Using (

12) we select three predictive distributions with different Markovian histories (pairs of lagged counts) for graphical presentation in

Figure 5. Panel (a) in the Figure corresponds to the prediction of observation number 506 (observed value 2, relevant past history 2,0); the predictive distribution has a mode of zero with an estimated probability close to

so that the observed value of 2 is seen as unlikely, having a predicted probability of around

. In Panel (b) of the Figure, the predictive distribution for observation number 508 is provided (observed value 3, relevant past history 2,2). The predictive distribution for the one-step ahead forecast is now markedly different; the mode has shifted up to 1, the probability of observing another 2 has risen to

and even the large value of 3 is forecast to occur with estimated probability

. Finally, Panel (c) portrays the predictive distribution for observation 510 (observed value 2 with relevant past history 3,3). Note that the estimated probability of observing yet another 3 has risen to about

and the modal forecast is in fact the value observed. Overall, the three panels of the Figure illustrate clearly how rapidly the predictive distribution responds to altering relevant past history.