Current Trends and Opportunities for Competency Assessment in Pharmacy Education–A Literature Review

Abstract

1. Introduction

2. Background

3. Review Methodology

3.1. Aim

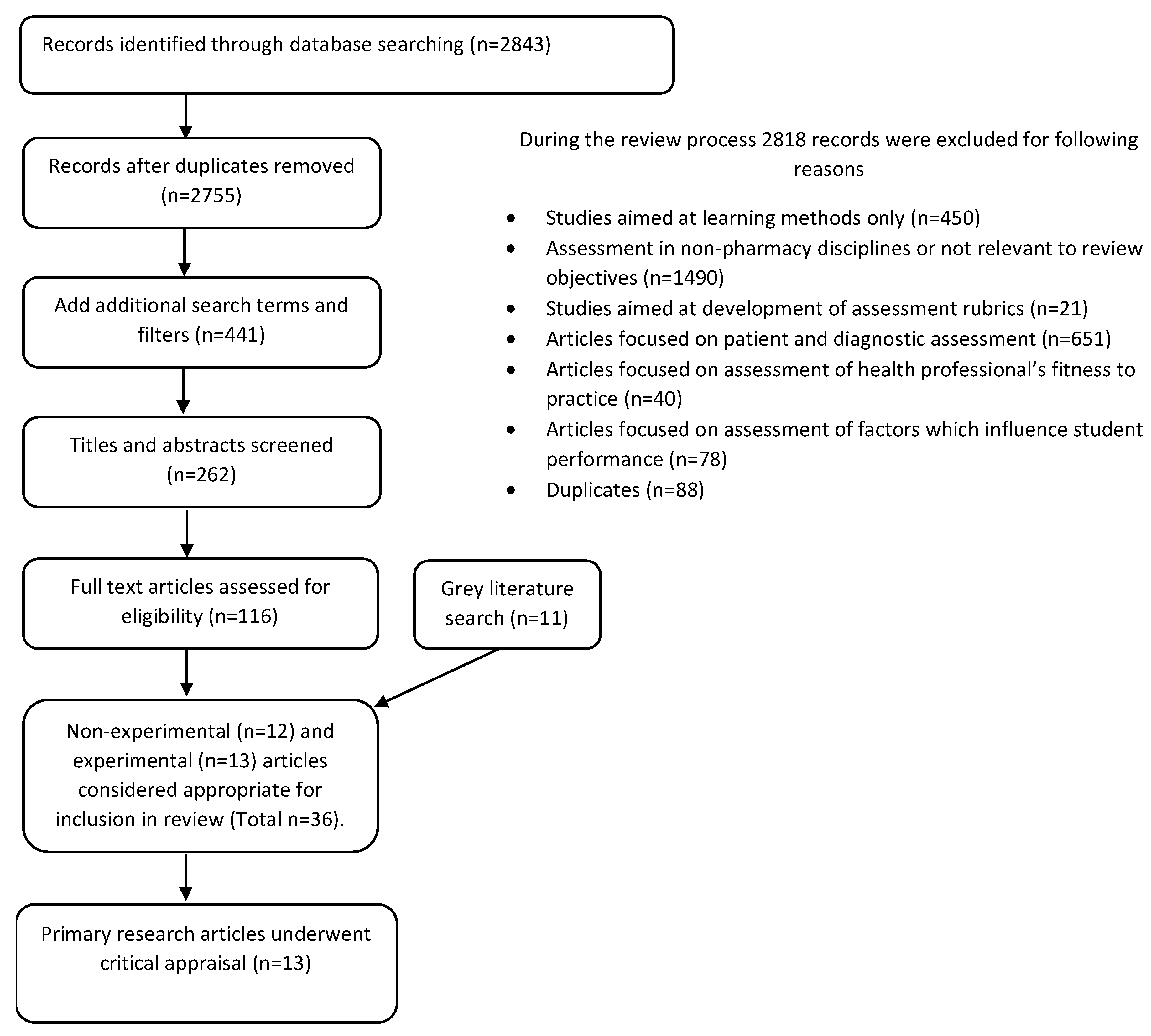

3.2. Methods

3.3. Inclusion/Exclusion Criteria

3.4. Method of Analysis

4. Results

4.1. Achieving Authenticity in Assessment Tasks and Activities

4.2. Reporting on the Validity and Reliability of Assessment Methods

4.3. Selection of Appropriate Assessment Metrics

4.4. Accounting for Different Levels of Ability and Practice

4.5. Integration of Competencies in Assessments

4.6. Assessment of Cognitive Processes

5. Discussion

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Schuwirth, L.W.T.; van der Vleuten, C. How to design a useful test: The principles of assessment. In Understanding Medical Education: Evidence, Theory and Practice; Swanwick, T., Ed.; The Association for the Study of Medical Education: Edinburgh, UK, 2010.

- Anderson, H.; Anaya, G.; Bird, E.; Moore, D. Review of Educational Assessment. Am. J. Pharm. Educ. 2005, 69, 84–100.

- Huba, M.; Freed, J. Learner-Centered Assessment on College Campuses. In Shifting the Focus from Teaching to Learning; Allyn and Bacon: Boston, MA, USA, 2000; Volume 8.

- Koster, A.; Schalekamp, T.; Meijerman, I. Implementation of Competency-Based Pharmacy Education (CBPE). Pharmacy 2017, 5, 10.

- Australian Qualifications Framework Council. Australian Qualifications Framework; Department of Industry, Science, Research and Tertiary Education: Adelaide, Australia, 2013.

- Croft, H.; Nesbitt, K.; Rasiah, R.; Levett-Jones, T.; Gilligan, C. Safe dispensing in community pharmacies: How to apply the SHELL model for catching errors. Clin. Pharm. 2017, 9, 214–224.

- Kohn, L.; Corrigan, J.; Donaldson, M. To Err Is Human: Building a Safer Health System; National Academy Press: Washington, DC, USA, 1999.

- Universities Australia; Australian Chamber of Commerce and Industry; Australian Industry Group; The Business Council of Australia and the Australian Collaborative Education Network. National Strategy on Work Integrated Learning in University Education. Available online: http:///D:/UNIVERSITY%20COMPUTER/Pharmacist%20Reports/National%20Strategy%20on%20Work%20Integrated%20Learning%20in%20University%20Education.pdf (accessed on 19 December 2018).

- Nash, R.; Chalmers, L.; Brown, N.; Jackson, S.; Peterson, G. An international review of the use of competency standards in undergraduate pharmacy education. Pharm. Educ. 2015, 15, 131–141.

- Australian Health Practitioner Regulation Agency. National Registration and Accreditation Scheme Strategy 2015–2020. Available online: https://www.ahpra.gov.au/About-AHPRA/What-We-Do/NRAS-Strategy-2015-2020.aspx (accessed on 6 March 2019).

- Peeters, M.J. Targeting Assessment for Learning within Pharmacy Education. Am. J. Pharm. Educ. 2016, 81, 5–9.

- Schuwirth, L.; van der Vleuten, C.P. Programmatic assessment: From assessment of learning to assessment for learning. Med. Teach. 2011, 33, 478–485.

- Salinitri, F.; O’Connell, M.B.; Garwood, C.; Lehr, V.T.; Abdallah, K. An Objective Structured Clinical Examination to Assess Problem-Based Learning. Am. J. Pharm. Educ. 2012, 76, 1–10.

- Sturpe, D. Objective Structured Clinical Examinations in Doctor of Pharmacy Programs in the United States. Am. J. Pharm. Educ. 2010, 74, 1–6.

- Miller, G. The assessment of clinical skills/competence/performance. Acad. Med. 1990, 65, S63–S67.

- Van der Vleuten, C.; Schuwirth, L.W. Assessing professional competence: From methods to programmes. Med. Educ. 2005, 39, 309–317.

- O’Brien, B.; May, W.; Horsley, T. Scholarly conversations in medical education. Acad. Med. 2016, 91, S1–S9.

- Pittenger, A.; Chapman, S.; Frail, C.; Moon, J.; Undeberg, M.; Orzoff, J. Entrustable Professional Activities for Pharmacy Practice. Am. J. Pharm. Educ. 2016, 80.

- Health and Social Care Information Centre. NHS Statistics Prescribing. In Key Facts for England; Health and Social Care Information Centre: Leeds, UK, 2014.

- Jarrett, J.; Berenbrok, L.; Goliak, K.; Meyer, S.; Shaughnessy, A. Entrustable Professional Activities as a Novel Framework for Pharmacy Education. Am. J. Pharm. Educ. 2018, 82, 368–375.

- Hirsch, A.; Parihar, H. A Capstone Course with a Comprehensive and Integrated Review of the Pharmacy Curriculum and Student Assessment as a Preparation for Advanced Pharmacy Practice Experience. Am. J. Pharm. Educ. 2014, 78, 1–8.

- Nestel, D.; Krogh, K.; Harlim, J.; Smith, C.; Bearman, M. Simulated Learning Technologies in Undergraduate Curricula: An Evidence Check Review for HETI; Health Education Training Institute: Melbourne, Australia, 2014. Available online: https://www.academia.edu/16527321/Simulated_Learning_Technologies_in_Undergraduate_Curricula_An_Evidence_Check_review_for_HETI (accessed on 20 April 2019).

- Van Zuilen, M.H.; Kaiser, R.M.; Mintzer, M.J. A competency-based medical student curriculum: Taking the medication history in older adults. J. Am. Geriatr. Soc. 2012, 60, 781–785.

- Medina, M. Does Competency-Based Education Have a Role in Academic Pharmacy in the United States? Pharmacy 2017, 5, 13.

- International Pharmaceutical Federation. FIP Statement of Policy on Good Pharmacy Education Practice, Vienna (Online); International Pharmaceutical Federation: Den Haag, The Netherlands, 2000.

- Medina, M.; Plaza, C.; Stowe, C.; Robinson, E.; DeLander, G.; Beck, D.; Melchert, R.; Supernaw, R.; Gleason, B.; Strong, M.; et al. Center for the Advancement of Pharmacy Education 2013 Educational Outcomes. Am. J. Pharm. Educ. 2013, 77, 162.

- Webb, D.D.; Lambrew, C.T. Evaluation of physician skills in cardiopulmonary resuscitation. J. Am. Coll. Emerg. Phys. Univ. Ass. Emerg. Med. Serv. 1978, 7, 387–389.

- Pharmaceutical Society of Australia. National Competency Standards Framework for Pharmacists in Australia; Pharmaceutical Society of Australia: Canberra, Australia, 2016; pp. 11–15.

- General Pharmaceutical Council. Future Pharmacists: Standards for the Initial Education and Training of Pharmacists; General Pharmaceutical Council (online): London, UK, 2011.

- Ten Cate, O. Entrustment as Assessment: Recognizing the Ability, the Right and the Duty to Act. J. Grad. Med. Educ. 2016, 8, 261–262.

- Cruess, R.; Cruess, S.; Steinert, Y. Amending Miller’s Pyramid to Include Professional Identity Formation. Acad. Med. 2016, 91, 180–185.

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. Ann. Intern. Med. 2009, 151, 264–269.

- Considine, J.; Botti, M.; Thomas, S. Design, format, validity and reliability of multiple choice questions for use in nursing research and education. Collegian 2005, 12, 19–24.

- Case, S.; Swanson, D. Extended-matching items: A practical alternative to free-response questions. Teach. Learn. Med. 1993, 5, 107–115.

- McCoubrie, P. Improving the fairness of multiple-choice questions: A literature review. Med. Teach. 2004, 26, 709–712.

- Moss, E. Multiple choice questions: Their value as an assessment tool. Curr. Opin. Anaesthesiol. 2001, 14, 661–666.

- Australian Pharmacy Council. Intern Year Blueprint Literature Review; Australian Pharmacy Council Limited: Canberra, ACT, Australia, 2017.

- Cobourne, M. What’s wrong with the traditional viva as a method of assessment in orthodontic education? J. Orthod. 2010, 37, 128–133.

- Rastegarpanah, M.; Mafinejad, M.K.; Moosavi, F.; Shirazi, M. Developing and validating a tool to evaluate communication and patient counselling skills of pharmacy students: A pilot study. Pharm. Educ. 2019, 19, 19–25.

- Salas, E.; Wilson, K.; Burke, S.; Priest, H. Using Simulation-Based Training to Improve Patient Safety: What Does It Take? J. Qual. Patient Saf. 2005, 31, 363–371.

- Abdel-Tawab, R.; Higman, J.D.; Fichtinger, A.; Clatworthy, J.; Horne, R.; Davies, G. Development and validation of the Medication-Related Consultation Framework (MRCF). Patient Educ. Couns. 2011, 83, 451–457.

- Khan, K.; Ramachandran, S.; Gaunt, K.; Pushkar, P. The Objective Structured Clinical Examination (OSCE): AMEE Guide No. 81. Part I: An historical and theoretical perspective. Med. Teach. 2013, 35, e1437–e1446.

- Van der Vleuten, C.P.M.; Schuwirth, L.; Scheele, F.; Driessen, E.; Hodges, B. The assessment of professional competence: Building blocks for theory development. Best Pract. Res. Clin. Obstet. Gynaecol. 2010, 24, 703–719.

- Williamson, J.; Osborne, A. Critical analysis of case based discussions. Br. J. Med. Pract. 2012, 5, a514.

- Davies, J.; Ciantar, J.; Jubraj, B.; Bates, I. Use of multisource feedback tool to develop pharmacists in a postgraduate training program. Am. J. Pharm. Educ. 2013, 77.

- Patel, J.; Sharma, A.; West, D.; Bates, I.; Davies, J.; Abdel-Tawab, R. An evaluation of using multi-source feedback (MSF) among junior hospital pharmacists. Int. J. Pharm. Pract. 2011, 19, 276–280.

- Patel, J.; West, D.; Bates, I.; Eggleton, A.; Davies, G. Early experiences of the mini-PAT (Peer Assessment Tool) amongst hospital pharmacists in South East London. Int. J. Pharm. Pract. 2009, 17, 123–126.

- Archer, J.; Norcini, J.; Davies, H. Use of SPRAT for peer review of paediatricians in training. Br. Med. J. 2005, 330, 1251–1253.

- Tochel, C.; Haig, A.; Hesketh, A. The effectiveness of portfolios for post-graduate assessment and education: BEME Guide No 12. Med. Teach. 2009, 31, 299–318.

- Ten Cate, O. A primer on entrustable professional activities. Korean J. Med. Educ. 2018, 30, 1–10.

- Ten Cate, O. Entrustment Decisions: Bringing the Patient Into the Assessment Equation. Acad. Med. 2017, 92, 736–738.

- Thompson, L.; Leung, C.; Green, B.; Lipps, J.; Schaffernocker, T.; Ledford, C.; Davis, J.; Way, D.; Kman, N. Development of an Assessment for Entrustable Professional Activity (EPA) 10: Emergent Patient Management. West. J. Emerg. Med. 2016, 18, 35–42.

- Cook, D.A.; Reed, D.A. Appraising the Quality of Medical Education Research Methods: The Medical Education Research Study Quality Instrument and the Newcastle-Ottawa Scale-Education. Acad. Med. 2015, 90, 1067–1076.

- Santos, S.; Manuel, J. Design, implementation and evaluation of authentic assessment experience in a pharmacy course: Are students getting it? In Proceedings of the 3rd International Conference in Higher Education Advances, Valencia, Spain, 21–23 June 2017.

- Mackellar, A.; Ashcroft, D.M.; Bell, D.; James, D.H.; Marriott, J. Identifying Criteria for the Assessment of Pharmacy Students’ Communication Skills With Patients. Am. J. Pharm. Educ. 2007, 71, 1–5.

- Kadi, A.; Francioni-Proffitt, D.; Hindle, M.; Soine, W. Evaluation of Basic Compounding Skills of Pharmacy Students. Am. J. Pharm. Educ. 2005, 69, 508–515.

- Kirton, S.B.; Kravitz, L. Objective Structured Clinical Examinations (OSCEs) Compared with Traditional Assessment Methods. Am. J. Pharm. Educ. 2011, 75, 111.

- Kimberlin, C. Communicating With Patients: Skills Assessment in US Colleges of Pharmacy. Am. J. Pharm. Educ. 2006, 70, 1–5.

- Aojula, H.; Barber, J.; Cullen, R.; Andrews, J. Computer-based, online summative assessment in undergraduate pharmacy teaching: The Manchester experience. Pharm. Educ. 2006, 6, 229–236.

- Kelley, K.; Beatty, S.; Legg, J.; McAuley, J.W. A Progress Assessment to Evaluate Pharmacy Students’ Knowledge Prior to Beginning Advanced Pharmacy Practice Experiences. Am. J. Pharm. Educ. 2008, 72, 88.

- Hanna, L.-A.; Davidson, S.; Hall, M. A questionnaire study investigating undergraduate pharmacy students’ opinions on assessment methods and an integrated five-year pharmacy degree. Pharm. Educ. 2017, 17, 115–124.

- Benedict, N.; Smithburger, P.; Calabrese, A.; Empey, P.; Kobulinsky, L.; Seybert, A.; Waters, T.; Drab, S.; Lutz, J.; Farkas, D.; et al. Blended Simulation Progress Testing for Assessment of Practice Readiness. Am. J. Pharm. Educ. 2017, 81, 14.

- World Health Organisation. Ensuring good dispensing practice. In Management Sciences for Health. MDS-3: Managing Access to Medicines and Health Technologies; Spivey, P., Ed.; World Health Organisation: Arlington, VA, USA, 2012.

- Pharmacy Board of Australia. Guidelines for Dispensing Medicines; Pharmacy Board of Australia: Canberra, Australia, 2015.

- The Pharmacy Guild of Australia. Dispensing Your Prescription Medicine: More Than Sticking A Label on A Bottle; The Pharmacy Guild of Australia: Canberra, Australia, 2016.

- Jungnickel, P.W.; Kelley, K.W.; Hammer, D.P.; Haines, S.T.; Marlowe, K.F. Addressing competencies for the future in the professional curriculum. Am. J. Pharm. Educ. 2009, 73, 156.

- Merriam, S.; Bierema, L. Adult Learning: Linking Theory and Practice; Jossey Bass Ltd.: San Francisco, CA, USA, 2014; Volume 1.

- Scalese, R.; Obeso, V.; Issenberg, S.B. Simulation Technology for Skills Training and Competency Assessment in Medical Education. J. Gen. Intern. Med. 2007, 23, 46–49.

- Ryall, T.; Judd, B.; Gordon, C. Simulation-based assessments in health professional education: A systematic review. J. Multidiscip. Healthc. 2016, 9, 69–82.

- Seybert, A.L.; Kobulinsky, L.R.; McKaveney, T.P. Human patient simulation in a pharmacotherapy course. Am. J. Pharm. Educ. 2008, 72, 37.

- Smithburger, P.L.; Kane-Gill, S.L.; Ruby, C.M.; Seybert, A.L. Comparing effectiveness of 3 learning strategies: Simulation-based learning, problem-based learning, and standardized patients. Simul. Healthc. 2012, 7, 141–146.

- Bray, B.S.; Schwartz, C.R.; Odegard, P.S.; Hammer, D.P.; Seybert, A.L. Assessment of human patient simulation-based learning. Am. J. Pharm. Educ. 2011, 75, 208.

- Seybert, A. Patient Simulation in Pharmacy Education. Am. J. Pharm. Educ. 2011, 75, 187.

- Abdulmohsen, H. Simulation-based medical teaching and learning. J. Fam. Community Med. 2010, 17, 35–40.

- Aggarwal, R.; Mytton, O.; Derbrew, M.; Hananel, D.; Heydenberg, M.; Barry Issenberg, S.; MacAulay, C.; Mancini, M.E.; Morimoto, T.; Soper, N.; et al. Training and simulation for patient safety. J. Qual. Saf. Health Care 2010, 19, i34–i43.

- Boulet, J. Summative Assessment in Medicine: The Promise of Simulation for High-stakes Evaluation. Acad. Emerg. Med. 2008, 15, 1017–1024.

- Sears, K.; Godfrey, C.; Luctkar-Flude, M.; Ginsberg, L.; Tregunno, D.; Ross-White, A. Measuring competence in healthcare learners and healthcare professionals by comparing self-assessment with objective structured clinical examinations: A systematic review. JBI Database Syst. Rev. 2014, 12, 221–229.

- Nursing and Midwifery Board of Australia. Framework for Assessing Standards for Practice for Registered Nurses, Enrolled Nurses and Midwives. Australian Health Practitioner Regulation Agency. 2017. Available online: http://www.nursingmidwiferyboard.gov.au/Codes-Guidelines-Statements/Frameworks/Framework-for-assessing-national-competency-standards.aspx (accessed on 14 April 2019).

- Nulty, D.; Mitchell, M.; Jeffrey, A.; Henderson, A.; Groves, M. Best Practice Guidelines for use of OSCEs: Maximising value for student learning. Nurse Educ. Today 2011, 31, 145–151.

- Marceau, M.; Gallagher, F.; Young, M.; St-Onge, C. Validity as a social imperative for assessment in health professions education: A concept analysis. Med. Educ. 2018, 52, 641–653.

- Morgan, P.; Cleave-Hogg, D.; DeSousa, S.; Tarshis, J. High-fidelity patient simulation: Validation of performance checklists. Br. J. Anaesth. 2004, 92, 388–392.

- Morgan, P.; Lam-McCulloch, J.; McIlroy, J.H.; Tarshis, J. Simulation performance checklist generation using the Delphi technique. Can. J. Anaesth. 2007, 54, 992–997.

- Bann, S.; Davis, I.M.; Moorthy, K.; Munz, Y.; Hernandez, J.; Khan, M.; Datta, V.; Darzi, A. The reliability of multiple objective measures of surgery and the role of human performance. Am. J. Surg. 2005, 189, 747–752.

- Boulet, J.; Murray, D.; Kras, J.; Woodhouse, J.; McAllister, J.; Ziv, A. Reliability and validity of a simulation-based acute care skills assessment for medical students and residents. Anesthesiology 2003, 99, 1270–1280.

- DeMaria, S., Jr.; Samuelson, S.T.; Schwartz, A.D.; Sim, A.J.; Levine, A.I. Simulation-based assessment and retraining for the anesthesiologist seeking reentry to clinical practice: A case series. Anesthesiology 2013, 119, 206–217.

- Gimpel, J.; Boulet, D.; Errichetti, A. Evaluating the clinical skills of osteopathic medical students. J. Am. Osteopathy Assoc. 2003, 103, 267–279.

- Lipner, R.; Messenger, J.; Kangilaski, R.; Baim, D.; Holmes, D.; Williams, D.; King, S. A technical and cognitive skills evaluation of performance in interventional cardiology procedures using medical simulation. J. Simul. Healthc. 2010, 5, 65–74.

- Tavares, W.; LeBlanc, V.R.; Mausz, J.; Sun, V.; Eva, K.W. Simulation-based assessment of paramedics and performance in real clinical contexts. Prehosp. Emerg. Care 2014, 18, 116–122.

- Isenberg, G.A.; Berg, K.W.; Veloski, J.A.; Berg, D.D.; Veloski, J.J.; Yeo, C.J. Evaluation of the use of patient-focused simulation for student assessment in a surgery clerkship. Am. J. Surg. 2011, 201, 835–840.

- Kesten, K.S.; Brown, H.F.; Meeker, M.C. Assessment of APRN Student Competency Using Simulation: A Pilot Study. Nurs. Educ. Perspect. 2015, 36, 332–334.

- Rizzolo, M.A.; Kardong-Edgren, S.; Oermann, M.H.; Jeffries, P.R. The National League for Nursing Project to Explore the Use of Simulation for High-Stakes Assessment: Process, Outcomes, and Recommendations. Nurs. Educ. Perspect. 2015, 36, 299–303.

- McBride, M.; Waldrop, W.; Fehr, J.; Boulet, J.; Murray, D. Simulation in pediatrics: The reliability and validity of a multiscenario assessment. Pediatrics 2011, 128, 335–343.

- Franklin, N.; Melville, P. Competency assessment tools: An exploration of the pedagogical issues facing competency assessment for nurses in the clinical environment. Collegian 2013, 22, 25–31.

- Thompson, N. A practitioner’s guide for variable-length computerised classification testing. Pract. Assess. Res. Eval. 2007, 12, 1–13.

- Kreiter, C.; Ferguson, K.; Gruppen, L. Evaluating the usefulness of computerized adaptive testing for medical in-course assessment. Acad. Med. 1999, 74, 1125–1128.

- Pharmaceutical Society of Australia. Dispensing Practice Guidelines; Pharmaceutical Society of Australia Ltd.: Canberra, ACT, Australia, 2017.

- Douglas, H.; Raban, M.; Walter, S.; Westbrook, J. Improving our understanding of multi-tasking in healthcare: Drawing together the cognitive psychology and healthcare literature. Appl. Ergon. 2017, 59, 45–55.

- Shirwaikar, A. Objective structured clinical examination (OSCE) in pharmacy education–A trend. Pharm. Pract. 2015, 13, 627.

- Haines, S.; Pittenger, A.; Stolte, S. Core entrustable professional activities for new pharmacy graduates. Am. J. Pharm. Educ. 2017, 81, S2.

- Pittenger, A.; Copeland, D.; Lacroix, M.; Masuda, Q.; Plaza, C.; Mbi, P.; Medina, M.; Miller, S.; Stolte, S. Report of the 2015–2016 Academic Affairs Standing Committee: Entrustable Professional Activities Implementation Roadmap. Am. J. Pharm. Educ. 2017, 81, S4.

- Marotti, S.; Sim, Y.T.; Macolino, K.; Rowett, D. From Entrustment of Competence to Entrustable Professional Activities. Available online: http://www.mm2018shpa.com/wp-content/uploads/2018/11/282_Marotti-S_From-assessment-of-competence-to-Entrustable-Professional-Activities-EPAs-.pdf (accessed on 14 April 2019).

- Al-Sallami, H.; Anakin, M.; Smith, A.; Peterson, A.; Duffull, S. Defining Entrustable Professional Activities to Drive the Learning of Undergraduate Pharmacy Students; School of Pharmacy, University of Otago: Dunedin, New Zealand, 2018.

- Croft, H.; Gilligan, C.; Rasiah, R.; Levett-Jones, T.; Schneider, J. Thinking in Pharmacy Practice: A Study of Community Pharmacists’ Clinical Reasoning in Medication Supply Using the Think-Aloud Method. Pharmacy 2017, 6, 1.

- Epstein, R.M. Assessment in medical education. N. Engl. J. Med. 2007, 356, 387–396.

- Cowan, T.; Norman, I.; Coopamah, V. Competence in nursing practice: A controversial concept–A focused literature review. Accid. Emerg. Nurs. 2007, 15, 20–26.

- Ilgen, J.; Ma, I.W.Y.; Hatala, R.; Cook, D.A. A systematic review of validity evidence for checklists versus global rating scales in simulation-based assessment. Med. Educ. 2015, 49, 161–173.

- Smith, V.; Muldoon, K.; Biesty, L. The Objective Structured Clinical Examination (OSCE) as a strategy for assessing clinical competence in midwifery education in Ireland: A critical review. Nurse Educ. Pract. 2012, 12, 242–247.

- Rushforth, H. Objective structured clinical examination (OSCE): Review of literature and implications for nursing education. Nurse Educ. Today 2007, 27, 481–490.

- Barman, A. Critiques on the objective structured clinical examination. Ann. Acad. Med. Singap. 2005, 34, 478–482.

- Malec, J.F.; Torsher, L.C.; Dunn, W.F.; Wiegmann, D.A.; Arnold, J.J.; Brown, D.A.; Phatak, V. The Mayo High Performance Teamwork Scale: Reliability and validity for evaluating key crew resource management skills. Simul. Healthc. 2007, 2, 4–10.

- Flin, R.; Patey, R. Improving patient safety through training in non-technical skills. Br. Med. J. 2009, 339.

- Forsberg, E.; Ziegert, K.; Hult, H.; Fors, U. Clinical reasoning in nursing, a think-aloud study using virtual patients–A base for an innovative assessment. Nurse Educ. Today 2014, 34, 538–542.

- Tavares, W.; Brydges, R.; Myre, P.; Prpic, J.; Turner, L.; Yelle, R.; Huiskamp, M. Applying Kane’s validity framework to a simulation based assessment of clinical competence. Adv. Health Sci. Educ. Theory Pract. 2018, 23, 323–338.

- Cizek, G. Progress on validity: The glass half full, the work half done. Assess. Educ. Princ. Policy Pract. 2016, 23, 304–308.

- A move towards outcome-based education models, including competency-based approaches [2,4]

- Increased quality assurance (QA) of tertiary education, evidenced directly through student performance [5]

- Emphasis on human factors implicated in medical error and patient safety [6,7]

- Increasing government and community expectations of, and pressure on, universities to produce ‘work-ready’ graduates [8], including growing attention to ‘registration upon graduation’ (RUG) models, such as those in the US and Thailand, as opposed to the ‘degree plus professional registration’ models common in the UK, Australia and New Zealand [9]

- Increased accreditation requirements for programmes [10]

- Integration of professional competency standards into education programmes [2,9]

- Increasing employer expectations [8,9]

| Assessment Process | Description | Assessment Characteristics Related to Literature Review Themes | ||

|---|---|---|---|---|

| Integration of Competencies | Authenticity | Validity and Reliability | ||

| Multiple-Choice Questions (MCQs) including Extended Matching Questions (EMQs) and computer-adaptive tests (CATs) | Traditional MCQ most widely consists of a question (stem) followed by several (typically 4–5) possible answer options; may also be true/false format [33]. Single-best option MCQ format has been used extensively as a method of assessment [33]. EMQs are organised into four parts; a theme; a list of possible answers (options); question (lead-in statement); and a clinical problem (Stem) [34]. CATs select items for candidate based on their previous response and therefore customise assessment process according to ability. | Primarily assess knowledge in specific subject areas; may be possible to assess higher cognitive processes (e.g., interpretation, knowledge application) with well-constructed clinical scenarios [35]. EMQs are superior to traditional MCQ in assessing problem solving and clinical reasoning abilities [34]. | Lack assessment authenticity [33]; encourage rote learning [33]. | High levels of reliability [33,35]. Validity will vary depending on content and construction of questions, number of answer items. Validity may be improved by implementing training, writing guidelines, peer review and other validation processes [36]. Evidence suggests three-option MCQs improve both assessment efficiency and content validity. |

| Written examination, including modified essay question (MEQ) | Traditional written examination usually requires candidates to respond to a variety of questions using short, long or (mini/modified) essay style; open-ended responses in written form. Questions may elicit specific knowledge or facts, or incorporate theory of clinical skills and communications. | Primarily assess knowledge in specific subject areas; may be possible to assess higher cognitive processes (e.g., interpretation, knowledge application) with well-constructed clinical scenarios [35]. ‘Open-book’ written examinations also assess ability to incorporate assessment of information retrieval and incorporation into response. | Generally, lack authenticity, as students are not able to demonstrate performance. Questions that assess application of knowledge in real-world scenarios are more authentic than those that focus on student’s ability to reproduce information. | Lower levels of reliability when compared with MCQ, since responses are open ended. Wider sampling and more directed questioning generally increase reliability. Validity will vary depending on content and construction of questions, number of answer items. |

| Viva Voce “viva”/traditional oral examination | Oral (rather than written) examination conducted face-to-face with examiner(s). | As well as clinical knowledge, viva may be useful for the assessment of characteristics which are difficult to assess via other techniques such as professionalism, clinical reasoning, ethics, communication skill, problem solving [37]. | Lack assessment authenticity due to the hypothetical nature of questioning. | Viva examinations are often unstructured, wide variation may occur between questioning for different candidates and different assessors, thus they are prone to errors of variability [35]. Inter-rater reliability is generally poor [38] and validity is difficult to establish, dependent on the content of the questions asked, however given the flexibility in being able to vary content has the potential to improve validity [38]. |

| Simulated patient encounters/“role plays” and practical examination | Examination of practice-based skills through demonstration of that task e.g., patient counselling, pharmaceutical compounding examination [21]. | Tend to focus on one area of practice e.g., preparation of compounded product, counselling, medication history taking [39]. | Has the potential to be authentic. Authenticity is increased when psychological fidelity is high and decision-making closely simulates the real context of the skill [40] and requires integration of competencies. | Examples of validity and reliability established for some tools including communication and counselling skills of pharmacists (CCSP) tool [39] and medication related consultation framework (MRCF) [41]. Validity evidence presented in the literature relies heavily on psychometric properties. |

| Objective Structured Clinical Examination (OSCE) | The OSCE objectively tests multiple skill sets in a controlled environment. Candidates move through a series of time-limited stations for the assessment of professional tasks in a simulated environment using standardised marking rubrics [37,42]. | Encourage students to practice skills more holistically [37]. However, OSCEs tend to break down competencies into smaller units which are evaluate separately. Lack complete integration of clinical tasks. | Although OSCEs often use trained simulated patients in simulated environment, authenticity has been questioned as scenarios may not reflect the reality of clinical practice [43]. | Validity and reliability should be established for individual assessments. However, increasing the number of stations can improve validity and reliability [42], while increasing time spent at each station, using a standardised marking tool, use of standardised patients and having multiple assessors at each station can improve reliability [42]. |

| Workplace Based Assessments (WPBAs) | Case-based discussion (CBD) [44], Direct observation including mini-Clinical Evaluation Exercise (CEX) and multisource/360-degree feedback [45,46] are all assessment tools that evaluate performance in the environment in which the practitioner works [37]. | Integration is dependent on the assessment. | Authenticity is high due to the assessment of competence and performance takes place during normal work activities; advantages of authenticity rely on the appropriate use of tools, and engagement of both learner and assessor [37]. | Validity and reliability should be established for individual assessment tools. Often content validity is limited, as the assessment is of the student’s management of one specific case at one point in time. Construct validity is high because the tools assess actual practice in the workplace. Reliability is often dependent on assessor’s training and experience, and may be improved by using standardised, validated assessment tools. Examples of validated assessment tools in pharmacy education, e.g., pharmacy mini-PAT [47]; and in other health disciplines e.g., SPRAT [48]. |

| Portfolio | A collection of longitudinal evidence of professional development, including performance evaluation samples, action plans, self-reflection, evidence of continuing professional development (CPD), presentations, documentation of critical incidents, and evidence of research and quality improvement projects. | Integration depends on the sources of evidence in the portfolio; there is an opportunity to capture evidence from a range of settings that shows the amalgamation of competencies, but the content requirements of the portfolio may need to be clearly defined to ensure this. | Authenticity is high as samples of evidence come directly from the workplace; portfolios may also be used as a repository for completed WPBAs. | A valid method for assessing competence; however, threats to validity exist because contents may vary considerably and are self-reported. Evidence shows a wide range of reliability scores [49]. |

| Entrustable Professional Activities (EPA) | EPAs are used both as a link between competencies and professional responsibilities in practice, and as a mechanism to decide the level of supervision a student requires [20]. | High level of integration, as EPAs require multiple competencies to be applied in an integrative fashion [50]; e.g., clinical tasks such as medicine dispensing combine several domains of competence [51]. | Authenticity is high because competence and performance are assessed while the student performs units of professional practice that reflect the daily work of the practitioner. | Few studies report on the psychometric properties of EPAs. Those that do report moderately strong inter-rater reliability [52] and good face validity. |

| Assessment Type / Miller's Pyramid Level [15] | Knows (Knowledge) | Knows How (Competence) | Shows How (Performance) | Does (Action) | Is (Identity) |
|---|---|---|---|---|---|
| Multiple-Choice Questions (MCQ) | Yes | Partially | No | No | No |
| Extended Matching Questions (EMQ) | Yes | Partially | No | No | No |
| Written Examination | Yes | Yes | No | No | No |
| Computer-Adaptive Testing (CAT) | Yes | Partially | No | No | No |
| Viva Voce/Oral Exams | Yes | Yes | Partially | No | No |
| Simulated patient encounters/practical examination | Yes | Yes | Partially | No | No |
| Objective Structured Clinical Examination (OSCE) | Yes | Yes | Yes | No | No |
| Workplace Based Assessments (WPBA) | Yes | Yes | Yes | Yes | Yes |
| Portfolio | Yes | Yes | Yes | Yes | Yes |
| Entrustable Professional Activities (EPA) | Yes | Yes | Yes | Yes | Yes |

| Citation/Location/Quality | Study Participants/Assessment Approach | Study Aims/Methods | Outcomes and Key Findings | Limitations | Reports on Integration of Competencies (Y/N) | Includes Simulation/Reports on Authenticity (Y/N) | Measures Validity/Reliability of Assessment Tool (Y/N) |
|---|---|---|---|---|---|---|---|
| Santos, S. and Manuel, J. (2017) [54] Brisbane, Australia 5/18 (MERSQI) | Fourth-year undergraduate BPharm students (n = 14) and assessors (n = 6). Demonstration of research skills via submission of an abstract, a poster and an oral presentation. | Aim: To describe and evaluate the design and implementation of an authentic assessment in an undergraduate pharmacy course. Survey of students’ and stakeholders’ perceptions of the authenticity of the assessment. | Perceived authenticity of assessment is subjective and varies between students. Students rated authenticity lower than assessors did. Using a framework to design an authentic assessment is valuable. | Single site. Small sample size. Poor level of detail in statistical reporting. | N | Y | N |
| Hirsch, A.; Parihar, H. (2014) [21] Georgia, USA 12/18 (MERSQI) | Fourth-year undergraduate PharmD students (n = 73). A broad range of assessment tools incorporated into a mega-OSCE to evaluate student knowledge and skills (written and verbal presentations, multiple-choice examinations, short-answer calculations, a standardized patient encounter and pharmaceutical compounding). | Aim: To create a capstone course that provides a comprehensive and integrated review of the pharmacy curriculum. Evaluation of student outcomes based on several assessment tools (components of a mega-OSCE). | 95% of students passed the capstone course. Qualitative data indicated that students rated the capstone course highly. Robust assessment techniques allowed faculty members to detect specific weaknesses and enabled remediation of those skills. | Individual assessments are poorly described. No control group. | N | Y | N |
| Mackellar, A. et al. (2007) [55] Manchester, UK 11/18 (MERSQI) | Pharmacy academics across three universities (n = 38). Tool for patients to assess the communication skills of pharmacy students. | Aim: To identify valid and reliable criteria by which patients can assess the communication skills of pharmacy students. A literature review and focus group discussion generated the potential assessment criteria. A survey was subsequently conducted to measure face validity and reliability for each assessment criterion. | Seven criteria were identified as important measures of pharmacy students’ communication skills and were rated as face valid and reliable. A 5-point descriptor scale (excellent, very good, good, fair and poor) was more discriminating than a 6-point numerical scale. | Limited statistical power due to modest sample size. | N | Y | Y |
| Kadi, A. et al. (2005) [56] Saudi Arabia 10.5/18 (MERSQI) | First-year undergraduate pharmacy students (n = 38). Assay of compounded products for drug content, compared with the nominal concentration. | Aim: To evaluate the accuracy of pharmacy students’ compounding skills. Objective assay results reported as a percentage difference from the nominal concentration. | Errors ranged from 25% to >200% of the label amount. 15% of students required >3 attempts before successfully preparing the solution. Quick and inexpensive analytical methods are important as an objective measure of students’ compounding ability. | Only 54% of students participated. No control group. | N | Y | N |
| Salinitri, F. et al. (2012) [13] Detroit, USA 10.5/18 (MERSQI) | Third-year undergraduate pharmacy students (n = 54). Objective Structured Clinical Examination (OSCE) compared with a written multiple-choice examination. | Aim: To compare pharmacy students’ performance on an OSCE with their performance on a written examination for the assessment of problem-based learning. Effectiveness of the OSCE was evaluated by (1) comparing OSCE results with written examination results; and (2) surveying faculty and student views on the effectiveness of OSCEs. | OSCE performance did not correlate with written examination scores. OSCEs evaluate different competencies (clinical skills, problem solving, communication, social skills, knowledge) from those measured by written examinations (knowledge, problem solving). The OSCE process was valued by students and faculty observers. | Single site. Survey tools were not validated. Order effect (OSCE first versus written examination first) was not measured and may influence results. | Y | Y | N |
| Sturpe, D. (2009) [14] Maryland, USA 7/18 (MERSQI) | PharmD faculty members (n = 88). Objective Structured Clinical Examination (OSCE). | Aim: To describe current OSCE practices (awareness of, interest in, current practice and barriers) in Doctor of Pharmacy (PharmD) programs in the United States. Structured interviews with PharmD faculty members. | 37% of program responses reported using OSCEs; 63% reported that they did not use OSCEs, but half of these were considering incorporating them into their curriculum. | Descriptive statistics were used to analyse interview transcripts. | N | N | N |
| Rastegarpanah, M. et al. (2019) [39] Tehran, Iran 11.5/18 (MERSQI) | Third- and fourth-year undergraduate pharmacy students (n = 12) and faculty experts (n = 7). Standardised patient (SP) simulation encounter. | Aim: To design and validate a tool to assess pharmacy students’ performance in developing effective communication and consultation skills. A 22-item tool was developed, and its psychometric properties were described following its use in student simulation encounters. | High inter-rater reliability between expert raters and SP ratings (p = 0.01). | Small sample size limits generalisability; no control group. No between-scenario analysis to determine the effect of different clinical scenarios. | N | Y | Y |
| Kirton, S.B. and Kravitz, L. (2011) [57] Hertfordshire, UK 13/18 (MERSQI) | Recent graduates of an undergraduate pharmacy program now completing the “preregistration” year (n = 39). Objective Structured Clinical Examination (OSCE) compared with a traditional written examination consisting of a series of multiple-choice and essay questions (MPP3 examination). | Aim: To investigate the correlation between performance in OSCEs and in traditional pharmacy practice examinations at the same level. Grades attained by students in their Year 1, 3 and 4 OSCEs were analysed against assessment data from a same-level Medicines and Pharmacy written examination. | Year 3 OSCE and Year 3 written examination results showed a moderate correlation. OSCEs add value to traditional methods of assessment because the different evaluation methods measure different competencies. | Data from OSCEs in Year 2 of the program were incomplete and were therefore omitted from the analysis. | N | N | N |
| Kimberlin, C. (2006) [58] Florida, USA 10.5/18 (MERSQI) | Faculty members primarily responsible for communication skills instruction (n = 47). Standardised patient (SP)/simulation encounters (including video-recorded consultations); approaches include self-assessment, peer assessment and faculty/expert assessment. | Aim: To describe current practices in the assessment of patient communication skills in US colleges of pharmacy. Syllabi and assessment instruments were gathered from programs, content analyses of the assessment instruments were conducted, and telephone interviews were held with academics about the assessment procedures used in their institutions. | Content analyses revealed considerable variety in the skills assessed and in the formatting and weighting of different skills. Qualitative interview data indicated concerns about the lack of explicit criteria for acceptable performance and a perceived lack of reliability in grading. | Modest (56%) response rate. Assessment methodology poorly described. | N | Y | N |
| Aojula, H. et al. (2006) [59] Manchester, UK 9/18 (MERSQI) | First-year Master of Pharmacy (MPharm) students. Online computer-based assessment (CBA) using the online learning environment WebCT (course tools), consisting of multiple-choice questions (MCQ) and longer questions including text-match, diagram labelling and calculations. | Aim: To explore computer-based approaches for summative assessment, with emphasis on development time, academic rigour, security and organisation. A pilot study was conducted and scores from CBA were compared with traditional marking of exams. | Discrepancy between hand-marking and computer-based marking was <1%; agreement was initially improved by embedding a spellcheck tool. Online summative assessments may be used successfully with (1) an appropriate learning management system with inbuilt assessment tools; (2) time to familiarise staff with writing CBA questions; (3) training of students with formative tests; and (4) a contingency plan in case of internet failure. | Single site. No control group. Pilot study used a module focused on cell biology and biochemistry, which is not representative of practice-based pharmacy knowledge and skills. | N | N | N |
| Kelley, K. et al. (2008) [60] Ohio, USA 12/18 (MERSQI) | Fourth-year undergraduate PharmD students (n = 109). Case-based interactive assessment. | Aim: To develop an assessment tool that would (1) help students review therapeutic decision-making and improve confidence in their skills; (2) provide pharmacy practice residents with an opportunity to lead small-group discussions; and (3) provide program-level assessment data. A survey measured student confidence in their skills and knowledge, and the perceived usefulness of the assessment method. Self-reported confidence levels were compared before and after the assessment. | No significant difference between pre- and post-test self-reported confidence levels. Assessment data were able to inform curricular mapping. 89% of students found the assessment useful. | Single site. Assessment methodology poorly described. | N | N | N |
| Hanna, L-A. et al. (2017) [61] Belfast, UK 11/18 (MERSQI) | Fourth-year Master of Pharmacy (MPharm) students (n = 118). Summative and formative assessment approaches. | Aim: To establish pharmacy students’ views on assessment and an integrated five-year degree. Paper-based self-administered questionnaire. Data analysed using descriptive statistics and non-parametric tests; open-response questions analysed using thematic analysis. | Most respondents considered that formative assessment improved academic performance. | Research students were excluded from the survey. | N | N | N |
| Benedict, N. et al. (2017) [62] Pennsylvania, USA 15/18 (MERSQI) | First-year (P1, n = 111) and third-year (P3, n = 108) undergraduate PharmD students and first-year postgraduate (PGY1, n = 25) pharmacy residents. Five-station blended simulation of the experiences of one patient, structured to correspond to Miller’s Pyramid (including knowledge and performance evaluations) and administered as a progress test. | Aim: To design an assessment of practice readiness using blended-simulation progress testing. The assessment was administered to learners at various points in their professional development to gauge progress. Assessment data were analysed for differences in learner scores (P1, P3 and PGY1) for each station. Rubric validity and reliability were determined. Student perceptions of the assessment were captured using a survey of P1 and P3 students. | Patterns of results were consistent with the expectation that scores would improve with advancing training level. Key performance indicators improved significantly from P1 to P3 and then from P3 to PGY1. 40% of surveyed participants indicated that the assessment was appropriate for their level of learning, with the majority of P3 students agreeing that it was appropriate. | Survey not administered to PGY1 students; low survey response rate (50%) for P3 students. | N | Y | Y |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Croft, H.; Gilligan, C.; Rasiah, R.; Levett-Jones, T.; Schneider, J. Current Trends and Opportunities for Competency Assessment in Pharmacy Education–A Literature Review. Pharmacy 2019, 7, 67. https://doi.org/10.3390/pharmacy7020067
