Deep Learning and Parallel Processing Spatio-Temporal Clustering Unveil New Ionian Distinct Seismic Zone

Abstract

1. Introduction

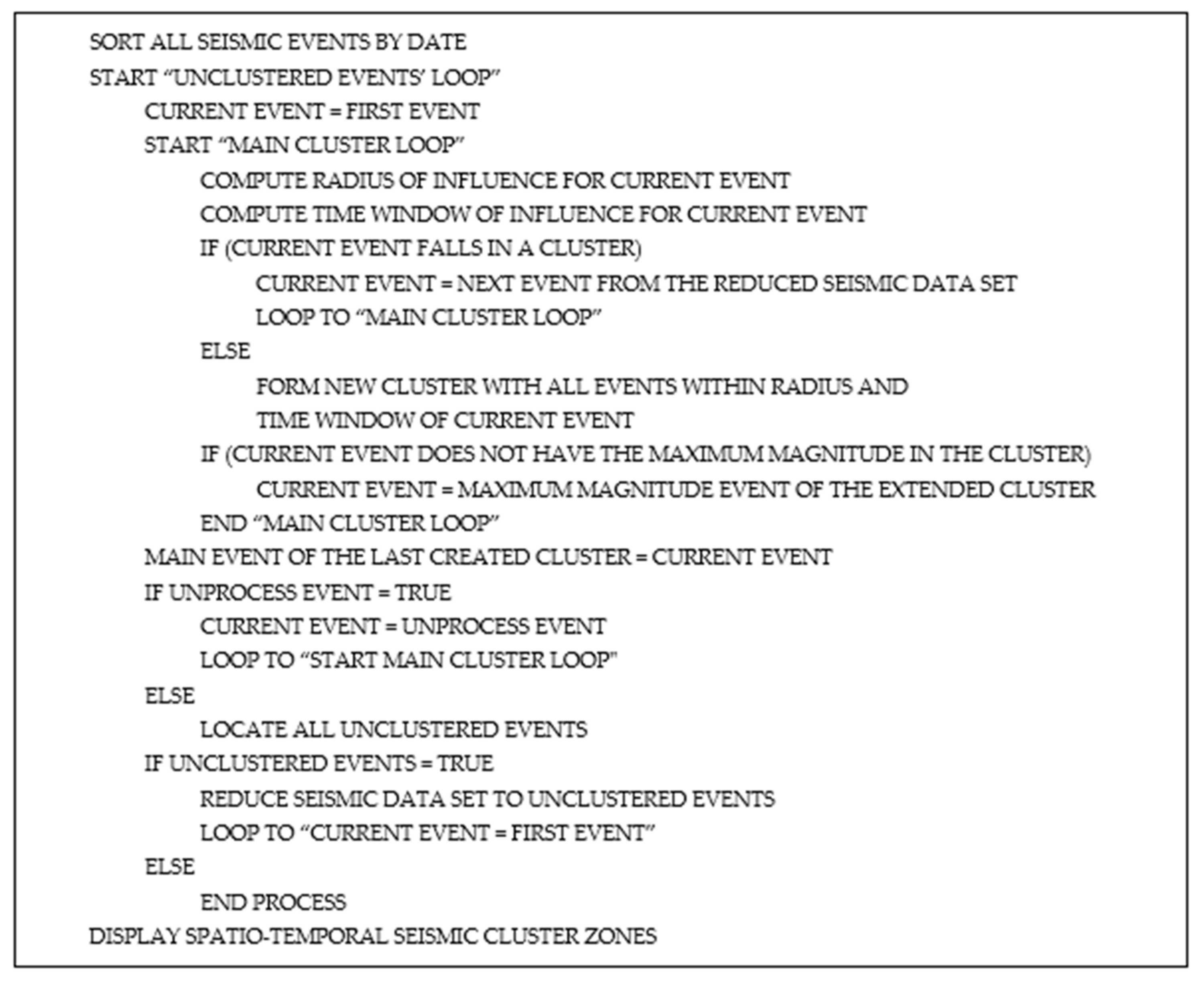

2. Materials and Methods

3. Results

4. Discussion

5. Conclusions

Funding

Conflicts of Interest


Columns Year through Sec give the actual date and time of each event.

| Year | Month | Day | Hour | Min | Sec | Lat (°N) | Long (°E) | Depth (km) | Mag | Estimated Date/(Comments) |
|---|---|---|---|---|---|---|---|---|---|---|
| 1997 | NOV | 18 | 13 | 7 | 36.9 | 37.26 | 20.49 | 5 | 6.1 | 12 September 1997, 20:05:11 |
| 1997 | NOV | 18 | 13 | 13 | 48.3 | 37.36 | 20.65 | 5 | 5.6 | (Significant Interim EQs) |
| 1998 | APR | 29 | 3 | 30 | 37.1 | 35.99 | 21.98 | 5 | 5.5 | (Significant Interim EQs) |
| 2003 | AUG | 14 | 5 | 14 | 53.9 | 38.79 | 20.56 | 12 | 5.9 | 01 June 2003, 22:17:07 |
| 2005 | JAN | 31 | 1 | 5 | 29.1 | 37.41 | 20.11 | 16 | 5.7 | (Significant Interim EQs) |
| 2005 | OCT | 18 | 15 | 25 | 59.5 | 37.58 | 20.86 | 22 | 5.6 | (Significant Interim EQs) |
| 2007 | MAR | 25 | 13 | 57 | 58.2 | 38.34 | 20.42 | 15 | 5.5 | (Significant Interim EQs) |
| 2008 | JAN | 6 | 5 | 14 | 19.3 | 37.11 | 22.78 | 86 | 6.1 | 30 March 2008, 10:28:46 (Possible occurrence of seismic clustering phenomenon where deeper underground faults’ seismic energy release triggered other underground faults in upper ground layers.) |
| 2008 | FEB | 14 | 10 | 9 | 23.4 | 36.50 | 21.78 | 41 | 6.2 | |
| 2008 | FEB | 14 | 12 | 8 | 55.2 | 36.22 | 21.75 | 38 | 6.1 | |
| 2008 | FEB | 20 | 18 | 27 | 4.9 | 36.18 | 21.72 | 25 | 6.0 | |
| 2008 | JUN | 8 | 12 | 25 | 27.9 | 37.98 | 21.51 | 25 | 6.5 | |
| 2008 | JUN | 21 | 11 | 36 | 22.8 | 36.03 | 21.83 | 12 | 5.5 | (Significant Interim EQs) |
| 2009 | FEB | 16 | 23 | 16 | 38.5 | 37.13 | 20.78 | 15 | 5.5 | (Significant Interim EQs) |
| 2009 | NOV | 3 | 5 | 25 | 9.3 | 37.39 | 20.35 | 39 | 5.6 | (Significant Interim EQs) |
| 2015 | NOV | 17 | 7 | 10 | 7.3 | 38.67 | 20.60 | 11 | 6.0 | 27 May 2015, 5:32:20 |
| 2018 | OCT | 25 | 22 | 54 | 49.6 | 37.34 | 20.51 | 10 | 6.6 | 14 April 2019, 10:54:09 |
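As a rough illustration of how the table can be read, the sketch below computes the signed offset, in days, between the estimated date and the actual occurrence time for the five rows that list an explicit estimate. It assumes that the "Estimated Date" entry refers to the event in the same row, which the table itself does not spell out. The names `EVENTS` and `offset_days` are hypothetical and introduced only for this example; the date values are copied from the table.

```python
from datetime import datetime

# (actual occurrence, estimated date) pairs copied from the table rows that
# carry an explicit estimated date. Fractional seconds are stored as
# microseconds. Pairing each estimate with the event in its own row is an
# assumption made for illustration.
EVENTS = [
    (datetime(1997, 11, 18, 13, 7, 36, 900000), datetime(1997, 9, 12, 20, 5, 11)),
    (datetime(2003, 8, 14, 5, 14, 53, 900000), datetime(2003, 6, 1, 22, 17, 7)),
    (datetime(2008, 1, 6, 5, 14, 19, 300000), datetime(2008, 3, 30, 10, 28, 46)),
    (datetime(2015, 11, 17, 7, 10, 7, 300000), datetime(2015, 5, 27, 5, 32, 20)),
    (datetime(2018, 10, 25, 22, 54, 49, 600000), datetime(2019, 4, 14, 10, 54, 9)),
]


def offset_days(actual, estimated):
    """Signed offset in days; negative means the estimate precedes the event."""
    return (estimated - actual).total_seconds() / 86400.0


offsets = [offset_days(actual, estimated) for actual, estimated in EVENTS]

for (actual, _), days in zip(EVENTS, offsets):
    print(f"{actual:%Y-%m-%d}: estimate offset {days:+.1f} days")
```

A negative value means the estimated date falls before the recorded event, a positive value means it falls after. The 2008 estimate is listed against a row that opens a sequence of several strong events, so a single offset may not be the most meaningful summary for that row.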

© 2020 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Konstantaras, A. Deep Learning and Parallel Processing Spatio-Temporal Clustering Unveil New Ionian Distinct Seismic Zone. Informatics 2020, 7, 39. https://0-doi-org.brum.beds.ac.uk/10.3390/informatics7040039
