1. Introduction

The exponential growth of handheld devices, the widespread availability of maps, and inexpensive network bandwidth have popularized location-based services. According to Skyhook, the number of location-based applications being developed each month is increasing exponentially. Thus, spatial queries such as nearest neighbor, range, and reverse nearest neighbor queries [1, 2, 3, 4, 5] have received a significant amount of attention from the research community. However, most of the existing applications are limited to traditional spatial queries, which return objects based on their distances from the query point.

In this paper, we study the top-k spatial preference query, which returns a ranked list of the best spatial objects based on the facilities in their neighborhoods. Given a set of data objects D, a top-k spatial preference query retrieves a set of objects in D based on the quality of the facilities in their neighborhoods, where the quality is calculated by aggregating the distance score and the non-spatial score. Many real-life scenarios illustrate the usefulness of preference queries. For example, consider a scenario in which a real estate agency maintains a database of available apartments. A customer may want to rank the apartments based on neighboring facilities (e.g., markets, hospitals, and schools).

In another example, a tourist looking for a hotel may be interested in hotels located near good quality restaurants, cafes, or tourist spots.

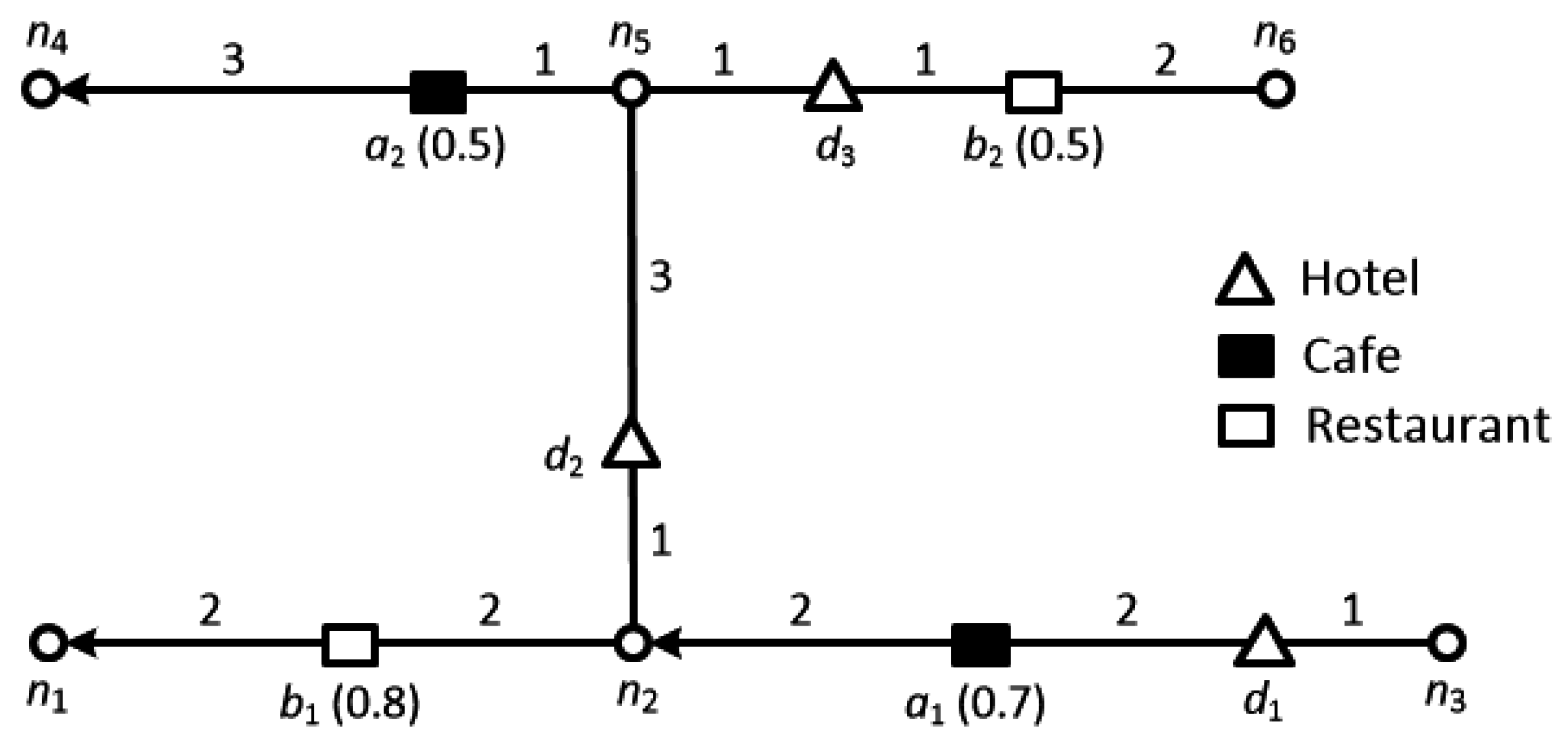

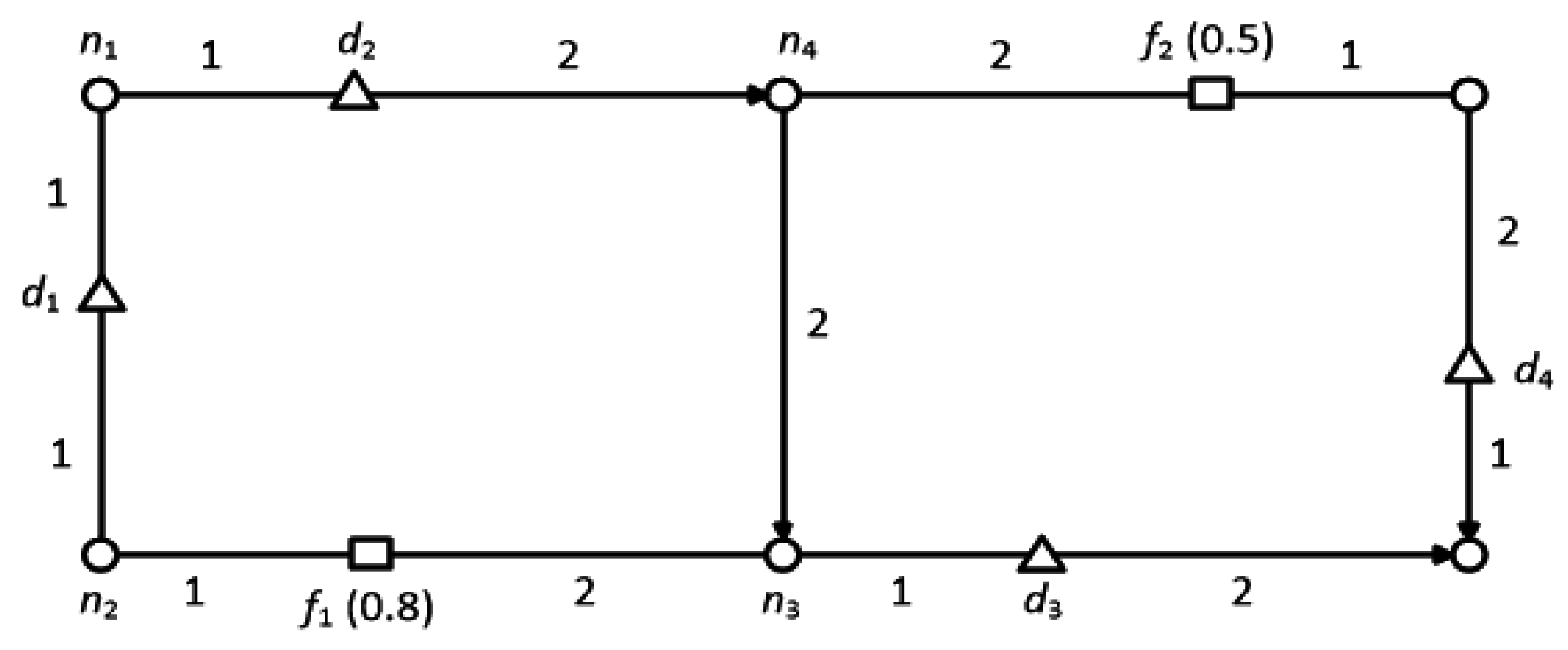

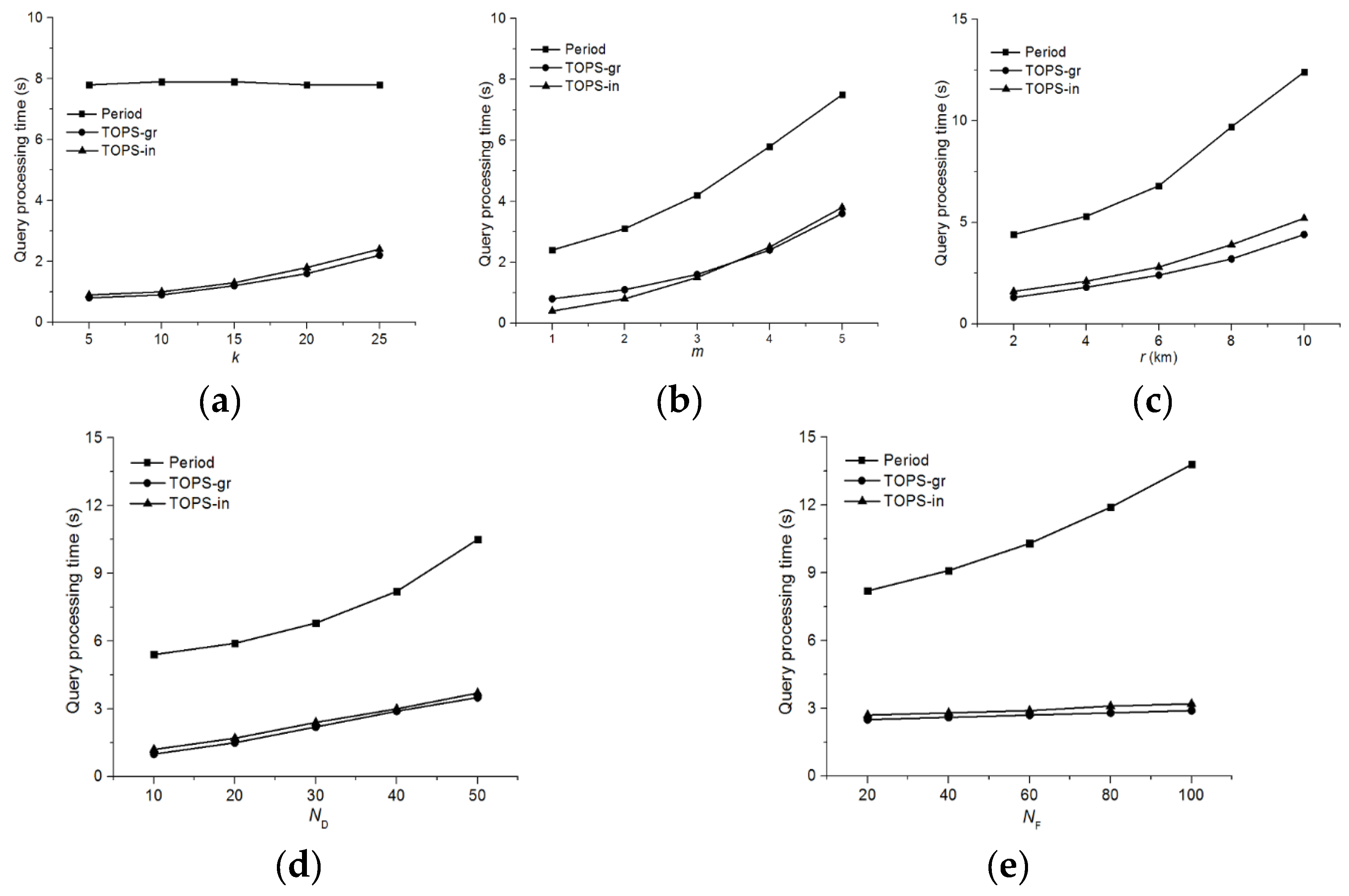

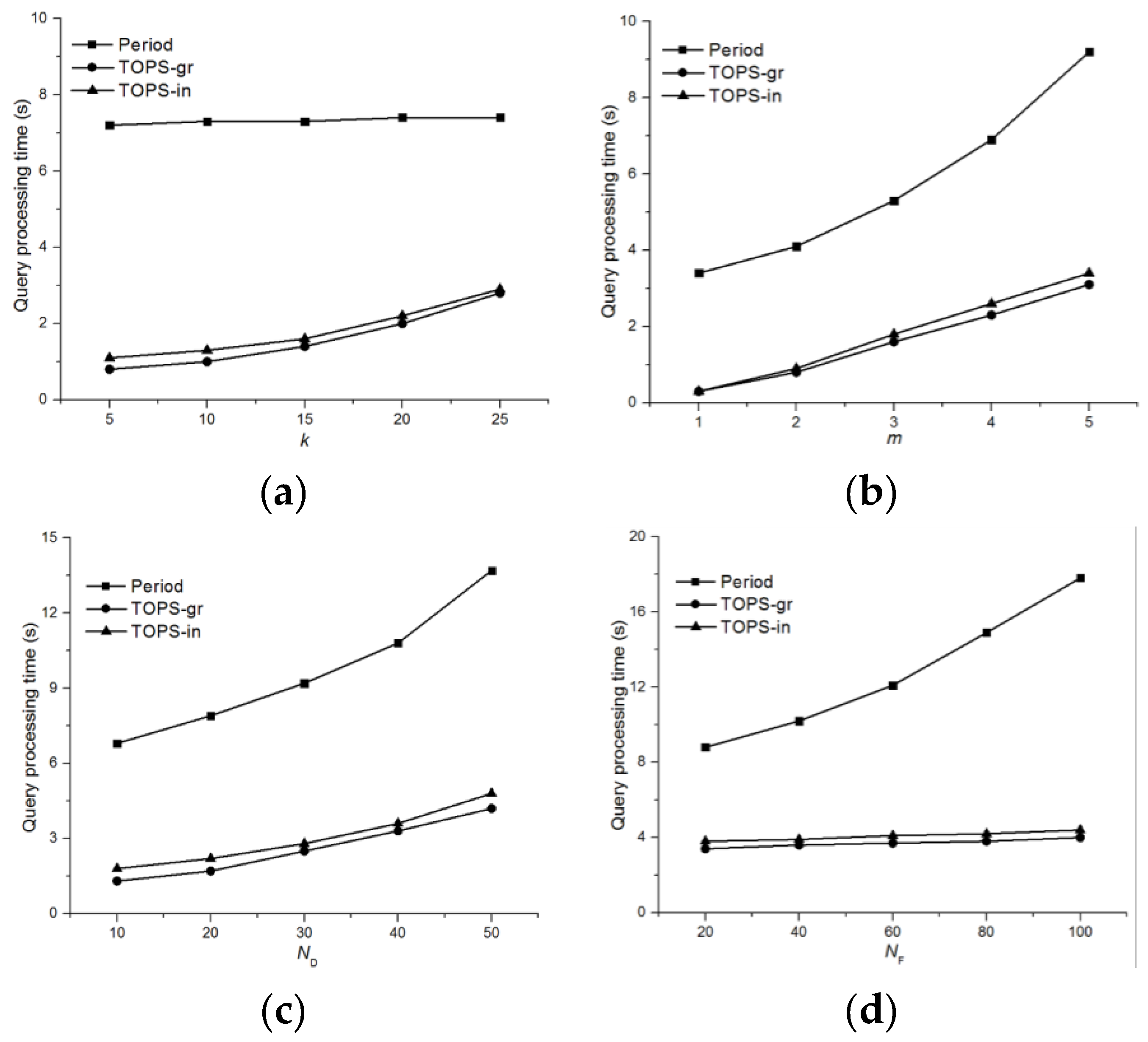

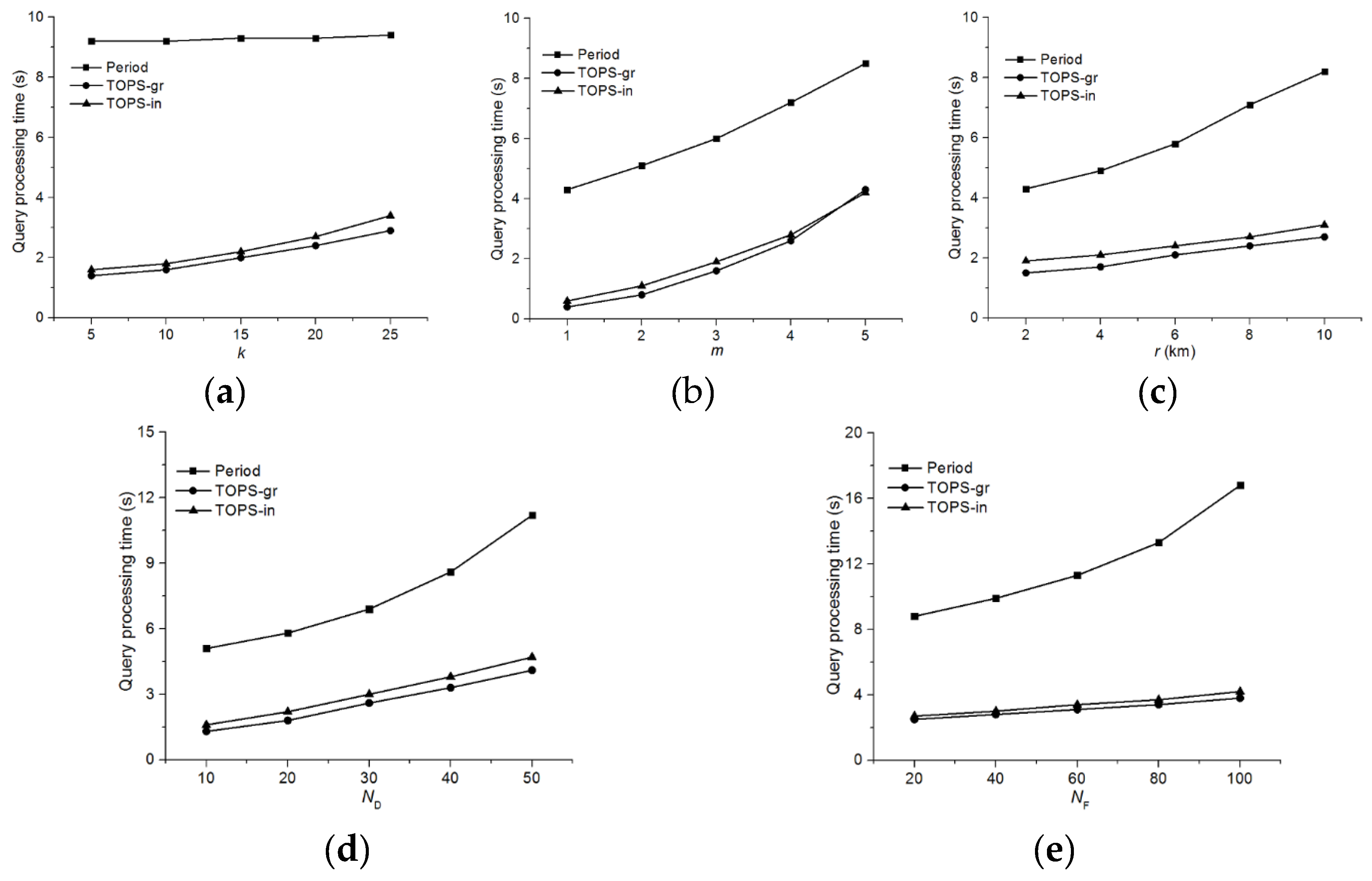

Figure 1 illustrates the data objects (i.e., hotels) as triangles, and two distinct feature datasets: solid rectangles denote cafes while hollow rectangles denote restaurants. The number on each edge denotes the cost of traveling on that edge, where the cost of an edge can be considered as the amount of time required to travel along it e.g.,

. The numbers in parentheses over the feature objects denote the score of that particular feature object e.g., the score of feature object

is 0.5. Now, let us consider a tourist looking for a hotel near cafes and restaurants. The tourist can restrict the range of the query, so we assume that the range is 3 (i.e., r = 3) in this example. The scores of the hotels can be determined according to the following criteria: (1) the maximum quality for each feature in the neighborhood region; and (2) the aggregate of these qualities. For instance, if the hotels are ranked based on the scores of cafes only, the top hotel would be d1 because the scores of d1, d2, and d3 are 0.7, 0, and 0.5, respectively. It should be noted that the score of d2 is 0 although

is within its range, because it is a directed road network and no path connects

to

. In contrast, if the hotels are ranked based only on the scores of restaurants, the top hotel would be d2 because the scores of d1, d2, and d3 are 0, 0.8, and 0.5, respectively. Finally, if the hotels are ranked based on the summed scores of restaurants and cafes, then the top hotel would be d3 because the scores of d1, d2, and d3 are 0.7, 0.8, and 1, respectively.

Two basic factors are considered when ranking objects: (1) the spatial ranking, which is the distance and (2) the non-spatial ranking, which ranks the objects based on the quality of the feature objects. Our top-k spatial preference query algorithm efficiently integrates these two factors to retrieve a list of data objects with the highest score.

Unlike traditional top-k queries [6, 7], top-k spatial preference queries require that the score of a data object is defined by the feature objects that satisfy a spatial neighborhood condition such as the range, nearest neighbor, or influence [8, 9, 10, 11]. Therefore, each pair comprising a data object and a feature object needs to be examined in order to determine the score of the corresponding data object. In addition, processing top-k spatial preference queries in road networks is more complex than in Euclidean space, because the former requires the exploration of the spatial neighborhood along the given road network.

Top-k spatial preference queries are intuitive and have several useful applications such as hotel browsing. Unfortunately, most of the existing algorithms focus on Euclidean space, and little attention has been given to road networks. Indeed, although a few algorithms exist for preference queries in road networks, they all consider undirected road networks. Motivated by these observations, we propose a new approach to process top-k spatial preference queries in directed road networks, where each road segment has a particular orientation (i.e., either directed or undirected). In our method, the feature objects in the road network are grouped together in order to reduce the number of pairs that need to be examined to find the top-k data objects. We propose a method for grouping feature objects based on pivot nodes, as described in detail in Section 4.2.1. All of the pairs of data objects and feature groups are mapped onto a distance-score space, and a subset of pairs that is sufficient to answer spatial preference queries is identified. In order to map the pairs, we describe a mathematical formula for computing the minimum and maximum distances in directed road networks between data objects and feature groups. Finally, we present our Top-k Spatial Preference Query Algorithm (TOPS), which can efficiently compute the top-k data objects using these pairs.

This study is an extended version of our previous investigation of top-k spatial preference queries in directed road networks [12], with four extensions that we did not consider in that preliminary study. The first extension is an enhanced grouping technique that can efficiently obtain multiple feature groups associated with a single pivot node (Section 4.2.1). The second extension adapts the proposed algorithms to neighborhood conditions other than the range score, e.g., the nearest neighbor score and the influence score (Section 5). In the third extension, we present an efficient incremental maintenance method for materialized skyline sets (

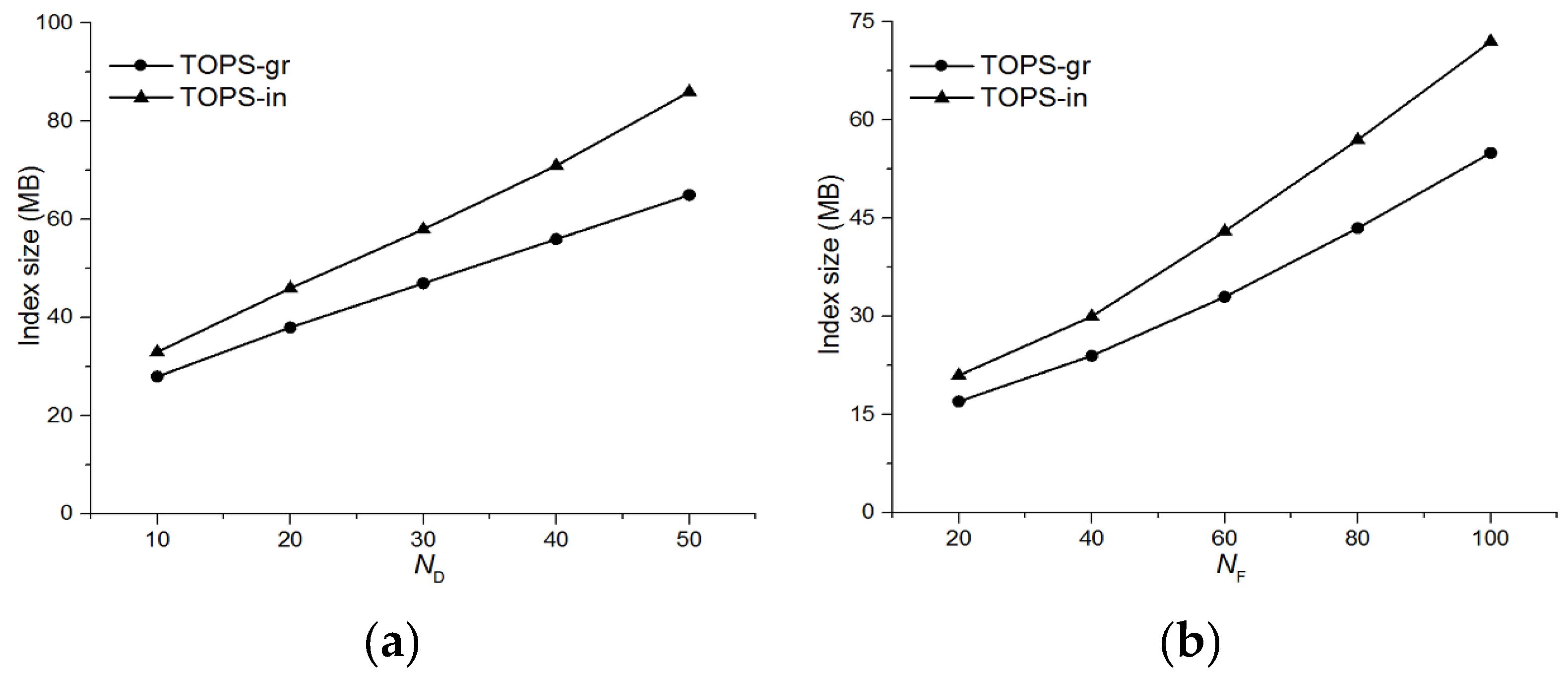

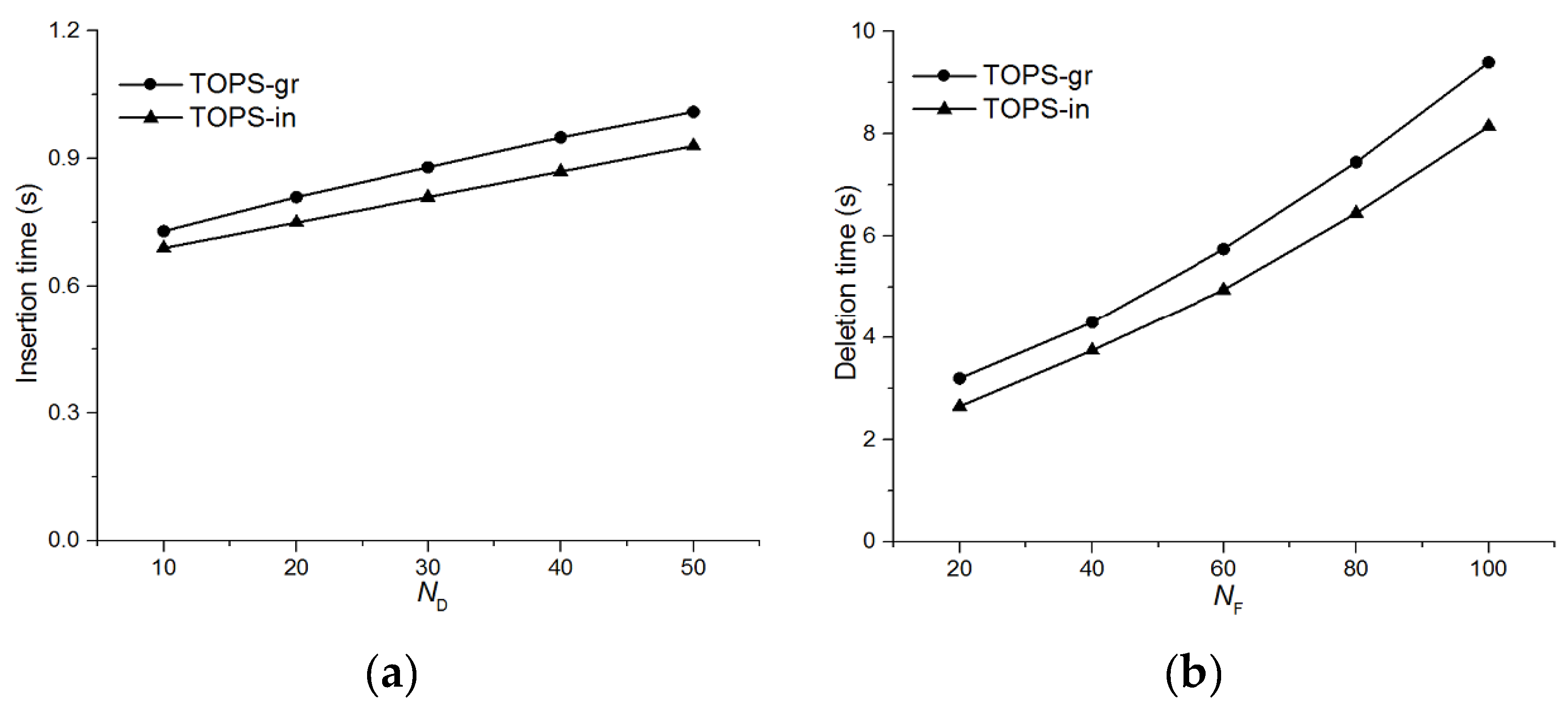

Section 5). In the fourth extension, we conduct an extensive experimental study in which we increase the number of parameters used to evaluate the performance of TOPS and implement two versions of TOPS:

and

where we compare their performance with the Period approach (Section 6). In addition to these major extensions, we also present a lemma to prove that the materialized skyline set is sufficient for determining the partial score of a data object

. Furthermore, we discuss the limitations of algorithms designed for undirected road networks when they are applied to directed road networks (Section 4.4).

The contributions of this paper are summarized as follows:

We propose an efficient algorithm called TOPS for processing top-k preference queries in directed road networks. To the best of our knowledge, this is the first study to address this problem.

We present a method for grouping feature objects based on a pivot node. We show the mapping of data objects and feature groups in a distance-score space to generate a skyline set.

We state lemmas for computing the minimum and maximum distances between the data object and the feature group.

In addition, we propose a cost-efficient method for the incremental maintenance of materialized skyline sets.

Based on experimental evaluations, we study the effects of various parameters on the performance of our proposed algorithm using a real-life road dataset.

The remainder of this paper is organized as follows. We discuss related research in Section 2. In Section 3, we define the primary terms and notation used in this study and formulate the problem. In Section 4, we explain the pruning and grouping of feature objects, as well as the mapping of pairs of data objects and feature groups to a distance-score space. Section 5 presents our proposed algorithm for processing top-k spatial preference queries in directed road networks. Our extensive experimental evaluations are presented in Section 6. Finally, we give our conclusion in Section 7.

3. Preliminaries

Section 3.1 defines the terms and notation used in this paper. Section 3.2 formulates the problem using an example to illustrate the results obtained from top-k spatial preference queries.

3.1. Definition of Terms and Notations

Road Network: A road network is represented by a weighted directed graph G = (N, E, W), where N, E, and W denote the node set, edge set, and edge distance matrix, respectively. Each edge is also assigned an orientation, which is either undirected or directed. An undirected edge is represented by , where and are adjacent nodes, whereas a directed edge is represented as or . The arrows above the edges denote their associated directions.

Segment s(pi, pj) is the part of an edge between two points pi and pj on the edge. An edge comprises one or more segments. An edge is also considered to be a segment whose end points are the nodes of the edge. To simplify the presentation, Table 1 lists the notation used in this study.

3.2. Problem Formulation

Given a set of data objects D and a set of m feature datasets, the top-k spatial preference query returns the k data objects with the highest scores. The score of a data object is defined by the scores of the feature objects in the spatial neighborhood of the data object. Each feature object f has a non-spatial score, denoted as s(f), which indicates the quality (goodness) of f and is graded in the range [0, 1].

The score of a data object d is determined by aggregating the partial scores with respect to a neighborhood condition θ and the ith feature dataset Fi. The aggregation function ‘agg’ can be any monotone function (sum, max, min), but in this study we use the sum to simplify the discussion. We consider the range (rng), nearest neighbor (nn), and influence (inf) constraints as the neighborhood condition θ. In particular, the score is defined as, , where and .

The partial score is determined by the feature objects that belong to the ith feature dataset Fi only and that satisfy the neighborhood condition θ. In other words, it is defined by the highest score s(f) for a single feature object that satisfies the spatial constraint θ. Similar to previous studies [9, 10, 11], the partial scores for different neighborhood conditions θ are defined as follows:

Range(rng) score of d:

Nearest neighbor (nn) score of d:

Influence(inf) score of d:

Next, we evaluate the scores of data objects d1, d2 and d3 in Figure 1. We consider the range constraint value r = 3. Table 2 summarizes the scores obtained for d1, d2 and d3 using the aforementioned definitions of the neighborhood conditions {rng, nn, inf}.

The score of data object d is the sum of the partial score of each feature set. The range score of d1 is calculated as . The ith partial range score of a data object d is the maximum non-spatial score of the feature objects which are within the range r. Therefore, the first partial score , because and . However, because there is no restaurant located within the defined range r. Similarly, the range scores of d2 and d3 are and , respectively.

The nearest neighbor score of d1 is calculated as . The ith partial nearest neighbor score of a data object d is the score of the nearest feature object to d. Therefore, the first partial score , because a1 is the closest feature object of d1 and , whereas because b1 is the closest feature object of d1 and . Similarly, the nearest neighbor score of d2 and d3 are and , respectively.

The influence score of d1 is calculated as . The ith partial influence score of a data object d is calculated using the score of the feature object and its distance to the data object. Specifically, the influence score is inversely proportional to the distance between d and f. Therefore, the influence score decreases rapidly as the distance between the feature object f and data object d increases. The first partial score , because , , , and , whereas , because , , , and . Similarly, the influence score of d2 and d3 are and , respectively.
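The three neighborhood conditions can be sketched in code as follows. This is only an illustration under stated assumptions: the formulas follow the definitions commonly used in the studies cited above (range: the maximum s(f) over feature objects with dist(d, f) ≤ r; nearest neighbor: s(f) of the closest feature object; influence: the maximum of s(f) · 2^(−dist(d, f)/r)), objects are assumed to lie on nodes rather than on edges, and the toy network, node names, and function names are hypothetical and do not correspond to Figure 1.

```python
import heapq

def network_distances(graph, source):
    """Dijkstra on a directed graph given as {node: {neighbor: cost}}."""
    dist = {source: 0.0}
    heap = [(0.0, source)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist.get(u, float("inf")):
            continue  # stale heap entry
        for v, w in graph.get(u, {}).items():
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v] = nd
                heapq.heappush(heap, (nd, v))
    return dist

def partial_scores(graph, d, features, r):
    """Return (rng, nn, inf) partial scores of d for one feature dataset.

    features maps a node to the non-spatial score s(f) of the feature
    object located there (assumption: objects sit on nodes).
    """
    dist = network_distances(graph, d)
    # Feature objects with no directed path from d cannot contribute.
    reachable = {f: s for f, s in features.items() if f in dist}
    rng = max((s for f, s in reachable.items() if dist[f] <= r), default=0.0)
    if reachable:
        nn = reachable[min(reachable, key=lambda f: dist[f])]
    else:
        nn = 0.0
    inf = max((s * 2 ** (-dist[f] / r) for f, s in reachable.items()),
              default=0.0)
    return rng, nn, inf

# Hypothetical directed network: c can reach d, but d cannot reach c.
toy_graph = {"d": {"a": 2, "b": 4}, "a": {"b": 1}, "b": {}, "c": {"d": 1}}
toy_cafes = {"a": 0.5, "b": 0.9, "c": 0.7}
rng_score, nn_score, inf_score = partial_scores(toy_graph, "d", toy_cafes, r=3)
```

In this toy network the cafe at c is ignored for every condition because no directed path leads from d to c, which mirrors the effect of edge orientation discussed for Figure 1.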

3.3. Finding Pivot Nodes

We now discuss the method used for computing the pivot nodes. In our approach, we group the feature objects based on the pivot nodes. Each feature object is associated with one pivot node, and thus feature objects sharing the same pivot node can be grouped together. The performance of the proposed scheme improves when the number of feature groups is small. Therefore, the main objective is to retrieve the minimum number of pivot nodes. Computing the minimum number of pivot nodes is a minimum vertex cover problem. A minimum vertex cover is a smallest set of nodes such that every edge of the graph is incident to at least one node in the set.

Definition 1: A vertex cover of a graph is a subset such that if then either or or both. In other words, a vertex cover is a subset of nodes that contains at least one node on each edge.

The minimum vertex cover problem is NP-hard (its decision version is NP-complete) and it is also closely related to many other hard graph problems. Therefore, numerous studies have designed exact and approximation techniques based on branch and bound, greedy, and genetic algorithms. We employ the technique proposed by Hartmann [28], which is based on the branch and bound algorithm. Because it is a complete algorithm, it is guaranteed to find an optimal solution, i.e., a vertex cover of minimum size. The main drawback of the branch and bound algorithm is that its running time grows rapidly with the size of the graph.

The branch and bound algorithm recursively explores the complete graph by deciding the presence or absence of one node in the cover during each step of the recursive process, and then recursively solving the problem for the remaining nodes. The complete search space can be considered as a tree where each level decides the presence or absence of one node, and there are two possible branches to follow for each node: one corresponds to selecting the node for the cover, whereas the other corresponds to ignoring the node. A node that is selected for the cover is virtually removed from the graph together with all of its adjacent edges. The algorithm does not need to descend further into the tree when a cover has been found, i.e., when all of the edges are covered. Next, the backtracking process starts and the search continues at higher levels of the tree to identify a possibly smaller vertex cover. During backtracking, all of the covered nodes are reinserted into the graph. In this way, the subsets of nodes that yield legitimate vertex covers are determined, and the smallest of them is the minimum vertex cover.
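The search described above can be sketched as follows. This is a minimal illustration and not the implementation of [28]: instead of deciding the presence or absence of one node per level, the sketch branches on an uncovered edge (at least one of its two end nodes must be in the cover), which is a common simplification of the same search tree. The function name and the example edge list are hypothetical.

```python
def min_vertex_cover(edges):
    """Exact minimum vertex cover by branch and bound (edge orientation ignored)."""
    edges = [tuple(e) for e in edges]
    # Trivial initial cover: every end node of every edge.
    best = {n for e in edges for n in e}

    def branch(remaining, cover):
        nonlocal best
        if len(cover) >= len(best):
            return  # bound: this branch cannot beat the best cover found so far
        if not remaining:
            best = set(cover)  # all edges covered with fewer nodes
            return
        u, v = remaining[0]
        for node in (u, v):
            cover.add(node)
            # Selecting a node "removes" all of its incident edges.
            branch([e for e in remaining if node not in e], cover)
            cover.remove(node)  # backtrack: reinsert the node

    branch(edges, set())
    return best

# Hypothetical road network with five nodes and four road segments.
road_edges = [("a", "b"), ("b", "c"), ("c", "d"), ("b", "e")]
pivot_nodes = min_vertex_cover(road_edges)
```

The bound check is what distinguishes branch and bound from plain enumeration: a partial cover that is already as large as the best known cover is abandoned without exploring its subtree.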

Let us consider an example in

Figure 2, where we found that the set of nodes

constructs a minimum vertex cover, that connects all of the edges in a given road network.

4. Pruning and Grouping

Top-k spatial preference queries return a ranked set of spatial data objects. Unlike traditional top-k queries, the rank of each data object is determined by the quality of the feature objects in its spatial neighborhood. Thus, computing the partial score of a data object d based on the feature set Fi requires the examination of every pair of objects (d, f). For a large number of objects, the search space that needs to be explored to determine the partial score is therefore also large, which makes it more challenging to process top-k spatial preference queries efficiently in directed road networks.

In Section 4.1, we discuss the dominance relation and then explain the pruning lemma. Section 4.2 presents the grouping algorithm and the computation of the feature group score, and discusses the computation of the minimum and maximum distances between data objects and feature groups. Section 4.3 describes the mapping of pairs of data objects and feature groups to the distance-score space. Finally, we discuss the limitations of algorithms designed for undirected road networks when applied to directed road networks in Section 4.4.

4.1. Pruning

In this section, we present a method for finding the dominant feature objects, i.e., the only feature objects that contribute to the score of a data object. The feature objects that do not contribute to the score of a data object are pruned. This reduces the search space, thereby decreasing the computational cost. In order to make the pruning step more efficient, we use the pre-computed distances stored in a minimum distance table (MDT). The MDT stores the pre-computed distances between each pair of nodes ni and nj in a directed road network. Each tuple in the MDT is of the form

, where

is used as a search key for retrieving the value of

. It should be noted that the network distance between two nodes, ni and nj, is not symmetrical in a directed road network (i.e.,

). Therefore, we need to insert a separate entry to retrieve the distance from nj to ni.

Figure 2 shows an example of a directed road network, which we employ throughout this section.
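A minimum distance table of this kind can be sketched as a dictionary keyed by ordered node pairs. The sketch below is an assumption about one possible layout, not the storage format used in this study: it runs Dijkstra's algorithm from every node of a small hypothetical directed network and stores a separate entry for each direction.

```python
import heapq

def build_mdt(graph):
    """Return {(ni, nj): network distance from ni to nj} for a directed graph.

    graph is {node: {neighbor: cost}}. Pairs with no directed path are omitted.
    """
    mdt = {}
    for src in graph:
        dist = {src: 0}
        heap = [(0, src)]
        while heap:
            d, u = heapq.heappop(heap)
            if d > dist[u]:
                continue  # stale heap entry
            for v, w in graph.get(u, {}).items():
                if d + w < dist.get(v, float("inf")):
                    dist[v] = d + w
                    heapq.heappush(heap, (d + w, v))
        for dst, d in dist.items():
            if dst != src:
                mdt[(src, dst)] = d  # the ordered pair is the search key
    return mdt

# Hypothetical one-way ring: n1 -> n2 -> n3 -> n1.
ring = {"n1": {"n2": 2}, "n2": {"n3": 3}, "n3": {"n1": 4}}
mdt = build_mdt(ring)
```

Here the entry for (n1, n2) is 2, whereas the entry for (n2, n1) is 7 because the return trip must go through n3, which is why each direction needs its own tuple.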

Before presenting the pruning lemma, let us define some useful terms:

Static Dimension: The static dimensions i (i.e., ) are fixed criteria that are not changed by the motion of the query, such as the rank of any restaurant or price.

Static Equality: An object o is statically equal to object if, for every static dimension i, . We denote the static equality as .

Static Dominance: An object

o is statically dominated by another object

if

is better than

o in every static dimension. We use

to denote that object

statically dominates object

o. We use

to denote that

either statically dominates

o or is statically equivalent to

o. In

Figure 2,

because the non-spatial score of

f1 is better than that of

f2 i.e.,

.

Complete Dominance: To explain the pruning lemma we need to define complete dominance. An object

o is completely dominated by another object

with respect to data object

d, if

as well as

. In other words,

completely dominates

o if

is equally good in terms of its static dimensions and it is also closer to the data object

d. In

Figure 2, the feature object

f1 completely dominates

f2 with respect to

because

and

. On the other hand,

f4 is not completely dominated by

f1, although

but

Dominant Pair (DP): Given two pairs and , is said to dominate , if and . The set of pairs that are not dominated by any other pair in are referred as dominant set .

Lemma 1: A feature object is a dominant object if and only if for any other feature object f for which ,

Proof: The proof is straightforward and is thus omitted. Intuitively, Lemma 1 states that is a dominant object if is closer to d than every other object f and it is at least as good as f in terms of its static dimensions (i.e., ).

In

Figure 2,

f2 is not a dominant object of

d1 because a feature object

f1 exists such that

and

. Hence,

f2 is completely dominated by

f1, and thus it is pruned, whereas,

f4 is a dominant object because it is closer to

d1. Here, note that

f3 cannot be a dominant object for data object

d1 because we are considering a directed road network and no path exists to

f3 from

d1.
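The pruning step can be sketched as a simple dominance filter. The sketch assumes a single static dimension (the non-spatial score) and treats a feature object as completely dominated when another reachable feature object has a score that is at least as high and is strictly closer to the data object; the handling of ties is an assumption. The scores and distances below are hypothetical values chosen only to reproduce the outcome described for d1 and are not taken from Figure 2.

```python
def dominant_features(dist_from_d, scores):
    """Return the feature objects that are not completely dominated.

    dist_from_d: {feature: network distance from the data object}; feature
                 objects with no directed path from the data object are absent.
    scores:      {feature: non-spatial score s(f)}.
    """
    reachable = [f for f in scores if f in dist_from_d]
    dominant = []
    for f in reachable:
        dominated = any(
            g != f
            and scores[g] >= scores[f]            # at least as good
            and dist_from_d[g] < dist_from_d[f]   # and strictly closer
            for g in reachable
        )
        if not dominated:
            dominant.append(f)
    return dominant

# Hypothetical values: f3 has no path from d1, so it has no distance entry.
example_scores = {"f1": 0.9, "f2": 0.6, "f3": 0.8, "f4": 0.5}
example_dist_d1 = {"f1": 3, "f2": 4, "f4": 1}
dominant_d1 = dominant_features(example_dist_d1, example_scores)
```

With these values, f2 is pruned because f1 is both better and closer, f4 survives because it is closer than f1 despite its lower score, and f3 is never considered.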

Figure 3 depicts the mapping of

to the distance-score space

M. We formally define the distance-score space in

Section 4.3. The black square shows the mapping of pairs

where

and

. Now, by applying the dominance relationship onto the mapping in

Figure 3a, we find that pair

is completely dominated by

. Therefore,

.

Figure 3b shows that

and

are dominated pairs. Similarly,

Figure 3c shows the mapping of

, and it is clear that both pairs

and

are not dominated by any other pair.

4.2. Grouping

In this section, we describe our approach for grouping the feature objects. The pruning phase reduces the number of feature objects, but this number can be reduced further by merging them into groups. In addition, the grouping technique reduces the size of the skyline set and the number of entries in the R-tree [29], thereby enhancing the efficiency of the algorithm by reducing its memory consumption. As mentioned earlier, the score of a data object d is computed from the scores of the feature objects, which requires that we examine every pair of objects (d, f). The performance of the algorithm declines dramatically if the number of feature objects is excessively high. Therefore, the main purpose of grouping is to further reduce the number of feature objects by grouping them together, which consequently reduces the number of pairs. Thus, instead of evaluating the individual pairs (d, f), our algorithm evaluates the pairs (d, g), where g denotes a feature group and the set of feature groups is represented as Gi. Grouping the feature objects has two main advantages as follows.

4.2.1. Grouping Method

We now discuss the method for grouping feature objects based on pivot nodes. We described the technique for finding the minimum number of pivot nodes in Section 3.3. For grouping, each feature object is associated with one pivot node. In the pruning phase, we find the dominant feature objects for each data object. The dominant feature objects of each data object are grouped together if they are associated with the same pivot node. In our previous study [12], we grouped all the feature objects connected to one pivot node, thereby generating one feature group per pivot node. However, in some cases, more than one feature group may be associated with a single pivot node if the dominant feature objects of multiple data objects share the same pivot node.

Let us consider the same example shown in

Figure 2, where node

is the pivot node and the feature objects f1, f2, f3, and f4 are connected to it. As mentioned in Section 4.1, for data object d1 the dominant objects are f1 and f4, whereas feature objects f2 and f3 are pruned. However, for data object d2, f1 and f3 are dominant, whereas f2 and f4 are pruned. Therefore, two groups are formed

and

which are associated with pivot node

.

Table 3 summarizes the grouping of feature objects.
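The grouping step can be sketched as follows, assuming that the dominant feature objects of each data object and the pivot node of each feature object are already known. The pivot name "p", the scores, and the function name are hypothetical. The group score is taken here as the maximum score of the members, and the check of the neighborhood condition is omitted for brevity.

```python
from collections import defaultdict

def group_by_pivot(dominant, pivot_of, scores):
    """Group the dominant feature objects of each data object by pivot node.

    dominant: {data_object: [dominant feature objects]}
    pivot_of: {feature: pivot node}
    scores:   {feature: non-spatial score s(f)}
    Returns {data_object: {pivot: (sorted members, group score)}}.
    """
    result = {}
    for d, feats in dominant.items():
        by_pivot = defaultdict(list)
        for f in feats:
            by_pivot[pivot_of[f]].append(f)
        result[d] = {
            pivot: (sorted(members), max(scores[f] for f in members))
            for pivot, members in by_pivot.items()
        }
    return result

# Dominant objects as described in the text; scores and pivot are hypothetical.
dominant_sets = {"d1": ["f1", "f4"], "d2": ["f1", "f3"]}
pivots = {"f1": "p", "f2": "p", "f3": "p", "f4": "p"}
feature_scores = {"f1": 0.9, "f2": 0.6, "f3": 0.8, "f4": 0.5}
groups = group_by_pivot(dominant_sets, pivots, feature_scores)
```

The result contains two different groups for the same pivot node, one per data object, which is the situation that the enhanced grouping technique is meant to handle.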

4.2.2. Computation of the Group Score

Due to the separate score of each feature object, the computation of partial score

becomes costly for a large number of feature objects. We devised a new method for calculating the partial scores based on the group score denoted as

. The group score is the highest score among the feature objects in a group that satisfy the neighborhood condition.

Table 3 shows that

,

and

. Therefore,

which is the highest score among the feature objects that belong to

. The score of other groups can be computed in a similar fashion.

The partial score

by using

can be defined as follows:

Range(rng) score of d:

Nearest neighbor (nn) score of d:

Influence(inf) score of d:

We modify the formulae presented in

Section 3.2 to compute the partial score

by using the group score

instead of the feature score

. The only difference is

is used instead of

for range and influence score whereas

is used instead of

for nearest neighbor score.

4.2.3. Computation of the Distance between a Data Object and Feature Group

In this section, we present lemmas for computing the minimum and maximum distances between a data object and a feature group. The subset of pairs

retrieved in the grouping step is indexed in an R-tree [

17], where it is necessary to compute the minimum and maximum distances between a data object and feature group. Lemma 2 presents the computation of

, while Lemmas 3 and 4 describe the computation of

for

and

, respectively.

Lemma 2: Given a data object and a feature group

g,

is as follows:

Proof: This lemma is self-evident, so the proof is omitted. Here, denotes the boundary point of feature group. For , ,

We consider a directed road network and thus to determine

, it is necessary to evaluate

and

where,

and

refer to directed and undirected segments, respectively. To compute

, we consider the two cases separately: (1)

and (2)

. For

we use the method proposed by Cho et al. in [

11].

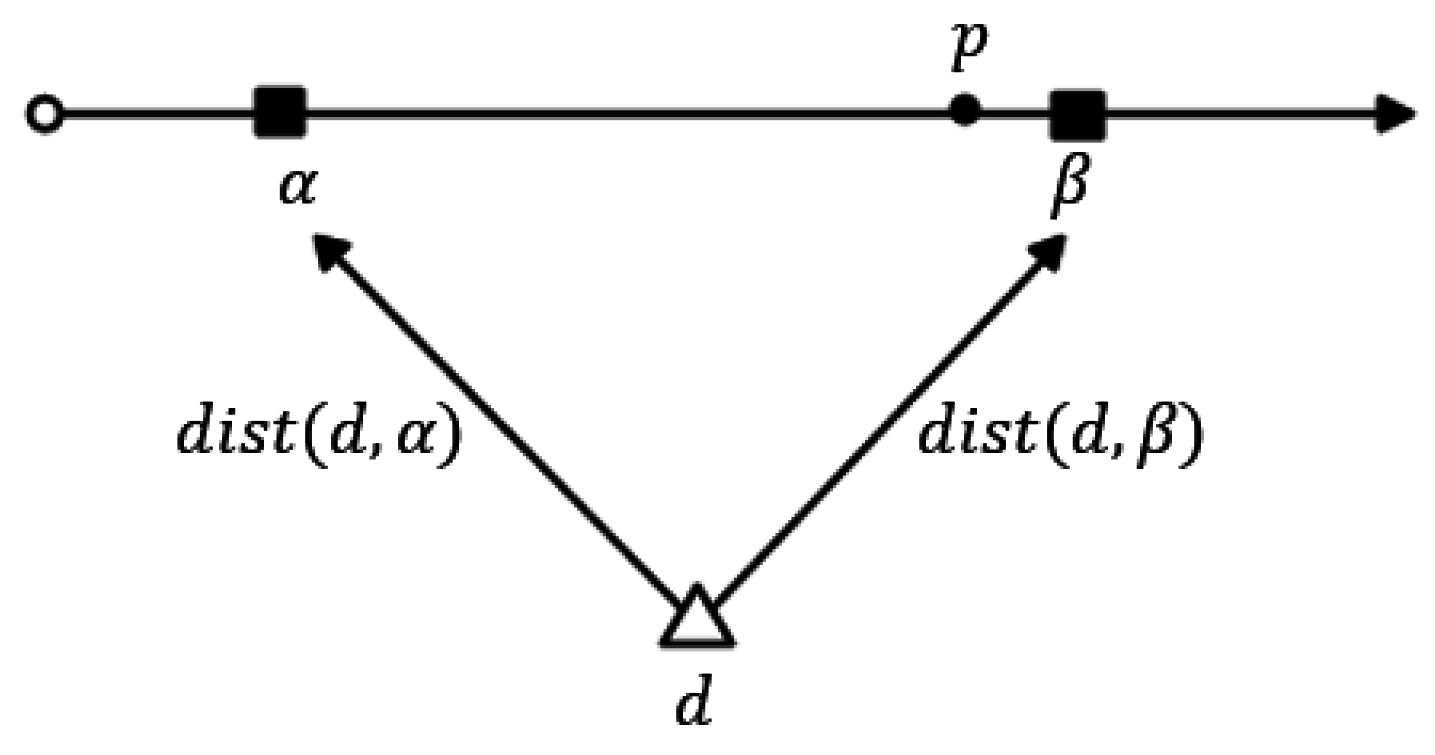

Lemma 3: If , then there are the following two cases:

(Case 3a): If a path from

to

exists,

Proof: Let us suppose that

and

β are the boundary points on the road segment that belongs to a feature group and data object

d is located between

As shown in

Figure 4, there exists a point

p such that

. According to

Figure 4, it is obvious that

. From the equation above, we can observe that the distance value will increase with the value of

, so to obtain the maximum distance value, the point

p must be very close to

d, and thus we can say that

. Therefore, we can rewrite the equation above as

which is equal to

.

(Case 3b): If no path exists from to ,

Proof: This lemma is self-evident, so the proof is omitted.

Lemma 4: If

, then

is as follows:

Proof: According to the definition

. As shown in

Figure 5, it is obvious that we obtain the maximum distance from

d to

p when point

p is located very close to

, and thus we can say that

. Two paths exist from

d to

:

and

, and thus

or

Therefore, we can say that

.

Table 4 summarizes the minimum and maximum distances along with the score for the

in

Figure 2.

4.3. Mapping to Distance-Score Space

In this section, we formally define the search space of the top-k spatial preference queries by defining a mapping of the data objects d and any feature group g to a distance-score space. Let denote a pair comprising data object and a feature group , then is represented as . Each pair is mapped to either a point or a line segment in the distance-score space M, defined by the axes and , where corresponds to the distance between data object d and feature group g and corresponds to score of .

Definition 2: (Mapping of to M): The mapping of pairs comprising a data object and a feature group to the 2-dimensional space M (called distance-score space) is .

In the following, we define the dominance relation which is the subset of pairs of M that comprise the skyline set of M, denoted as . The skyline set S is the set of pairs which are not dominated by any other pair .

Definition 3: (Dominance ): Given two pairs is said to dominate another pair , denoted as , if and or if and .

Let

be the set of all pairs that are not dominated by any other pair in

.

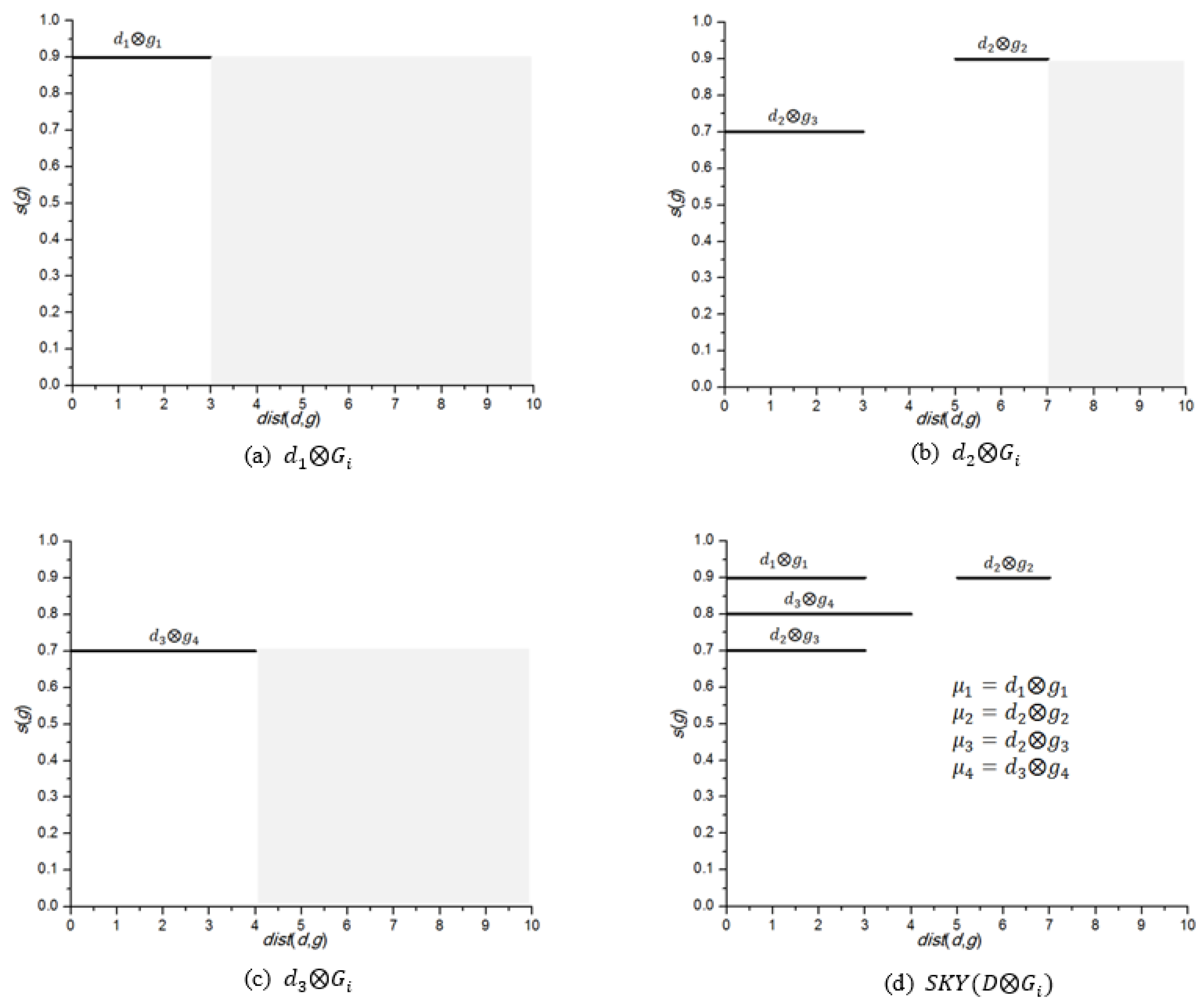

Figure 6 shows the mapping of

in

Table 4 to the distance-score space

M.

Figure 6a shows the mapping of

,

Figure 6b shows the mapping of

and

Figure 6c shows the mapping of

. The skyline sets of

,

and

are

,

and

, respectively. The pairs related to different data objects (e.g.,

and

) are definitely incomparable. Finally, the skyline set for

is the union of the skyline sets of all the data objects

. Thus,

Figure 6d shows the skyline set,

.
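The construction of the skyline set can be sketched as follows. The sketch makes two simplifying assumptions that are not taken from the definitions above: each pair is mapped to a single point (one distance value instead of a minimum and maximum distance), and a pair dominates another pair of the same data object if it is no farther and strictly better, or strictly closer and at least as good. Pairs of different data objects are treated as incomparable, as stated in the text. All names and values are hypothetical.

```python
def dominates(p, q):
    """p and q are (data_object, group, distance, score) tuples."""
    if p[0] != q[0]:
        return False  # pairs of different data objects are incomparable
    _, _, dist_p, score_p = p
    _, _, dist_q, score_q = q
    return ((dist_p <= dist_q and score_p > score_q)
            or (dist_p < dist_q and score_p >= score_q))

def skyline(pairs):
    """Return the pairs that are not dominated by any other pair."""
    return [q for q in pairs if not any(dominates(p, q) for p in pairs)]

# Hypothetical pairs in the distance-score space.
pairs = [
    ("d1", "g1", 2, 0.9),
    ("d1", "g2", 3, 0.5),   # dominated by (d1, g1): farther and worse
    ("d1", "g3", 1, 0.4),   # kept: closer than every other pair of d1
    ("d2", "g1", 5, 0.9),   # kept: only pair of d2
]
skyline_set = skyline(pairs)
```

Because the dominance test never compares pairs of different data objects, the result equals the union of the per-object skyline sets.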

Observe that in

Figure 6a,

is mapped at 0.9 because

. It should be noted that

can be changed according to the neighborhood conditions. As explained earlier,

because

and

. Now, if we consider range condition

, the

, because

, which does not satisfy the neighborhood condition. In this scenario, the

is changed to 0.5 which is the score of

. Thus,

is mapped at 0.5.

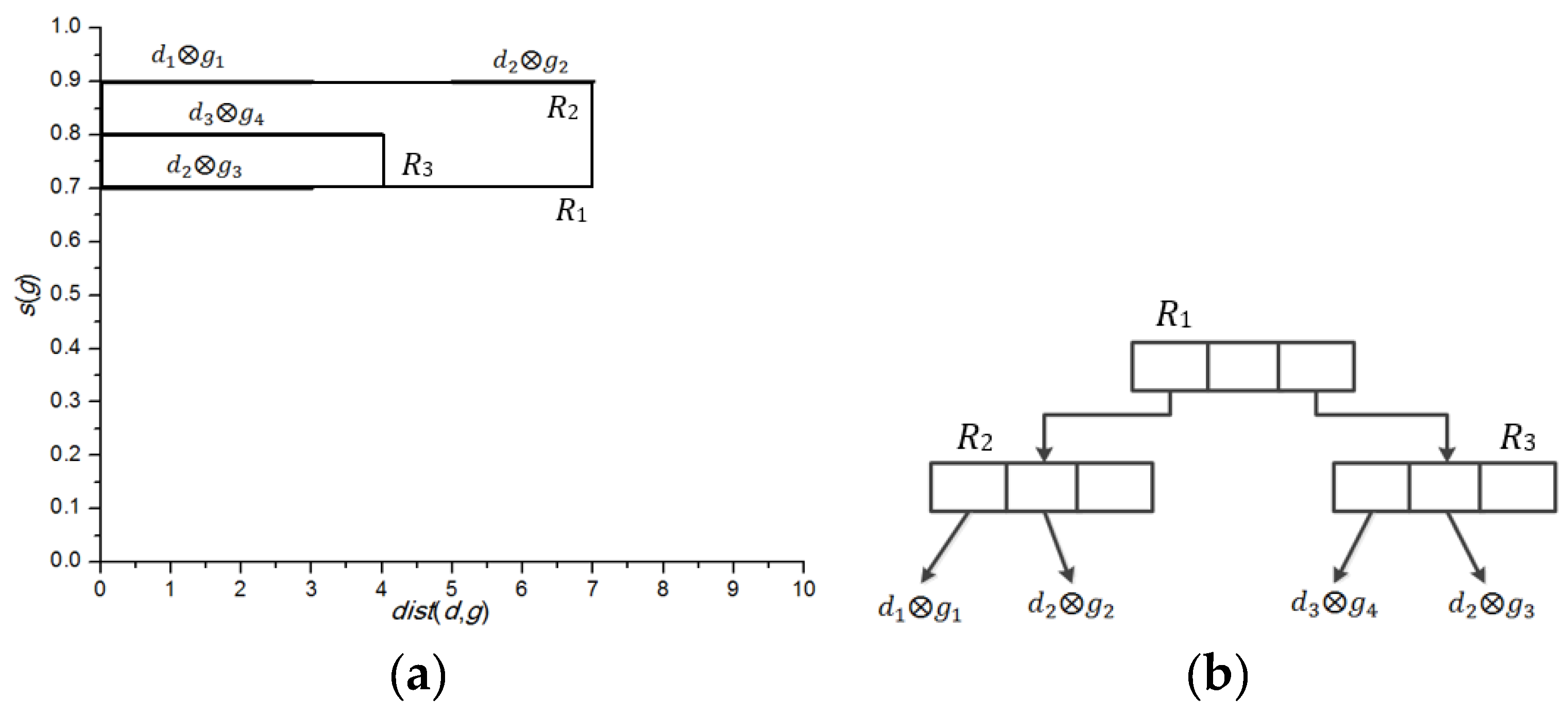

Figure 7a shows the mapping of

to

M.

Figure 7b, on the other hand, shows an R-tree that indexes the four pairs in

, assuming that node capacity of R-tree is set to 3. Therefore, index node

R2 encloses

and

, whereas index node

R3, encloses

and

. Finally, we present Lemma 5, which proves that

is sufficient for determining the partial score of each data object

Before presenting Lemma 5, recall that each feature object

is a dominant feature object and not dominated by any other feature object

.

Lemma 5: For any spatial preference query, is sufficient for determining the partial score of a data object .

Proof: Let us assume that is not sufficient for obtaining the partial score of a data object . This means that there is a feature group that contributes to . Now if or , then , and if , then . However, a pair such that there is another pair and which is equivalent to either and or if and . Hence, the partial score of d is if or , and if . This contradicts our assumption that contributes to . Therefore, is sufficient for obtaining the component score of a data object .

4.4. Limitations of Undirected Algorithms in Directed Road Networks

In contrast to undirected road networks, the network distance between two nodes is not symmetrical in directed road networks, i.e.,

.

Figure 8a shows an undirected road network, where there are two data objects d1 and d2, and two feature objects f1 and f2. To simplify the presentation, we consider a single feature dataset

.

Let us now evaluate the scores of the data objects. Suppose that the neighborhood condition is the range and value of the range constraint r = 3. The range score of d1 is 0.8 because and . Similarly, the range score of d2 is 0.6 because and . Therefore, d1 is the top-1 result with .

Now, consider the directed road network shown in Figure 8b. In this case, the range score of d1 is 0 because no feature object exists within distance r. However, the range score of d2 remains the same because f1 still exists within distance r. Therefore, in the directed road network, d2 is the top-1 result with

. This example clearly demonstrates that an algorithm based on an undirected road network cannot be applied to directed road networks.

The research study closest to our present work was presented by Cho et al. [11]. They proposed an algorithm called ALPS for processing preference queries in undirected road networks, where the data objects in a road sequence are grouped to form a data segment. The motivation behind grouping data objects is that data objects in a sequence are close to each other, so it is more efficient to process them together rather than handling each data object separately. However, ALPS falls short in answering preference queries in directed road networks.

Now, we explain why ALPS cannot process preference queries in directed road networks. Consider the directed road network in Figure 9, where there are four data objects d1, d2, d3 and d4 and two feature objects f1 and f2, which are denoted as triangles and rectangles, respectively. The data objects d1 and d2 lie in the same sequence and are grouped and converted to the data segment

and data objects d3 and d4 are converted to

. Observe that, grouping of

is not valid because no path exists from d3 to d4, as shown in Figure 9.

Another issue is indexing of data segments and feature object pairs in R-tree for directed road networks. Let

denote a pair that consists of a data segment dseg and a feature object f. To index a pair in the R-tree, it is necessary to compute the minimum and maximum distances between the data segment dseg and the feature object f. In

Figure 9, as per ALPS computation

and

. Conversely, the actual

. ALPS computes the minimum and maximum distances between the data segment and the feature object based on the assumption that

, but this assumption is not applicable in a directed road network where

. Thus, ALPS generates an erroneous R-tree, which leads to incorrect results. The above example demonstrates that ALPS does not work for directed road networks. For ALPS to answer preference queries in a directed road network, the method for grouping and computing

and

should be modified to consider the particular orientation of each road segment.

Comparing ALPS and TOPS conceptually, ALPS groups the data objects into data segments, whereas TOPS groups the feature objects. ALPS first groups the data objects into data segments and then prunes the dominated pairs, which may leave redundant pairs in the R-tree. In contrast, TOPS first prunes the pairs to avoid any redundant pair and then groups them based on pivot nodes. Therefore, the query processing time can be higher in ALPS. Similarly, due to a higher number of redundant pairs, ALPS might use more disk space for indexing the skyline sets. However, the index construction time of ALPS can be better than that of TOPS because fewer skyline sets need to be generated due to the grouping of data objects.

5. Top-k Spatial Preference Query Algorithm

In this section, we present the Top-k Spatial Preference Query Algorithm (TOPS) for processing top-k spatial preference queries. TOPS is applicable to all three neighborhood conditions (range, nearest neighbor, and influence), but we focus on the range constraint to simplify the explanation and then present the modifications needed to support the nearest neighbor and influence scores. TOPS processes a top-k preference query by retrieving the qualifying data objects one by one in descending order of their partial scores, which allows it to rapidly produce the set of k data objects with the highest scores.

Algorithm 1 computes the top-k data objects with the highest scores by aggregating the partial scores of the data objects retrieved from each max heap Hi. For each skyline set, we employ a max heap Hi to traverse the data objects in descending order of their component scores. Whenever the method is called, the data object dhigh with the highest component score is popped from the max heap Hi. Let Dk be the current top-k set and Ri be the most recent component score seen in Hi. In addition, TOPS maintains a list of candidate data objects Dc, which may become top-k data objects. Ri is set to the component score of dhigh (line 6), and the lower bound score of dhigh is updated using the aggregate function (line 7). If the number of data objects in Dk is less than k, or the lower bound score of dhigh is greater than the k-th highest score of the data objects in Dk, then dhigh is added to Dk. If dhigh is already in Dc, it is removed from Dc. If the number of data objects in Dk reaches k + 1, the data object with the lowest lower bound score is moved from Dk to Dc (lines 8–12). Then, t is set to the lowest lower bound score among the data objects in Dk (line 13). The upper bound score is computed for each data object in Dc.

| Algorithm 1: TOPS(Hi,k,r) |

| Input: Hi: a max heap with entries in descending order of partial range score, k: number of requested data objects with highest score, r: range constraint |

| Output: Top-k data objects with highest score |

| 1: | /* set of candidate data objects */ |

| 2: | /* current Top-k set */ |

| 3: | /* Recent partial score seen in Hi */ |

| 4: | while ∃Hi such that do |

| 5: | |

| 6: | | /* pop data object with highest score from Hi*/ |

| 7: | | |

| 8: | | | If number of data objects in or then |

| 9: | | | | |

| 10: | | | | if then |

| 11: | | | | if number of data objects in then |

| 12: | | | | and |

| 13: | | | | |

| 14: | | else |

| 15: | | | /* If then add it in Dc*/ |

| 16: | | | for each data object do |

| 17: | | | | |

| 18: | | | | | If then |

| 19: | |

| 20: | If and number of data objects in then |

| 21: | break while statement |

| 22: | Return Dk |

Finally, for each candidate object in Dc, the upper bound score is computed by . The maximum is then set to u (lines 16–19). If then no newly observed data object will end up in Dk. Therefore, the algorithm terminates and returns Dk if and the number of data objects in , or if all the heaps are exhausted (lines 20–22).
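The bound-based termination described above can be sketched in Python as follows. This is a simplified illustration under several assumptions that are not stated in the text: the aggregate function is a plain sum, each max heap Hi is built from a hypothetical dictionary of component scores, the heaps are accessed in round-robin order, and the top-k set is recomputed after each round instead of moving objects between Dk and Dc. The sketch also bounds objects that have not yet been retrieved from any heap by the sum of the Ri values. The function name `tops_sketch` and the sample scores are illustrative only.

```python
import heapq

def tops_sketch(component_scores, k):
    """Return up to k (object, lower bound) pairs with the highest aggregate score.

    component_scores: one dict {object_id: component score} per feature set,
    an assumed stand-in for the max heaps H_i. Assumes k >= 1 and sum aggregation.
    """
    # heapq is a min heap, so scores are negated to pop the largest first.
    heaps = [[(-s, d) for d, s in scores.items()] for scores in component_scores]
    for h in heaps:
        heapq.heapify(h)
    m = len(heaps)
    # recent[i] plays the role of R_i: an upper bound on any score still in heap i.
    recent = [-h[0][0] if h else 0.0 for h in heaps]
    seen = {}  # object -> {heap index: component score already retrieved}

    def ranked():
        lower = {d: sum(parts.values()) for d, parts in seen.items()}
        return lower, heapq.nlargest(k, lower.items(), key=lambda kv: kv[1])

    while any(heaps):
        for i, h in enumerate(heaps):  # one round-robin pass over the heaps
            if h:
                neg, d = heapq.heappop(h)
                seen.setdefault(d, {})[i] = -neg
                recent[i] = -neg if h else 0.0  # an exhausted heap adds nothing more
        lower, top = ranked()
        if len(top) == k:
            t = top[-1][1]  # lowest lower bound inside the current top-k
            top_ids = {d for d, _ in top}
            # Upper bounds: retrieved components plus R_i for each missing component.
            u = max(
                [sum(recent)]  # bound for objects not retrieved from any heap yet
                + [lower[d] + sum(recent[i] for i in range(m) if i not in seen[d])
                   for d in seen if d not in top_ids]
            )
            if t >= u:
                return top  # no other object can still enter the top-k
    return ranked()[1]

# Hypothetical component scores for two feature sets.
cafes = {"d1": 0.875, "d3": 0.75, "d2": 0.5}
restaurants = {"d1": 0.625, "d2": 0.5, "d4": 0.25}
result = tops_sketch([cafes, restaurants], 2)
print(result)
```

The scores returned for the top-k objects are lower bounds at the time of termination. They are sufficient to fix the membership of the result set, although a final pass would be needed if exact scores are required.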

In order to illustrate our proposed algorithm, let us consider the example presented in

Figure 7, which shows the R-tree that indexes the skyline set

that has been constructed using the example in

Figure 2. We recall that hotels correspond to the data objects

and cafes correspond to the feature objects

. Suppose that a client issues the following top-k spatial preference query: “Find two hotels that are associated with a high-grade cafe located within a distance of 4”. In this query, k = 2 and r = 4. After pruning and grouping, the final generated skyline set is shown in

Figure 7a,

. The algorithm checks all the qualifying pairs based on the neighborhood condition

Three pairs

and

are retrieved one at a time from

R, and pushed onto a max heap

H. Thus,

. Finally,

d1 and

d3 are selected as the Top-2 query result because they have scores of 0.9 and 0.8, respectively.

Algorithm 2 returns the data objects one by one in descending order of their partial scores. Initially, the heap Hi contains the root node of an R-tree. Hi holds records rc, each of which is either a data object or an R-tree node. At each step, the record rc with the highest partial score is popped from Hi. If rc is an R-tree node (line 3), the algorithm verifies whether the feature group satisfies the neighborhood condition. If entry s satisfies the neighborhood condition, it is pushed onto Hi (lines 4 and 5). Otherwise, at least one feature object in the group violates the condition, so each feature object needs to be examined individually (line 8). All the qualifying records are inserted into Hi, and the highest feature object score is assigned as the group score s(g) (lines 9 and 10). Finally, when a data object d is found, it is returned as the record with the highest partial score (lines 15 and 17).

Algorithm 2 can be adapted with minor modifications to the nearest neighbor and influence scores. For the nearest neighbor score, the pairs in which f is not the nearest neighbor of d are pruned. Thus, during the construction of , the data objects are flagged to indicate whether or not f is the nearest neighbor of d (a 1 bit if f is the nearest neighbor, and a 0 bit otherwise). For the influence score, the radius r is only used to compute the score, and the score of a feature object is reduced according to its distance from the data object. Therefore, the verification conditions from Algorithm 2 (lines 4 and 8) are removed from the algorithm for the influence score. Thus, for each feature object , the component influence score is computed with respect to feature object and a corresponding entry is added to .
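As a small illustration of the influence score, the sketch below uses the exponential decay form that is common in the spatial preference query literature, in which a feature score is halved for every r units of distance. This particular formula is an assumption made here for illustration and may differ from the exact definition used in this paper. The function names are hypothetical.

```python
def influence_score(feature_score, distance, r):
    """Assumed decay form: the score is halved for every r units of distance."""
    return feature_score * 2.0 ** (-distance / r)

def component_influence_score(features, r):
    """Best influence score over all feature objects, given as (distance, score)."""
    return max((influence_score(s, dist, r) for dist, s in features), default=0.0)

# Hypothetical feature objects around one data object, with r = 4.
features = [(4.0, 0.8), (0.0, 0.5)]
best = component_influence_score(features, 4.0)
print(best)
```

Because no feature object is excluded by the radius, every feature object contributes a candidate score, which is consistent with dropping the range verification steps for this score type.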

| Algorithm 2: NextHighestRangeScore(Hi,r) |

| Input: Hi: a max heap with entries in descending order of partial range score, r: range constraint |

| Output: The next data object in Hi with highest partial score |

| 1: | |

| 2: | while do |

| 3: | for each record c do |

| 4: | if then |

| 5: | Push record s to Hi |

| 6: | else |

| 7: | for each feature object do |

| 8: | if then |

| 9: | Push record s to Hi |

| 10: | compute |

| 11: | | end if |

| 12: | | end for |

| 13: | | end if |

| 14: | | end for |

| 15: | | |

| 16: | end while |

| 17: | return rc |
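The best-first traversal behind Algorithm 2 can be sketched in Python as follows. This is a toy illustration rather than the exact procedure: the classes `Pair` and `Node` are hypothetical stand-ins for R-tree entries, upper bounds are recomputed recursively instead of being stored in the index, and a set of already returned data objects is kept so that each data object is reported only once. The sample tree and its distances and scores are invented for the example.

```python
import heapq
from dataclasses import dataclass
from itertools import count

@dataclass
class Pair:
    """Leaf entry: a data object paired with one feature group."""
    obj: str
    features: list  # [(network distance from obj, feature score), ...]

@dataclass
class Node:
    """Toy stand-in for an internal R-tree node."""
    children: list  # Pair or Node instances

def upper_score(rec):
    """Upper bound on the partial score of any data object below rec."""
    if isinstance(rec, Pair):
        return max(score for _, score in rec.features)
    return max(upper_score(child) for child in rec.children)

def next_highest_range_score(root, r):
    """Yield (data object, partial score) in descending order of partial score."""
    tie = count()  # tie-breaker so heap entries never compare records directly
    heap = [(-upper_score(root), next(tie), root)]
    returned = set()
    while heap:
        neg, _, rec = heapq.heappop(heap)
        if isinstance(rec, Node):
            for child in rec.children:
                heapq.heappush(heap, (-upper_score(child), next(tie), child))
        elif isinstance(rec, Pair):
            if max(dist for dist, _ in rec.features) <= r:
                group_score = -neg  # whole group is in range, bound is exact
            else:
                in_range = [s for dist, s in rec.features if dist <= r]
                if not in_range:
                    continue  # no feature object satisfies the range condition
                group_score = max(in_range)
            heapq.heappush(heap, (-group_score, next(tie), rec.obj))
        elif rec not in returned:  # a data object whose score is now exact
            returned.add(rec)
            yield rec, -neg

# Hypothetical index over four hotels and their cafe groups, with r = 4.
tree = Node([
    Node([Pair("d1", [(2, 0.9), (5, 0.95)]), Pair("d2", [(3, 0.6)])]),
    Node([Pair("d3", [(4, 0.8)]), Pair("d4", [(6, 0.7)])]),
])
results = list(next_highest_range_score(tree, 4))
print(results)
```

Writing the routine as a generator lets a caller such as Algorithm 1 pull one data object at a time and stop as soon as its termination condition holds.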

Incremental Maintenance

In this section, we discuss the incremental maintenance of the skyline set when data and feature objects are inserted, deleted, or updated. We use an adaptation of the branch-and-bound skyline (BBS) dynamic skyline algorithm for the incremental maintenance of the skyline set. First, we update the dominant set, which is retrieved during the pruning phase, and the skyline set is then updated based on the updated dominant set. Once the dominant set is updated, updating the groups and the skyline set is straightforward. Insertions and deletions of data objects are fairly simple and cost-effective. When a new data object dnew is inserted into D, all of its dominant pairs are added to the dominant set, and the feature objects with the same pivot node form a feature group. Next, the resulting pairs are inserted into the skyline set. If a data object ddeleted is deleted, its pairs are deleted from both the skyline set and the dominant set. Updates to the spatial location of a data object d are processed as a deletion followed by an insertion.

Next, we discuss the insertion, deletion and update processes of feature objects. The scores of feature objects are usually updated more frequently than their spatial locations. Therefore, the most frequent maintenance operation is updating the score of a feature object. Updating the score of a feature object can potentially affect the score of a group, which may affect the materialized skyline set. However, the updating cost is not very high due to the dominance relationship. Let us assume that the score of a feature object fupdated has been updated. As a consequence, one of the following two cases occurs: (1) the dominant set is still valid, or (2) the dominant set is no longer valid. In the first case, the materialized skyline sets are also valid, so no maintenance is needed and the score of fupdated is simply updated. If fupdated has the highest score in the group, then the score of the group is also updated. In the second case, we check the dominance relationship. Only the score of the feature object is updated, so the maintenance algorithm simply performs a static dominance check to update the set. First, we check whether , and the pairs are then removed from the set. Next, we check whether any feature object is dominated by fupdated. If , then the pairs are removed from the sets. Finally, the information regarding the groups (i.e., max/min distance and s(g)) is modified based on the updated set and is generated.

Let us consider the addition of a new feature object fnew to the feature object set Fi. First, we need to execute the dominance check. If a pair is dominated by any other pair in the set, this does not affect the dominant set, so it is simply discarded. However, if it is not dominated by any other pair, then the maintenance algorithm will issue a query to retrieve all of the pairs that are dominated by . If no pair is retrieved, then is simply added to the set; otherwise, the maintenance algorithm will remove all of the existing pairs from that are dominated by . Similarly, the information regarding groups is changed based on the updated set and is generated.
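The insertion case above can be sketched in Python as follows. The sketch assumes that each pair of a data object and a feature object is summarized as a (distance, score) tuple, and that one pair dominates another when it is at least as close and has at least as high a score, with the two pairs not being identical. This dominance definition and the function names are assumptions made for illustration.

```python
def dominates(p, q):
    """p and q are (distance, score) pairs for the same data object."""
    return p[0] <= q[0] and p[1] >= q[1] and p != q

def insert_pair(dominant_set, new_pair):
    """Return the dominant set after offering new_pair for insertion."""
    if any(dominates(p, new_pair) for p in dominant_set):
        return list(dominant_set)  # the new pair is dominated and is discarded
    # Otherwise drop every existing pair that the new pair dominates, then add it.
    return [p for p in dominant_set if not dominates(new_pair, p)] + [new_pair]

# Hypothetical dominant set of one data object.
current = [(2.0, 0.5), (4.0, 0.8)]
discarded = insert_pair(current, (3.0, 0.4))  # dominated by (2.0, 0.5)
replaced = insert_pair(current, (1.0, 0.9))   # dominates both existing pairs
extended = insert_pair(current, (3.0, 0.7))   # incomparable with both pairs
print(discarded, replaced, extended)
```

A linear scan is used here for clarity. The text instead issues a query against the index to retrieve the dominated pairs, which avoids scanning the whole set.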

Next, we explain the maintenance of the skyline set after the deletion of feature objects. First, we need to check whether . If , then no further processing is required; otherwise, the maintenance algorithm is called. For incremental maintenance, we need to determine the set of pairs that are exclusively dominated by . If such pairs exist, then the dominant pairs are computed for these pairs and added to the set; otherwise, is simply removed from the set. Finally, as mentioned earlier, the information regarding groups is modified based on the updated set and is generated.

Finally, we analyze the time complexities of adding, deleting, and updating a data object and a feature object. As mentioned earlier, incremental maintenance is performed on instead of ; therefore, we analyze the time complexity in terms of updating and maintaining . The time complexity of adding a data object is , where m is the number of feature datasets. Specifically, the dominant set of is generated for each of the m feature datasets, which has a time complexity of . Then, the dominant set is updated, which has a time complexity of . The time complexity of deleting a data object is . Thus, the time complexity of updating a data object is because updating a data object can be handled as a deletion of the data object followed by an insertion.

The time complexity of adding a feature object is . This is because for each pair , the dominance check is performed to verify whether dominates or whether is dominated by . Next, we analyze the time complexity of deleting a feature object . Let be the set of pairs that are exclusively dominated by . For each data object , the exclusive dominance region for is determined, which has a time complexity of . Then, the dominant set for the pairs that are exclusively dominated by is determined, which has a time complexity of . Thus, the time complexity of deleting a feature object is . Lastly, the time complexity of updating a feature object is because the update (i.e., location or score update) of a feature object can be handled as a deletion followed by an insertion.