Reflective Noise Filtering of Large-Scale Point Cloud Using Multi-Position LiDAR Sensing Data

Abstract

1. Introduction

- To the best of our knowledge, this study is the first to remove reflective noise regions from large-scale point clouds that contain only single-echo reflection values.

- Most current methods rely on statistical principles and remove only part of the noise. These conventional methods cannot differentiate reflected noise from normal objects, whereas the proposed method can.

- The proposed method can be applied to large-scale point clouds. The methods used in previous studies handled only the point clouds of individual objects or areas with sparse point density. The proposed method denoises large-scale point clouds by comparing sensing data acquired from multiple positions.

2. Related Work

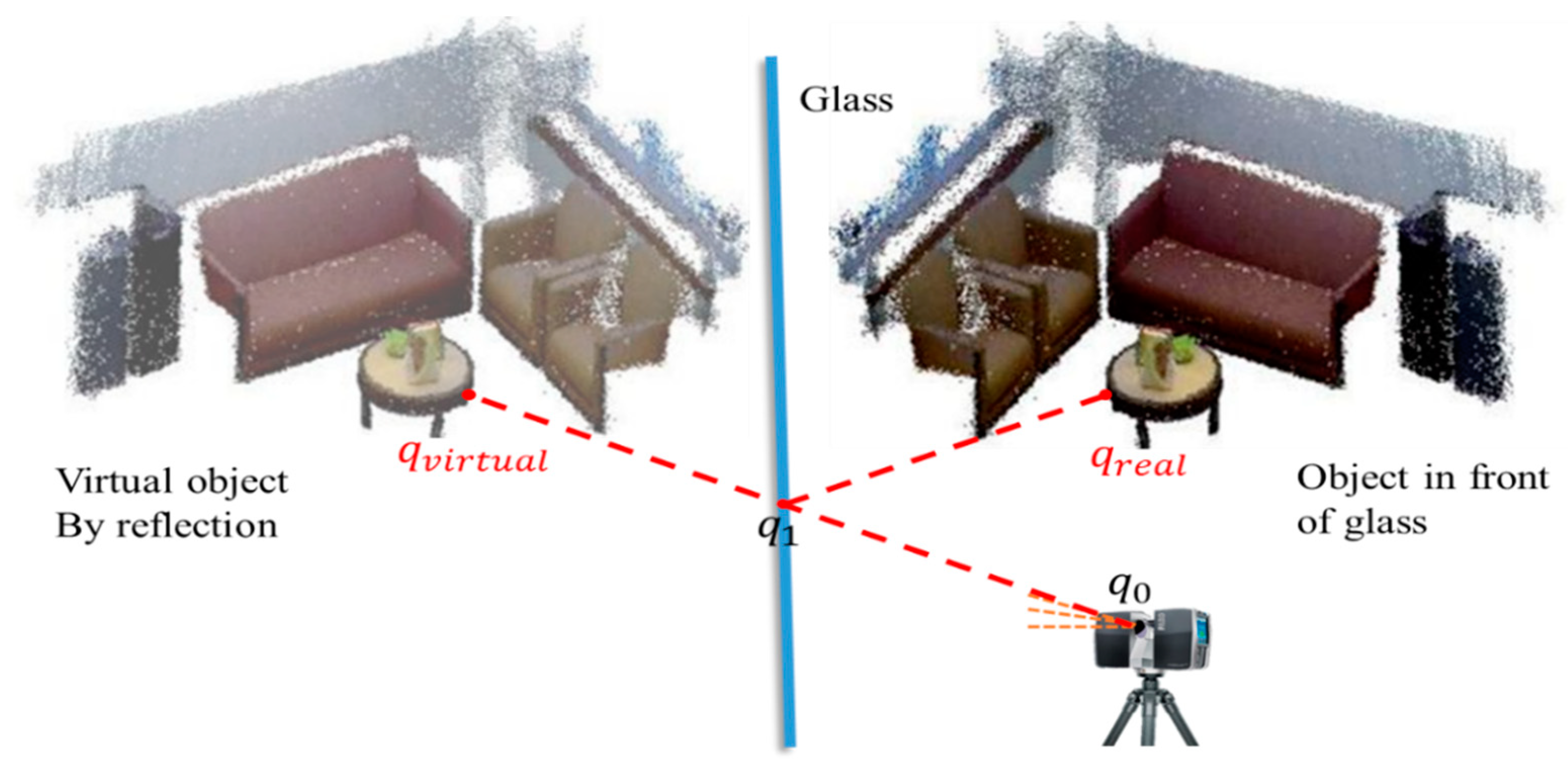

3. Proposed Method

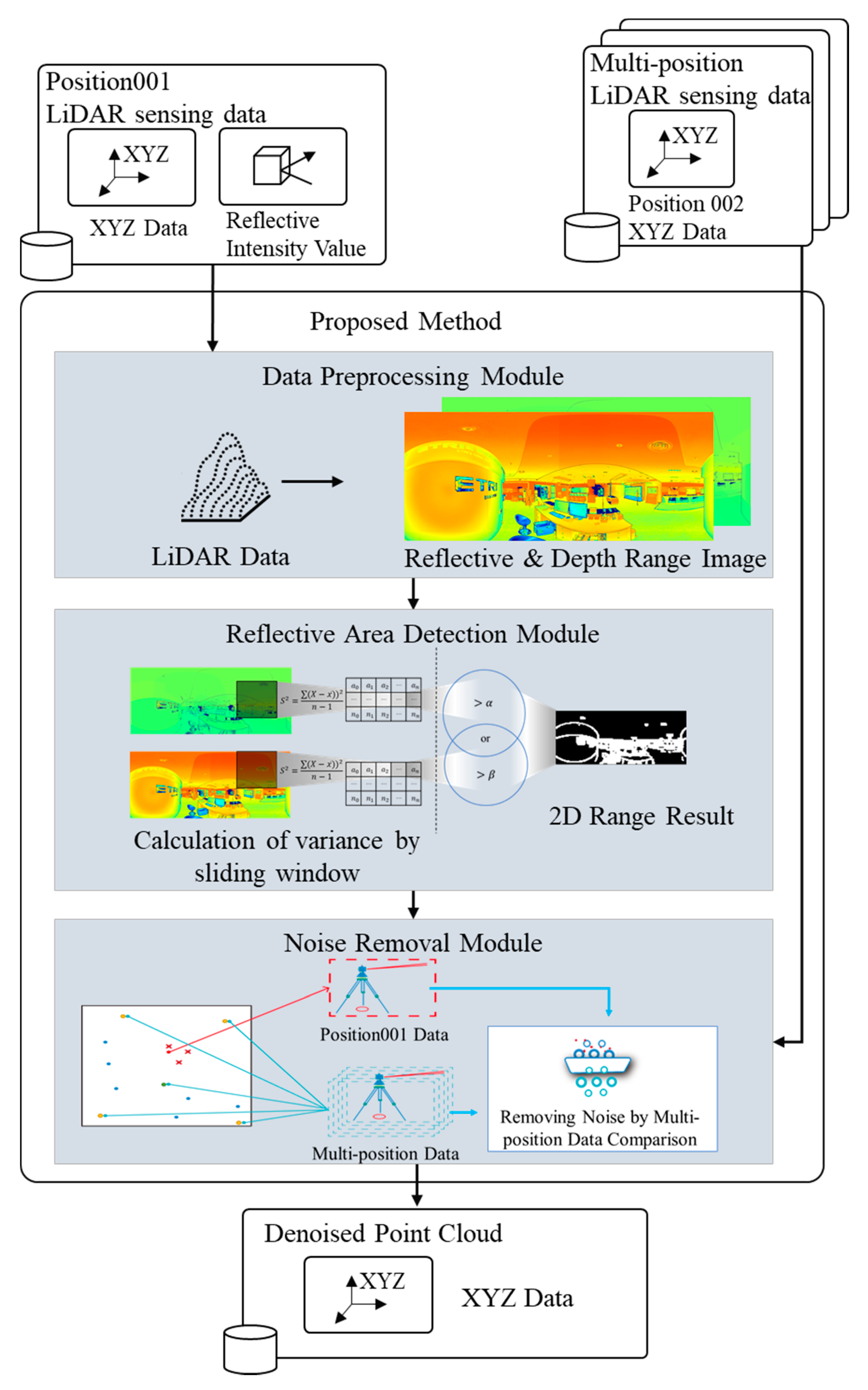

3.1. Overview

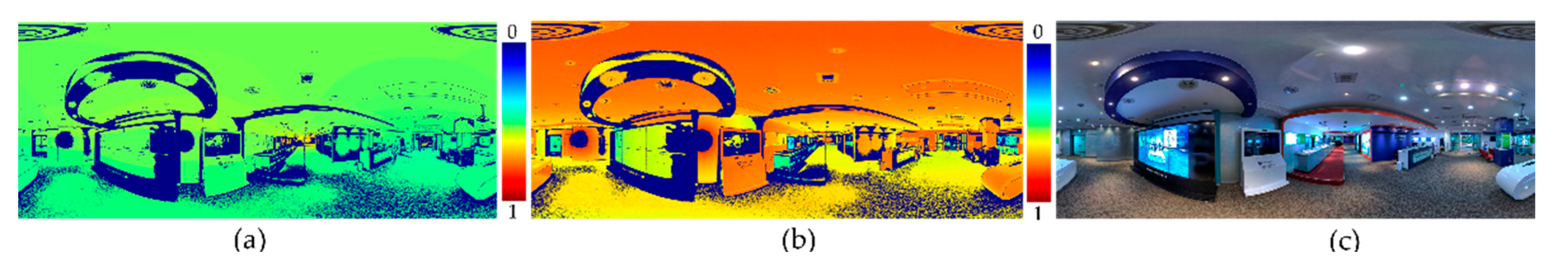

3.2. Data Preprocessing Module

3.3. Reflective Area Detection Module

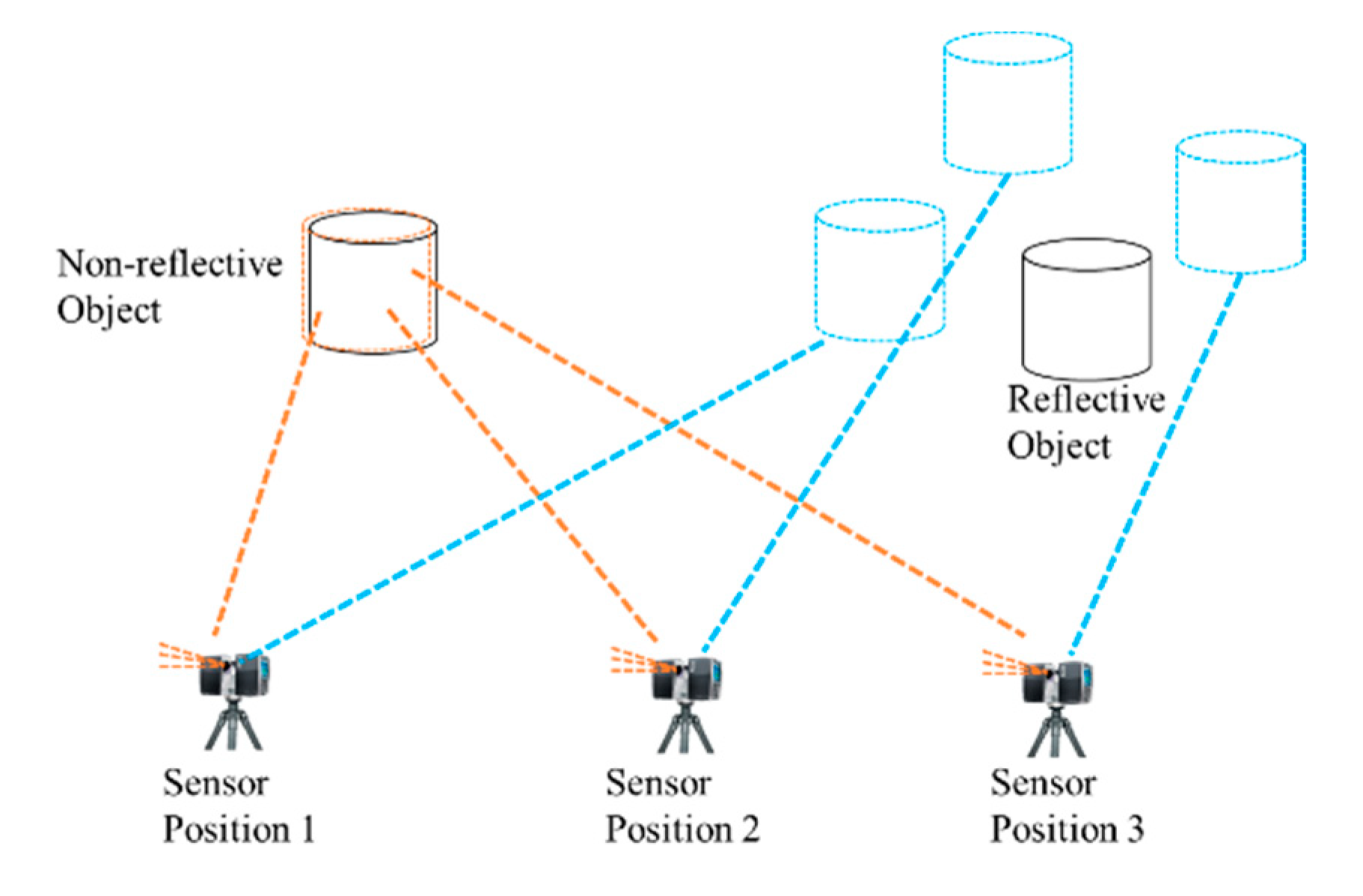

3.4. Noise Removal Module

Algorithm 1. Noise removal using multi-position LiDAR sensing data comparison

Input: Threshold_of_deletes_nearest_sensor γ, Matching_point_threshold δ, Number_nearest_sensor ε, Radius_distance ζ, Peripheral LiDAR sensor position list, Target LiDAR sensor position ()

Output: DenoisePointCloud

- for each target sensor reflective area result from target sensor position () do
  - Load peripheral LiDAR position data from LiDAR sensor position
  - Sort peripheral LiDAR position data from LiDAR sensor position
  - Delete peripheral sensor positions around the target sensor () by threshold γ
  - Select peripheral sensors by threshold ε
  - Load peripheral sensor point clouds from peripheral sensor positions into k-d tree
  - for each noise point do
    - Find original location from point cloud
    - Search noise point in k-d tree
    - if other points are present within threshold ζ and number of points searched > δ then
      - Add to normal points
    - else
      - Add to noise points
  - end for
  - for each normal point do
    - Search point cloud location using normal point index
    - Save denoised point cloud
  - end for
- end for
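The core comparison step of Algorithm 1 can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation. It makes three assumptions that the algorithm listing does not state: that all scans are already registered in one common coordinate frame, that "delete by threshold γ" means skipping the γ peripheral sensors closest to the target, and that "select by threshold ε" means keeping the next ε sensors. The function names `select_peripheral_sensors` and `filter_candidates` are hypothetical.

```python
import numpy as np
from scipy.spatial import cKDTree


def select_peripheral_sensors(target_pos, sensor_positions, gamma, epsilon):
    """Return indices of the peripheral sensors used for comparison.

    Assumption: sensors are sorted by distance to the target, the `gamma`
    closest ones are skipped, and the next `epsilon` are kept.
    """
    dists = np.linalg.norm(sensor_positions - target_pos, axis=1)
    order = np.argsort(dists)
    return order[gamma:gamma + epsilon]


def filter_candidates(candidates, peripheral_clouds, delta, zeta):
    """Split candidate noise points into (normal_points, noise_points).

    A candidate is treated as a normal point when more than `delta` points
    from the peripheral scans lie within radius `zeta` of it. Otherwise it
    stays classified as noise.
    """
    # Build one k-d tree over all selected peripheral point clouds.
    tree = cKDTree(np.vstack(peripheral_clouds))
    # One list of neighbour indices per candidate point.
    neighbours = tree.query_ball_point(candidates, r=zeta)
    counts = np.array([len(n) for n in neighbours])
    is_normal = counts > delta
    return candidates[is_normal], candidates[~is_normal]


if __name__ == "__main__":
    # Two real surface points seen by two other scans, plus one ghost point
    # that no other scan observes.
    real = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
    ghost = np.array([[5.0, 5.0, 5.0]])
    normal, noise = filter_candidates(
        np.vstack([real, ghost]), [real, real + 0.001], delta=1, zeta=0.01
    )
    print(len(normal), "normal,", len(noise), "noise")
```

The idea behind the comparison is that a real surface is observed from several scanner positions, while a mirrored ghost point is expected to appear only in the scan that saw the reflection, so it has no neighbours in the other scans.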

4. Experiments and Evaluation

4.1. Data Acquisition

4.2. Generation of Ground Truth Data and Experimental Environment

4.3. Noise Detection and Performance

4.4. Noise Detection and Performance

5. Discussion and Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Scanner Settings Name | Parameters |
|---|---|
| Scan Angular Area (Vertical) | |
| Scan Angular Area (Horizontal) | |
| Resolutions | |
| Scanner Distance Range | 130 m |
| Horizontal Motor Speed Factor | 1.02 |

| Filter Name | Parameter Name | Parameter Value |
|---|---|---|
| Proposed Method | Sliding window size | 100 |
| | Stride | 50 |
| | Depth variance threshold | 0.2 |
| | Reflection variance threshold | 10,000 |
| | Threshold of deletes nearest sensor γ | 4 |
| | Matching point threshold δ | 1 |
| | Nearest neighbor number ε | 8 |
| | Radius distance ζ | 0.01 |
| FARO Dark Scan Point Filter | Reflectance Threshold | 900 |
| FARO Distance Filter | Minimum Distance | 0 |
| | Maximum Distance | 200 |
| FARO Stray Point Filter | Grid Size | 3 px |
| | Distance Threshold | 0.02 |
| | Allocation Threshold | 50% |

| Sensor Number | Size of Range Image | Total Number of Points | Total Number of Noise Points |
|---|---|---|---|
| S001 | 4268 | 44,088,440 | 128,601 |
| S002 | 4268 | 44,088,440 | 139,343 |
| S003 | 4268 | 44,122,584 | 80,130 |
| S004 | 4268 | 44,088,440 | 128,456 |
| S005 | 4268 | 44,122,584 | 178,147 |
| S006 | 4268 | 44,079,904 | 412,792 |
| S007 | 4268 | 44,088,440 | 283,019 |
| S008 | 4268 | 44,088,440 | 133,439 |
| S009 | 4268 | 44,088,440 | 42,168 |
| S010 | 4268 | 44,088,440 | 109,443 |
| S011 | 4268 | 44,114,048 | 137,085 |
| S012 | 4268 | 44,096,976 | 69,148 |
| S013 | 4268 | 44,088,440 | 77,341 |
| S014 | 4268 | 44,105,512 | 132,483 |
| S015 | 4268 | 44,088,440 | 98,729 |

Accuracy (the higher, the better):

| Sensor Number | FARO Result | Our Result |
|---|---|---|
| S001 | 0.95351 | 0.97139 |
| S002 | 0.93753 | 0.98322 |
| S003 | 0.94718 | 0.98905 |
| S004 | 0.95088 | 0.99058 |
| S005 | 0.93813 | 0.98503 |
| S006 | 0.92625 | 0.98012 |
| S007 | 0.93602 | 0.98734 |
| S008 | 0.94119 | 0.98779 |
| S009 | 0.94173 | 0.98412 |
| S010 | 0.93568 | 0.98917 |
| S011 | 0.94076 | 0.98981 |
| S012 | 0.95078 | 0.97774 |
| S013 | 0.94661 | 0.97318 |
| S014 | 0.93407 | 0.97917 |
| S015 | 0.94165 | 0.98947 |
| Average | 0.94146 | 0.98381 |

| Sensor Number | ODR (FARO Result) | ODR (Our Result) | IDR (FARO Result) | IDR (Our Result) | FPR (FARO Result) | FPR (Our Result) | FNR (FARO Result) | FNR (Our Result) |
|---|---|---|---|---|---|---|---|---|
| S001 | 0.16994 | 0.55673 | 0.95580 | 0.97261 | 0.04419 | 0.02738 | 0.83005 | 0.44326 |
| S002 | 0.18977 | 0.69611 | 0.93990 | 0.98413 | 0.06009 | 0.01586 | 0.81022 | 0.30389 |
| S003 | 0.23453 | 0.86457 | 0.94847 | 0.98928 | 0.05152 | 0.01071 | 0.76546 | 0.13543 |
| S004 | 0.22589 | 0.79470 | 0.95300 | 0.99115 | 0.04699 | 0.00884 | 0.77410 | 0.20530 |
| S005 | 0.18062 | 0.80563 | 0.94121 | 0.98576 | 0.05879 | 0.01423 | 0.81938 | 0.19436 |
| S006 | 0.14959 | 0.72257 | 0.93359 | 0.98256 | 0.06640 | 0.01744 | 0.85040 | 0.27742 |
| S007 | 0.15795 | 0.65505 | 0.94104 | 0.98948 | 0.05895 | 0.01051 | 0.84204 | 0.34494 |
| S008 | 0.12123 | 0.82198 | 0.94368 | 0.98829 | 0.05631 | 0.01170 | 0.87876 | 0.17801 |
| S009 | 0.16194 | 0.84753 | 0.94248 | 0.98425 | 0.05751 | 0.01574 | 0.83805 | 0.15246 |
| S010 | 0.05995 | 0.76042 | 0.93785 | 0.98974 | 0.06214 | 0.01025 | 0.94004 | 0.23957 |
| S011 | 0.05431 | 0.84133 | 0.94353 | 0.99027 | 0.05647 | 0.00972 | 0.94568 | 0.15866 |
| S012 | 0.16055 | 0.89068 | 0.95203 | 0.97788 | 0.04797 | 0.02211 | 0.83944 | 0.10931 |
| S013 | 0.11603 | 0.82901 | 0.94807 | 0.97344 | 0.05192 | 0.02656 | 0.88396 | 0.17098 |
| S014 | 0.22899 | 0.80661 | 0.93619 | 0.97969 | 0.06380 | 0.02031 | 0.77100 | 0.19338 |
| S015 | 0.18443 | 0.79391 | 0.94335 | 0.98991 | 0.05664 | 0.01008 | 0.81556 | 0.20608 |
| Average | 0.15971 | 0.77912 | 0.94401 | 0.98456 | 0.05597 | 0.01542 | 0.84027 | 0.22087 |
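The abbreviations in the table above are not defined in this text. The sketch below assumes the usual reading: ODR is the outlier (noise) detection rate, IDR the inlier detection rate, FPR the false positive rate, and FNR the false negative rate, with noise treated as the positive class. The tabulated values are consistent with this reading, since in every row ODR + FNR and IDR + FPR each sum to approximately 1. The names `Confusion` and `detection_rates` are hypothetical, and the counts in the example are made up for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confusion:
    """Point counts, treating noise (outlier) as the positive class."""
    tp: int  # noise points flagged as noise
    fp: int  # normal points flagged as noise
    tn: int  # normal points kept as normal
    fn: int  # noise points kept as normal


def detection_rates(c: Confusion) -> dict:
    """Compute ODR, IDR, FPR, FNR, and accuracy from confusion counts."""
    outliers = c.tp + c.fn  # ground-truth noise points
    inliers = c.tn + c.fp   # ground-truth normal points
    return {
        "ODR": c.tp / outliers,
        "IDR": c.tn / inliers,
        "FPR": c.fp / inliers,
        "FNR": c.fn / outliers,
        "accuracy": (c.tp + c.tn) / (outliers + inliers),
    }


# Illustrative counts only, not taken from the experiments.
rates = detection_rates(Confusion(tp=8, fp=1, tn=89, fn=2))
print(rates)
```

Because noise points make up well under 1% of each scan, accuracy is dominated by the inlier class, which is why ODR and FNR are reported alongside it.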

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Gao, R.; Park, J.; Hu, X.; Yang, S.; Cho, K. Reflective Noise Filtering of Large-Scale Point Cloud Using Multi-Position LiDAR Sensing Data. Remote Sens. 2021, 13, 3058. https://0-doi-org.brum.beds.ac.uk/10.3390/rs13163058
